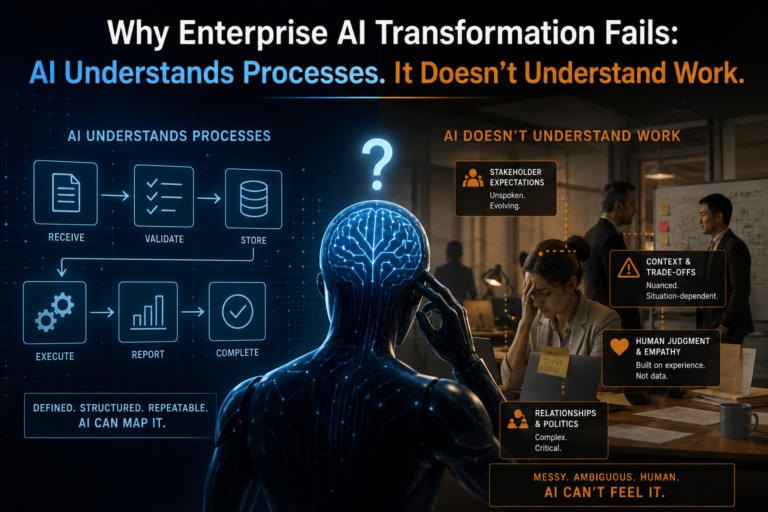

The hidden reason digital transformation breaks when AI enters the enterprise

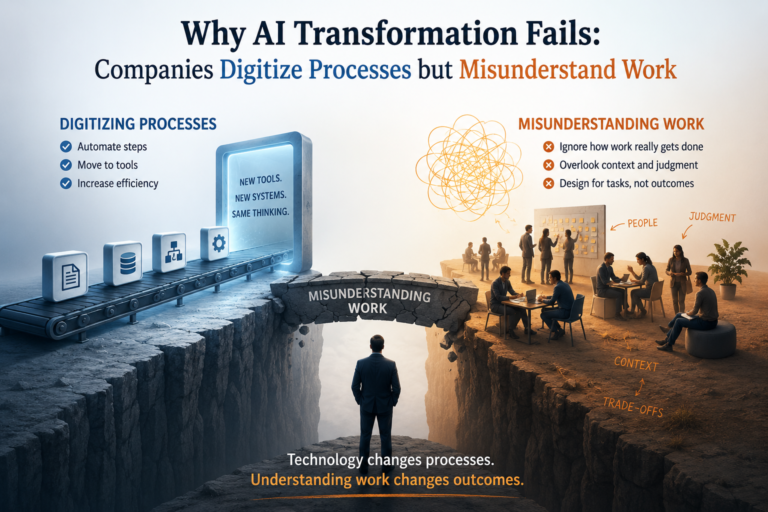

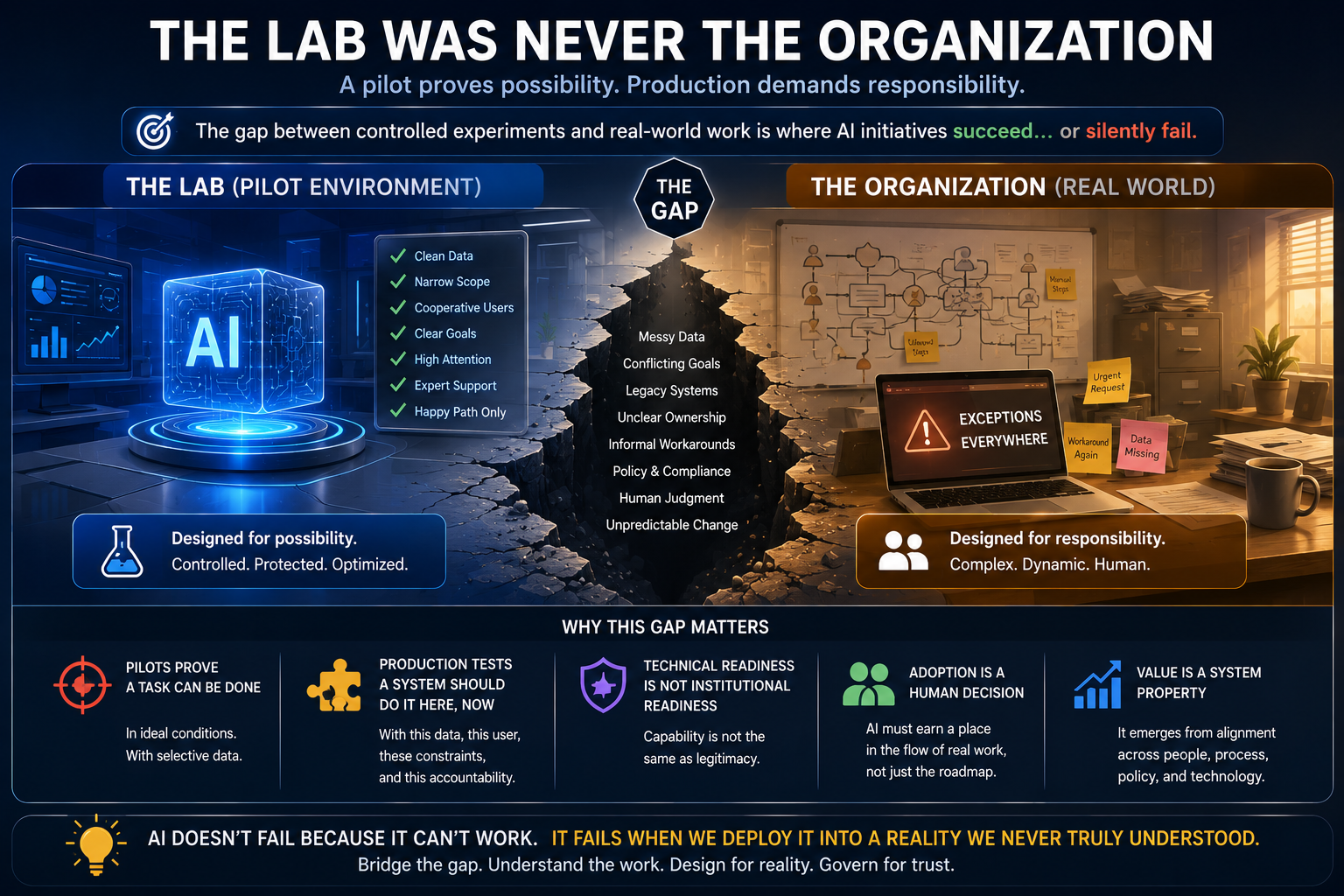

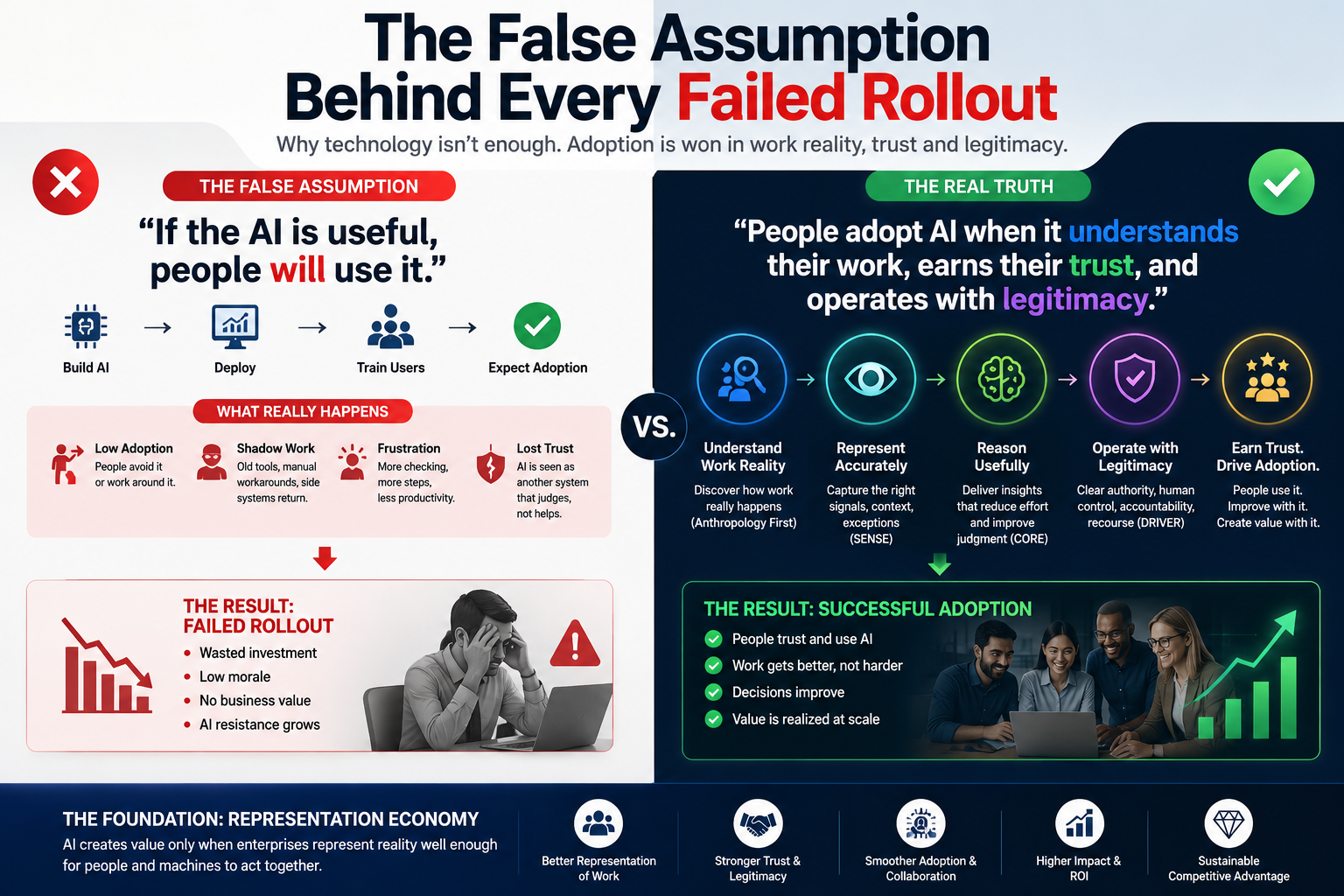

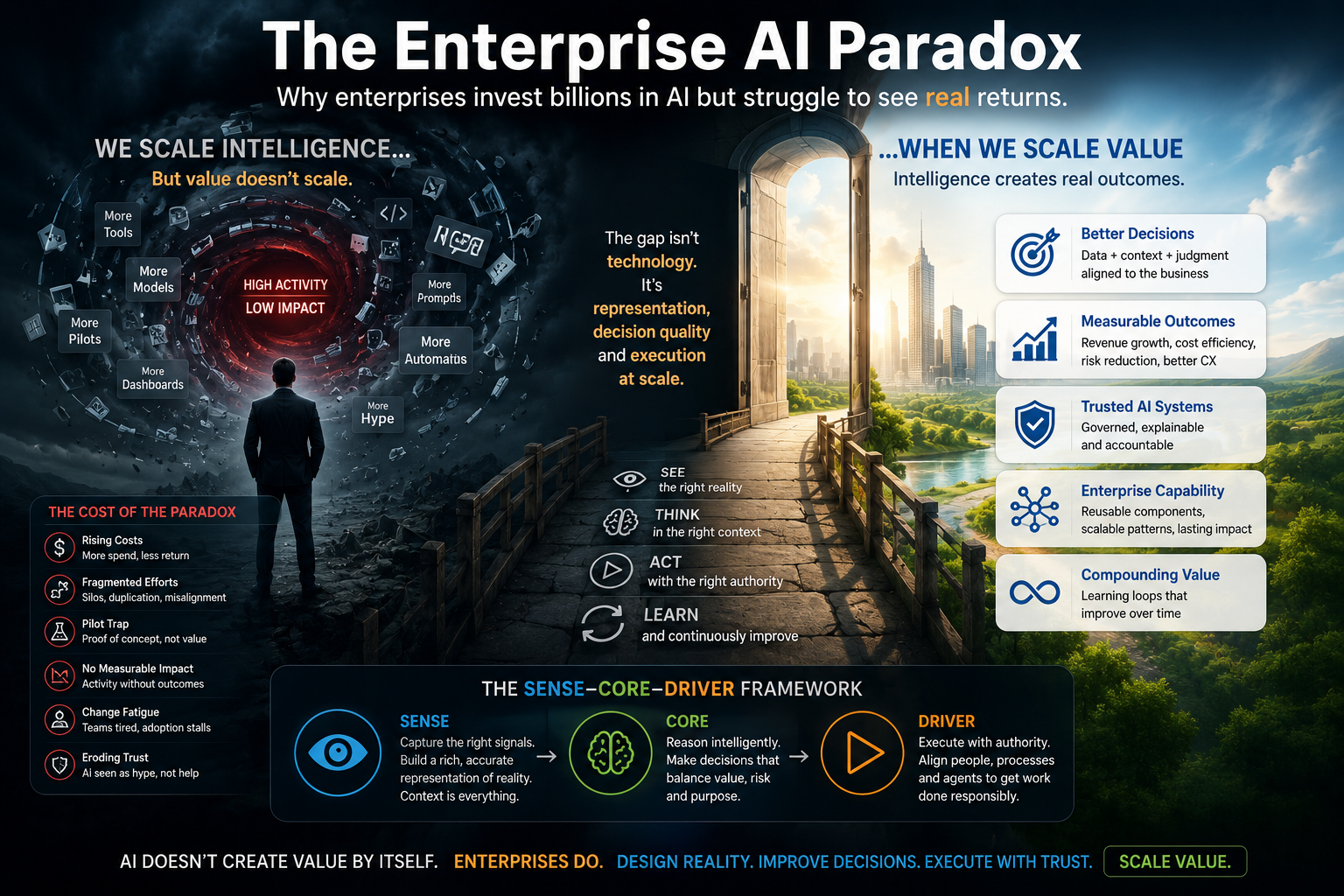

Most companies did not fail at digital transformation because they lacked technology.

They failed because they digitized processes without fully understanding work.

AI is now exposing that mistake.

For more than two decades, enterprises have invested in workflow systems, CRM platforms, ERP modernization, cloud migration, mobile apps, analytics dashboards, automation tools, and digital operating models. Many of these efforts looked successful on paper. Forms moved online. Approvals became digital. Customer interactions were captured. Documents became searchable. Dashboards multiplied. Processes became more visible.

Then AI arrived.

Suddenly, companies expected these digital foundations to become intelligent. They wanted AI agents to act on workflows, copilots to support employees, generative AI to summarize institutional knowledge, predictive systems to improve decisions, and autonomous tools to reduce manual effort.

But something awkward happened.

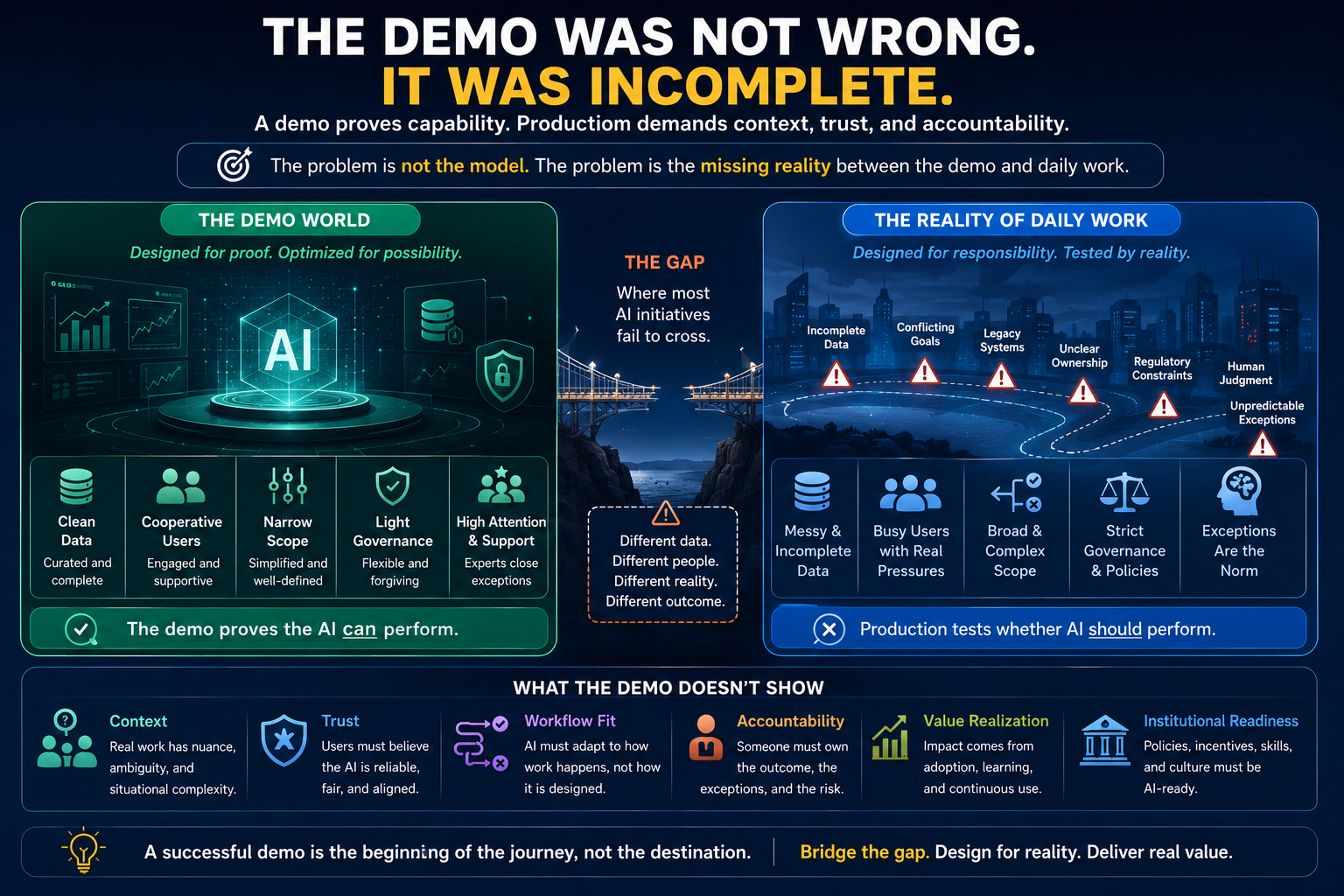

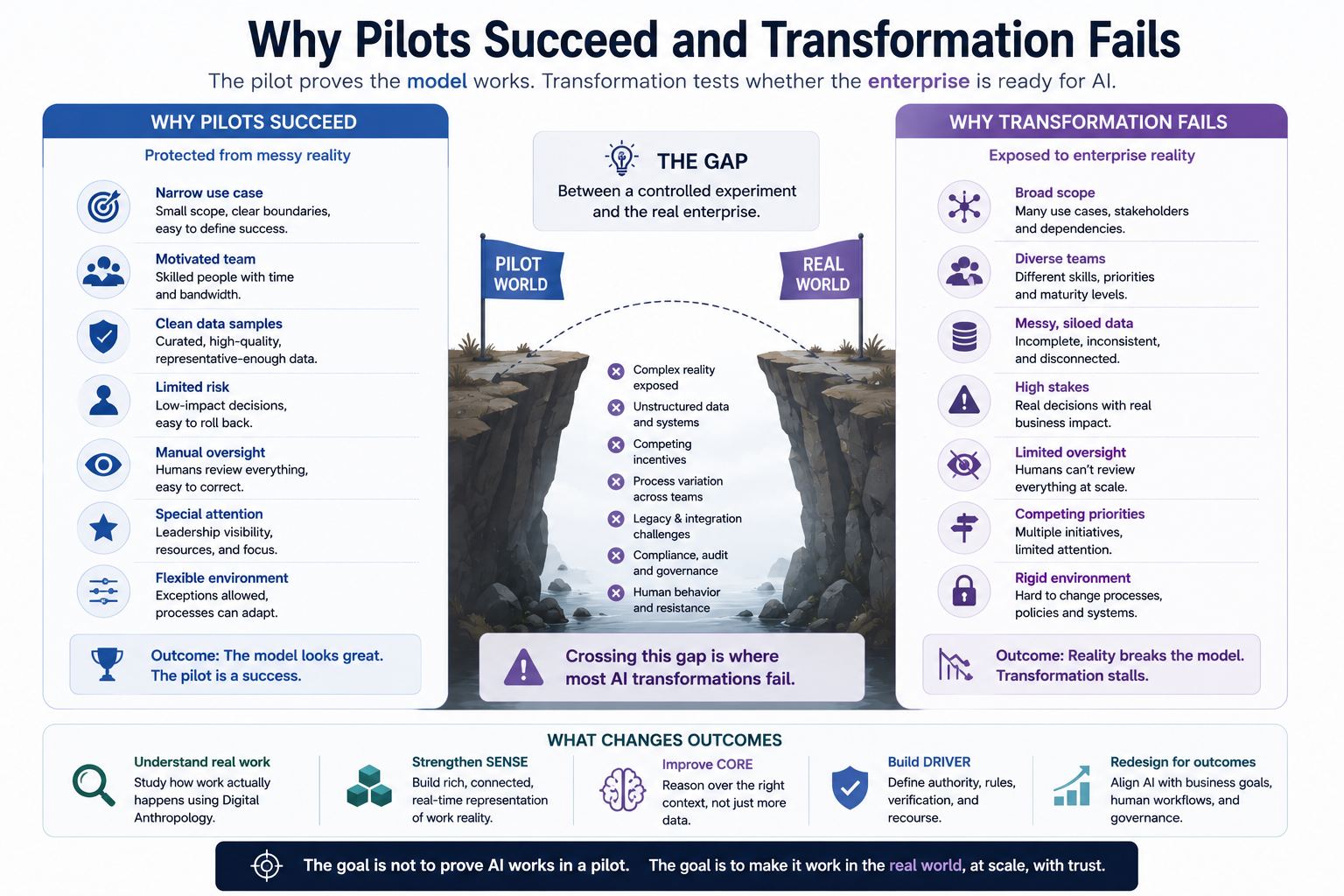

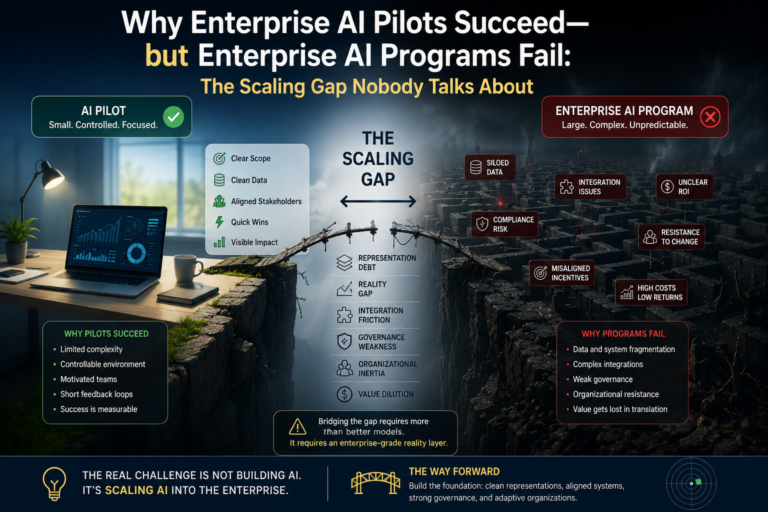

The AI worked in demos. It worked in pilots. It worked in narrow use cases.

Yet it struggled to transform the enterprise.

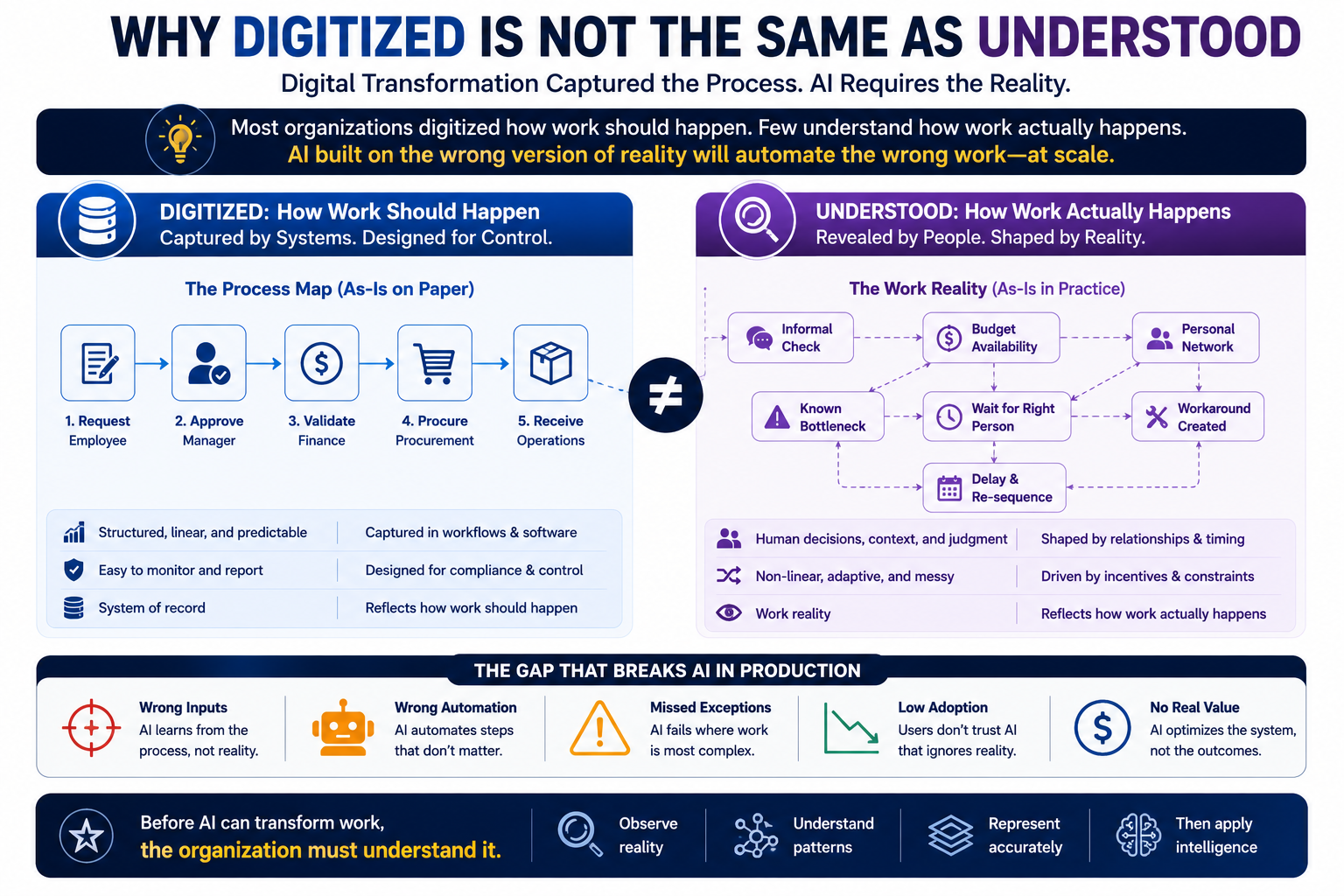

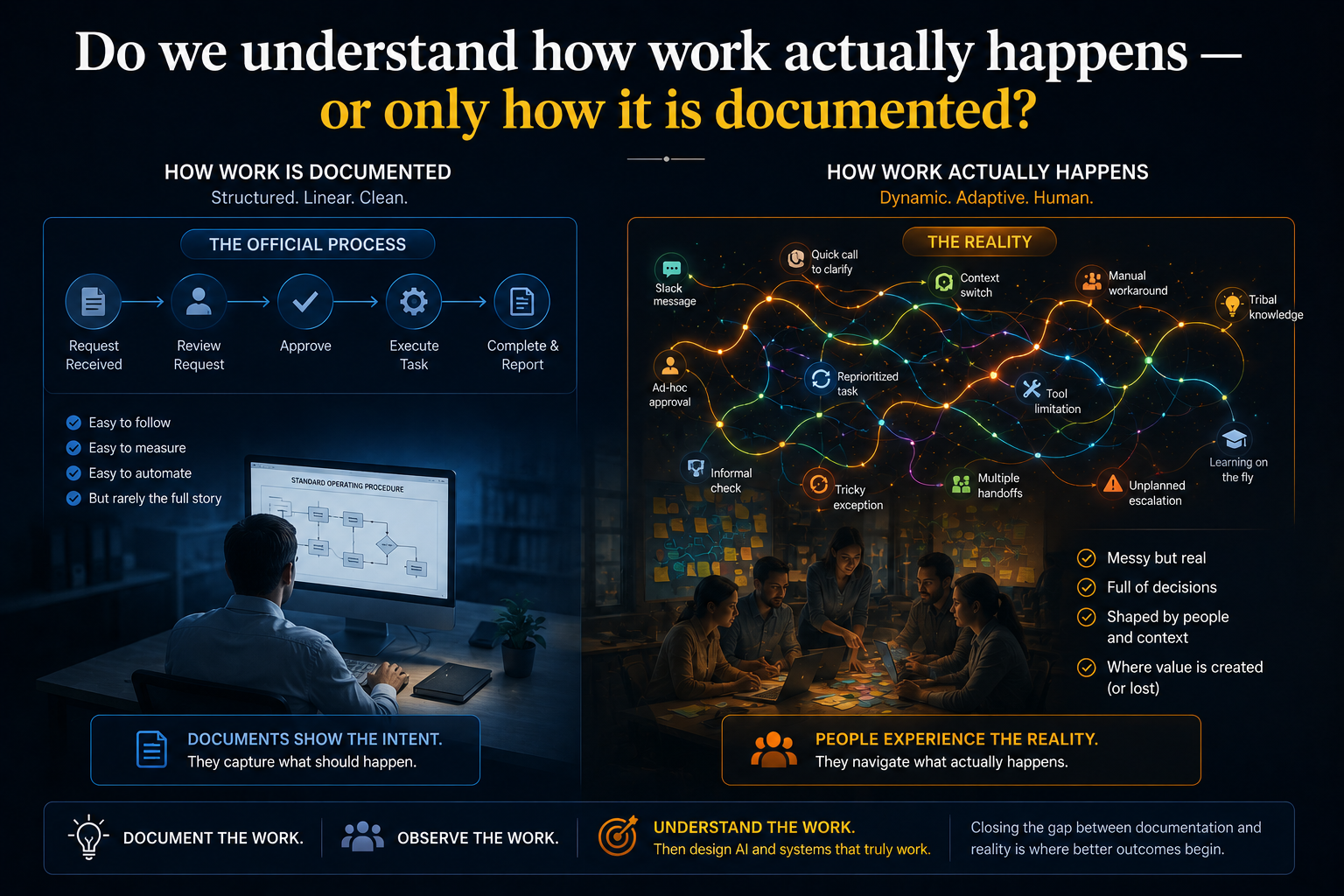

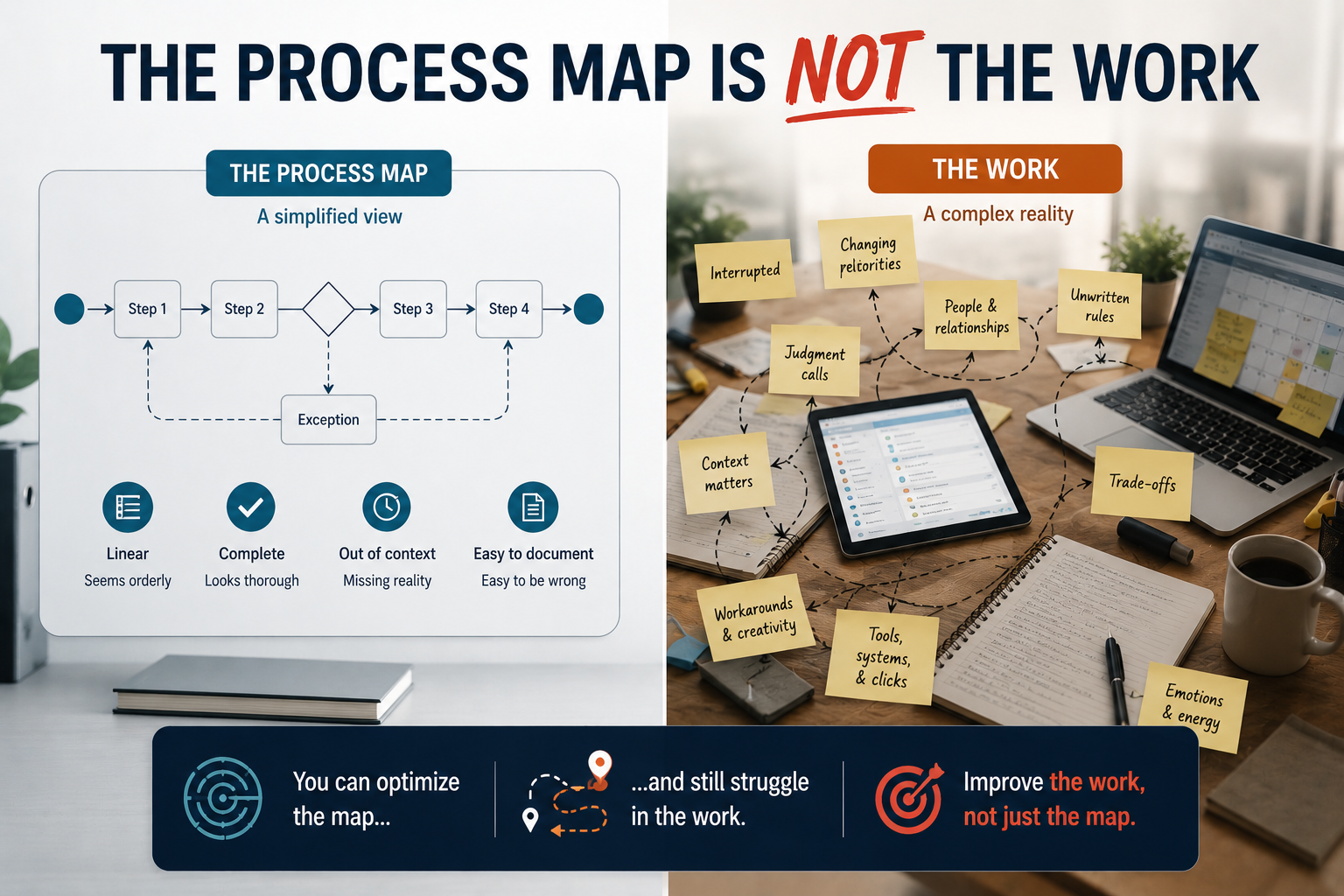

The reason is uncomfortable: much of what companies digitized was the formal process, not the real work.

That distinction now matters.

A process is what the organization says happens.

Work is what actually happens.

A process is visible in systems, policies, workflows, and dashboards.

Work includes exceptions, judgment, habits, trust, negotiation, shortcuts, local context, informal escalation, human interpretation, and the invisible coordination that keeps the enterprise functioning.

Digital transformation often captured the process.

AI transformation needs to understand the work.

That is the new fault line.

What is the difference between digital transformation and AI transformation?

Digital transformation focused on digitizing records, automating workflows, and improving efficiency. AI transformation goes further by helping organizations understand work, context, decisions, and human behavior. While digital transformation changed how work is performed, AI transformation changes what work becomes possible.

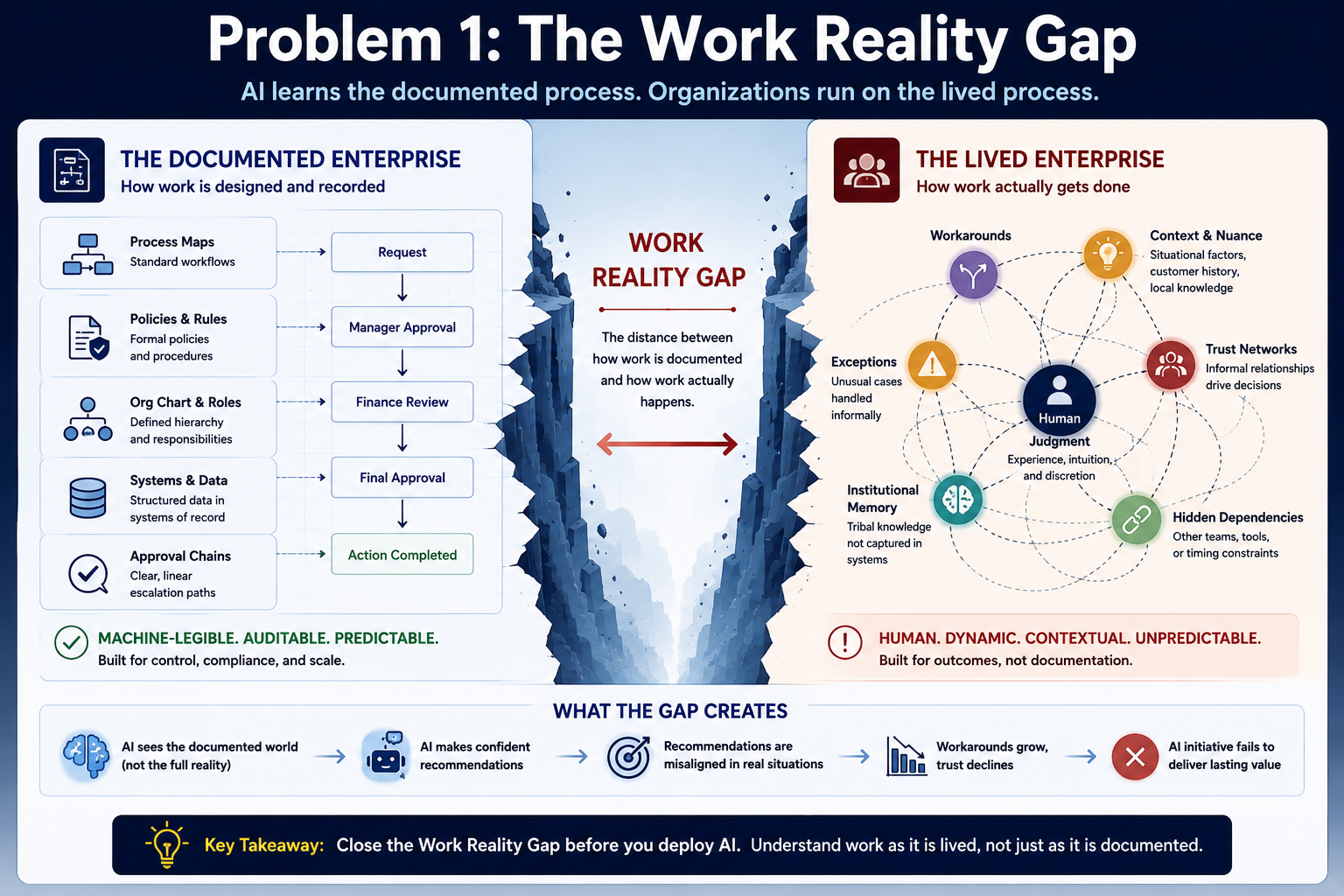

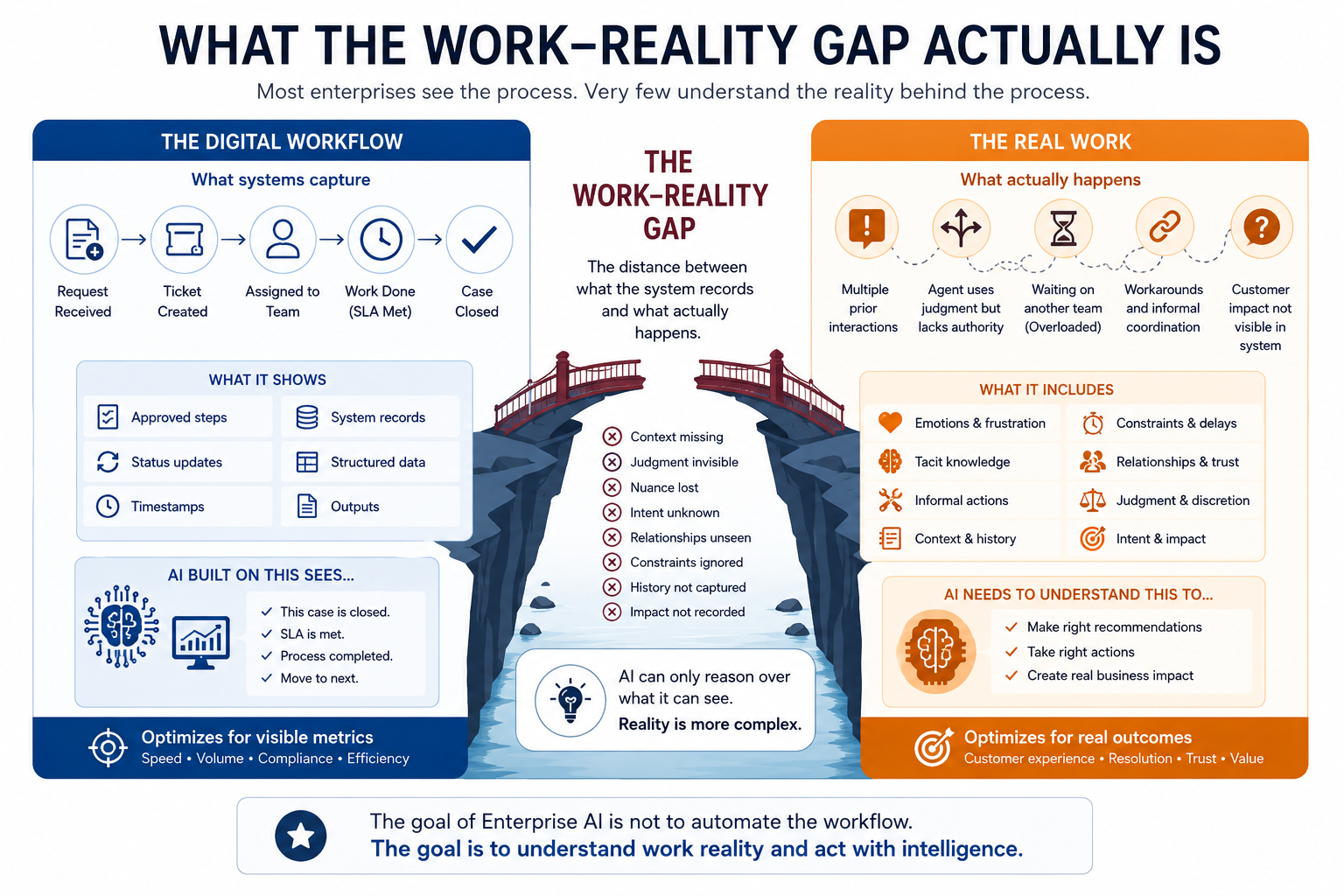

The process map is not the work

Every large organization has process maps.

A customer complaint is logged, categorized, assigned, resolved, and closed.

A purchase order is raised, approved, matched, paid, and reconciled.

A loan application is received, verified, scored, approved, rejected, or escalated.

A software defect is reported, triaged, assigned, fixed, tested, and released.

These maps are useful. They create order. They define accountability. They support automation. They help organizations scale.

But they are not the full reality.

In the real world, the customer complaint may require reading between the lines. The purchase order may be delayed because the supplier has a history of partial fulfillment. The loan application may need human judgment because the customer’s formal data does not reflect their actual earning capacity. The software defect may be technically minor but strategically urgent because it affects an important client.

The process map sees the official sequence.

The human worker sees the situation.

This is why AI transformation fails when companies assume that digitized process data is the same as operational understanding.

AI systems can read the process.

But can they understand the work?

That is the question.

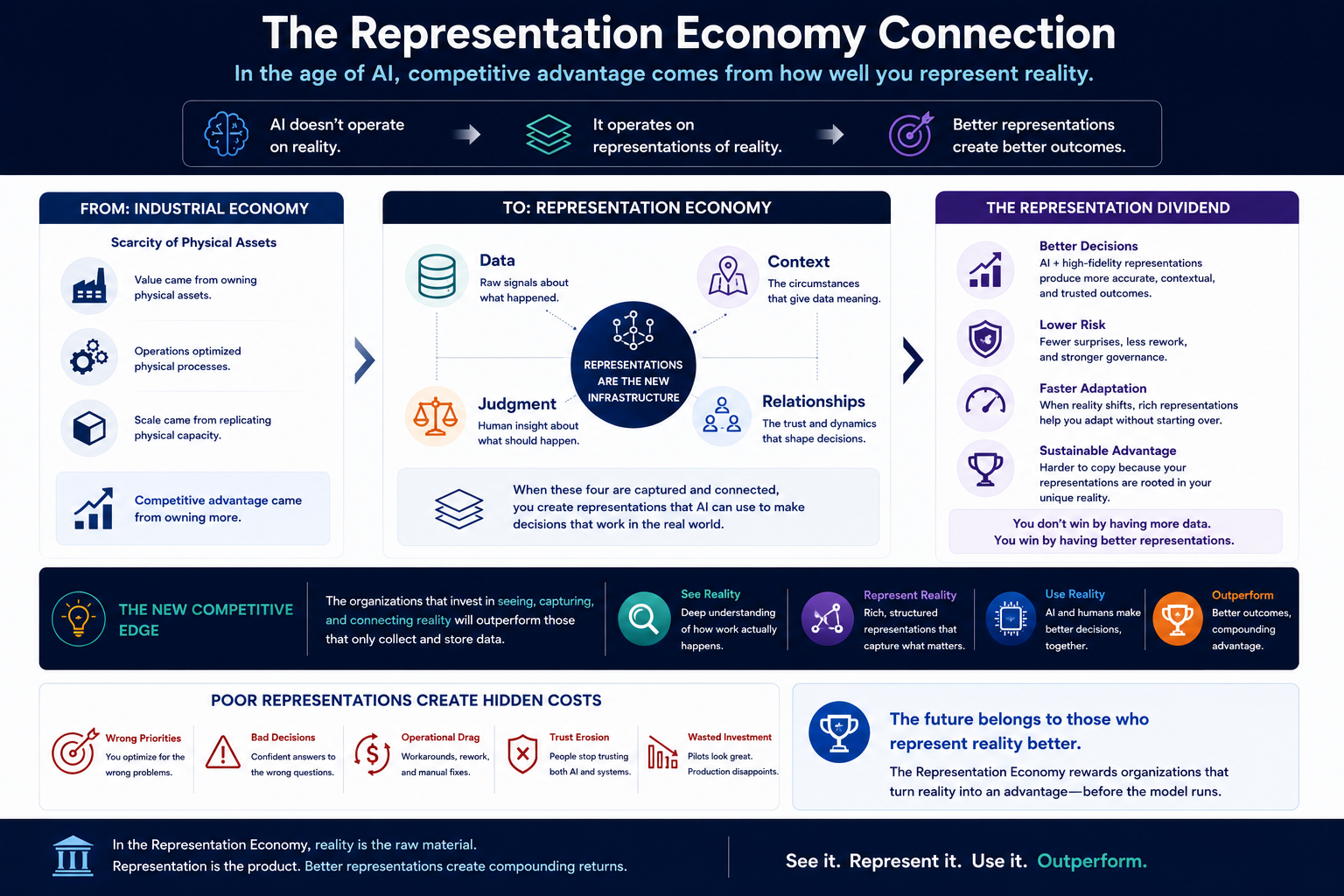

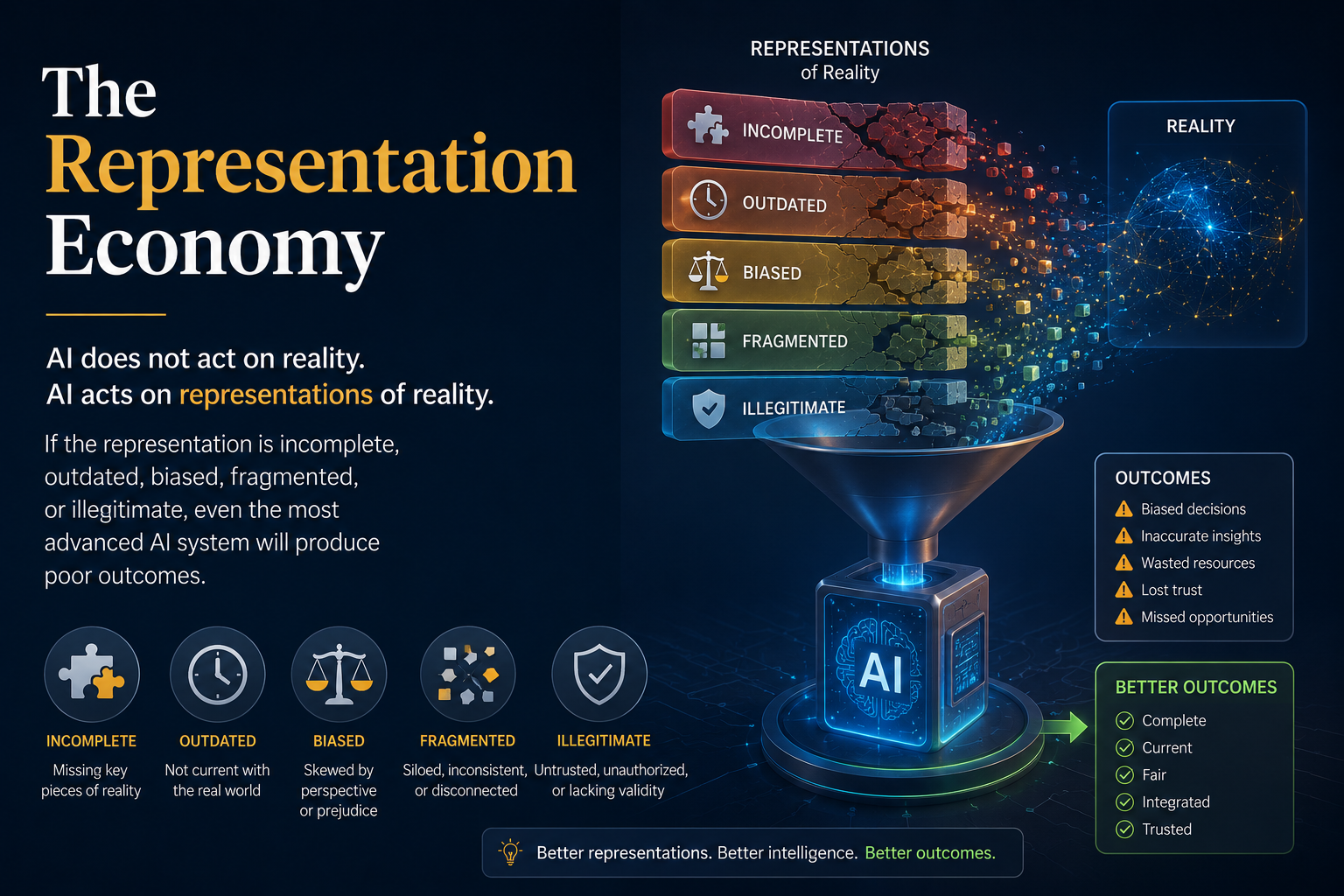

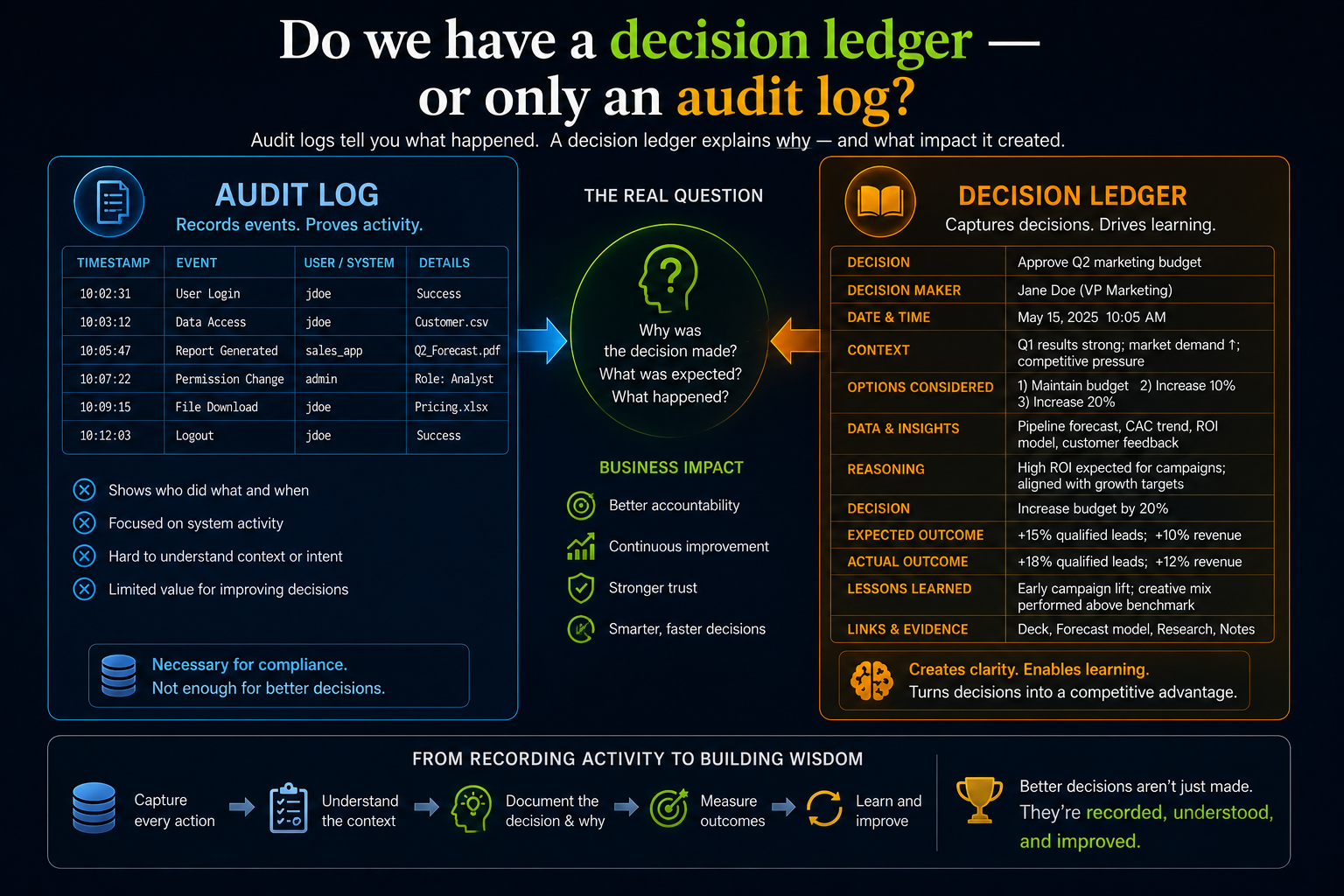

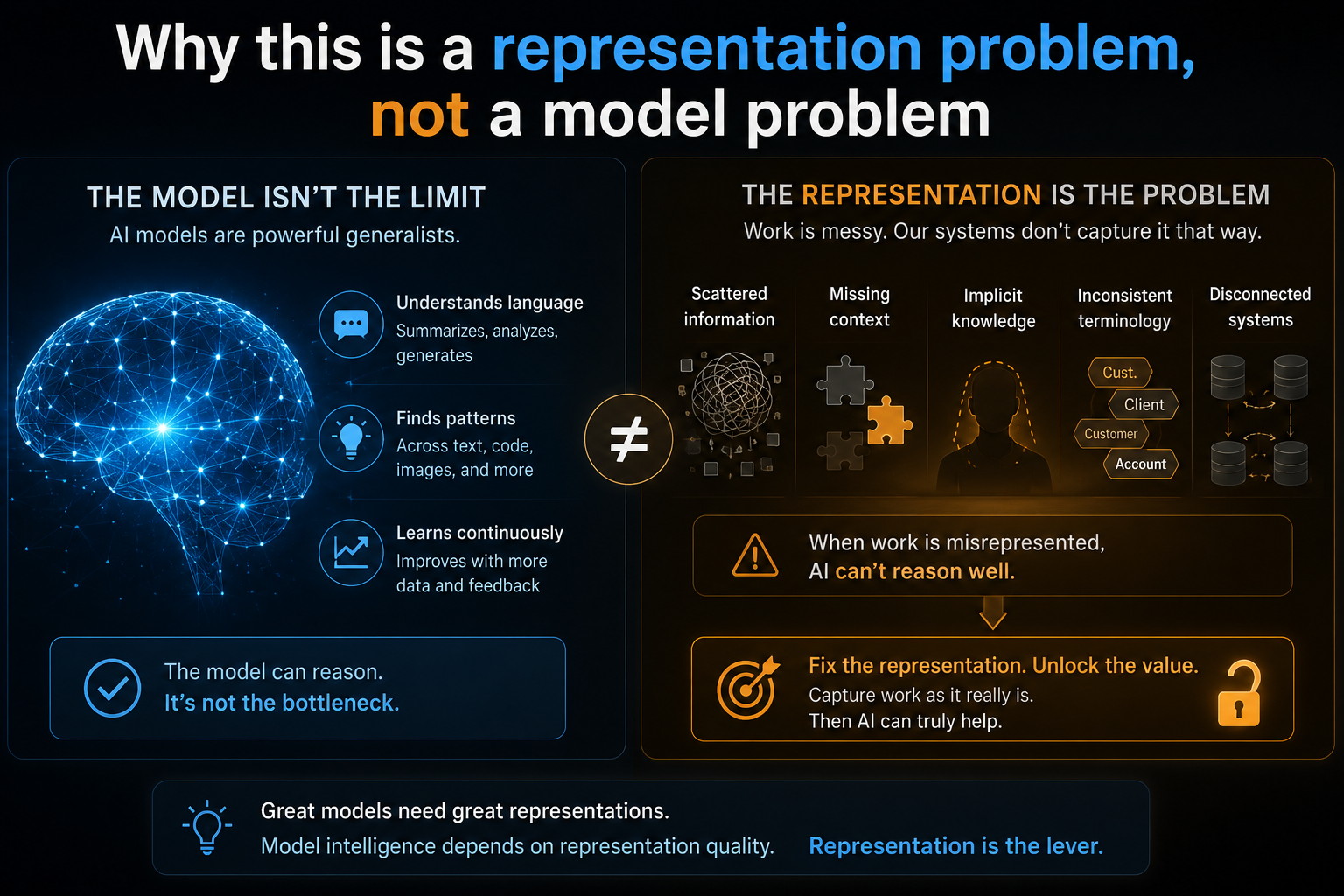

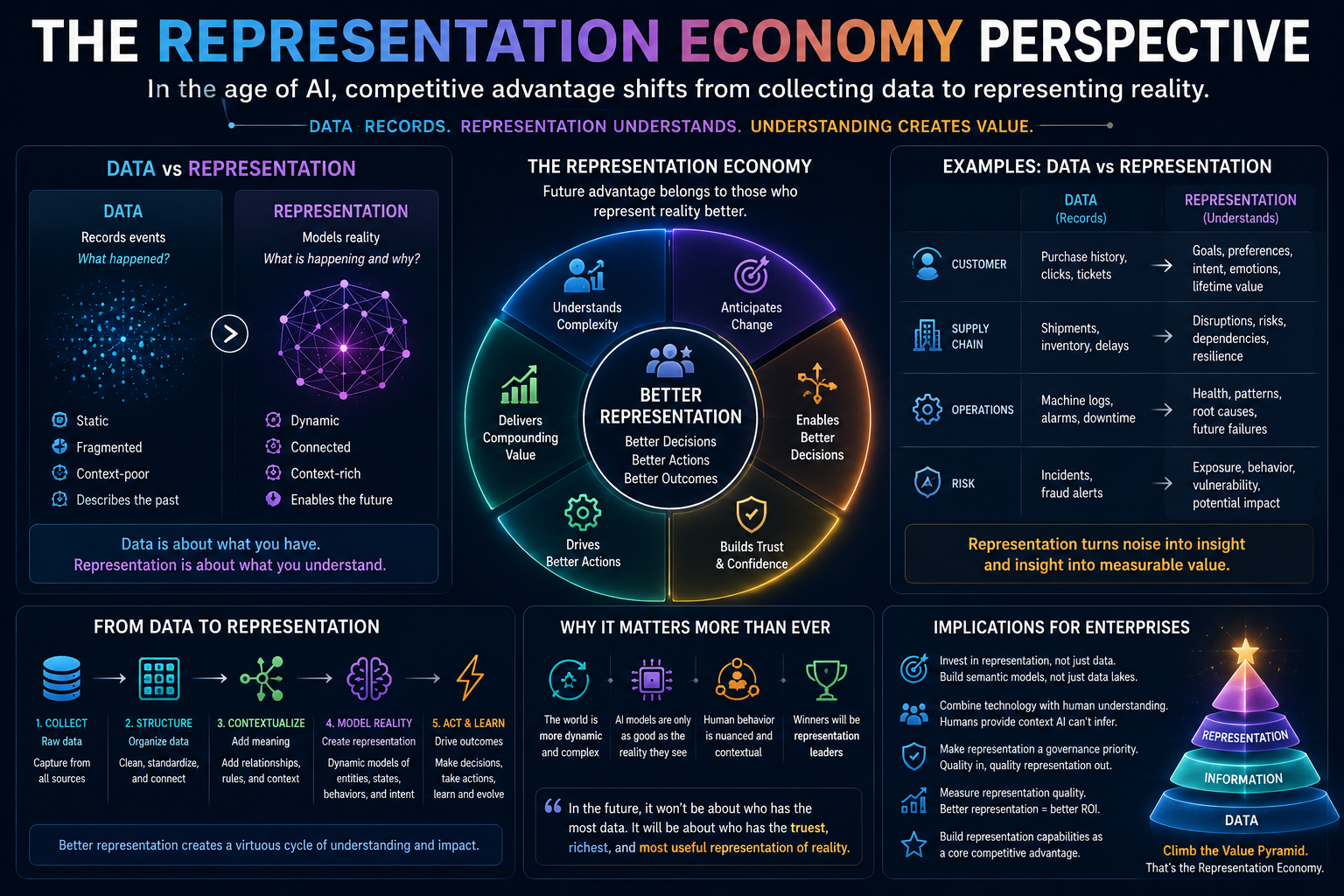

Digital transformation created records. AI transformation needs representation.

Digital transformation gave companies digital records.

AI transformation requires reliable representation.

A record says something happened.

A representation explains what that event means in context.

A CRM system may record that a customer called three times in one week. That is a record.

But what does it represent?

It may represent dissatisfaction. It may represent confusion. It may represent an urgent unresolved issue. It may represent a high-value customer losing trust. It may represent poor product design. It may represent a gap between sales promises and service delivery.

The same record can mean different things depending on context.

AI systems need that context.

Without it, they may optimize the wrong thing.

They may reduce call-handling time while increasing customer frustration.

They may automate approvals while increasing downstream risk.

They may summarize documents while missing which document is authoritative.

They may recommend next actions without understanding political, operational, or compliance consequences.

That is why AI transformation cannot be built only on digital records.

It must be built on high-quality representation.
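The gap between a record and a representation can be made concrete with a small sketch. It is illustrative only: the class names, context fields, and thresholds below are assumptions invented for this example, not part of any CRM product or of the framework itself. It shows the same record (three calls in one week) being read differently once context is attached, and being flagged as ambiguous when context is missing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CallRecord:
    """A bare record: it says only that something happened."""
    customer_id: str
    calls_this_week: int


@dataclass(frozen=True)
class CallContext:
    """Hypothetical context fields; a real enterprise would choose its own."""
    open_issue_days: int = 0          # age of the oldest unresolved issue
    high_value_customer: bool = False
    recent_purchase: bool = False     # e.g. still onboarding a new product


def interpret(record: CallRecord, context: Optional[CallContext]) -> str:
    """Turn a record into a representation: what the event likely means."""
    if record.calls_this_week < 3:
        return "routine contact"
    if context is None:
        # Without context the record is ambiguous; say so rather than guess.
        return "repeat contact, meaning unknown"
    if context.open_issue_days > 7 and context.high_value_customer:
        return "high-value customer losing trust"
    if context.open_issue_days > 7:
        return "urgent unresolved issue"
    if context.recent_purchase:
        return "confusion during onboarding"
    return "repeat contact, needs human review"


record = CallRecord(customer_id="c-1", calls_this_week=3)
print(interpret(record, None))
print(interpret(record, CallContext(open_issue_days=10, high_value_customer=True)))
```

The design choice worth noting is the explicit "meaning unknown" branch: a system that admits it lacks context is easier to govern than one that silently optimizes on the bare record.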

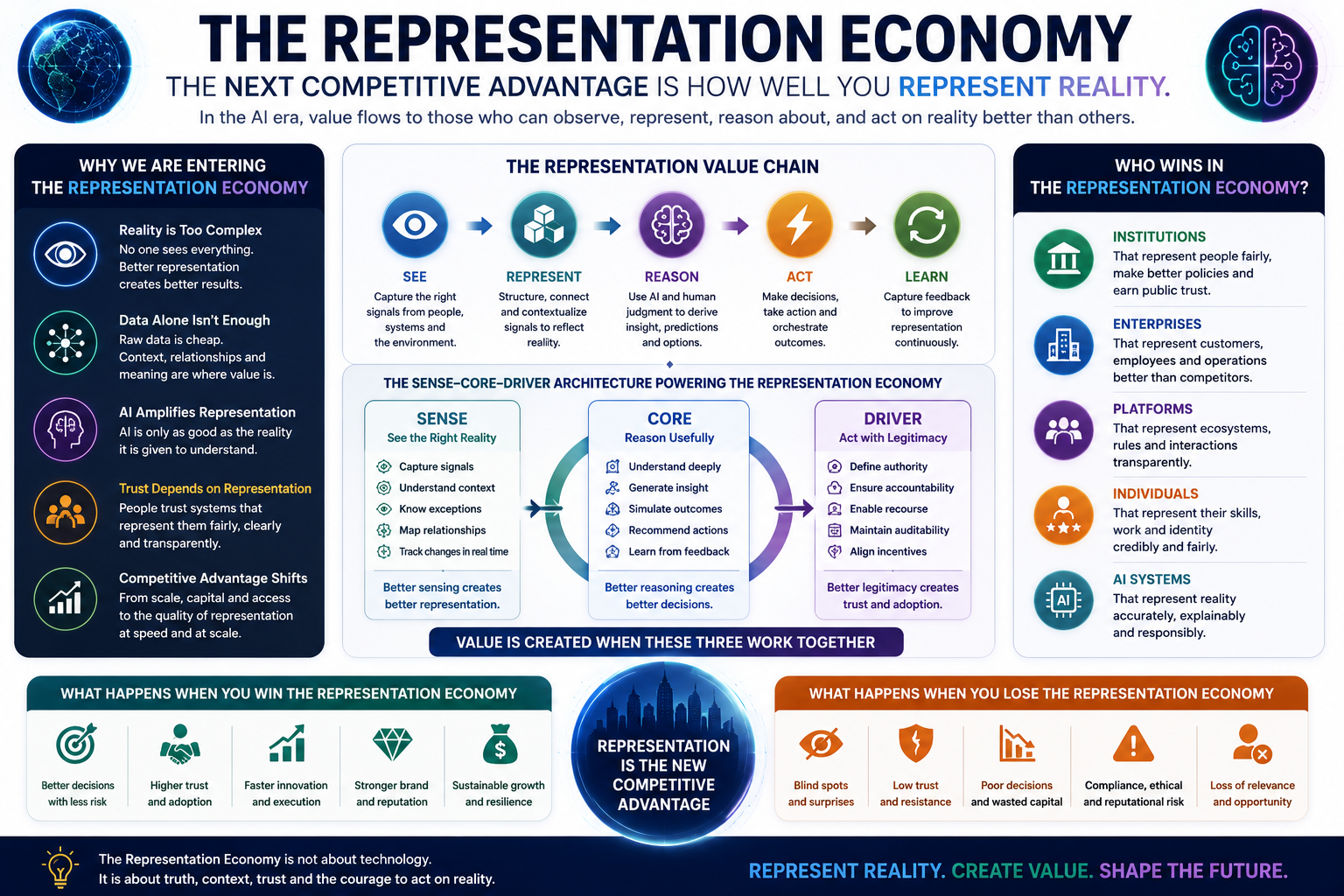

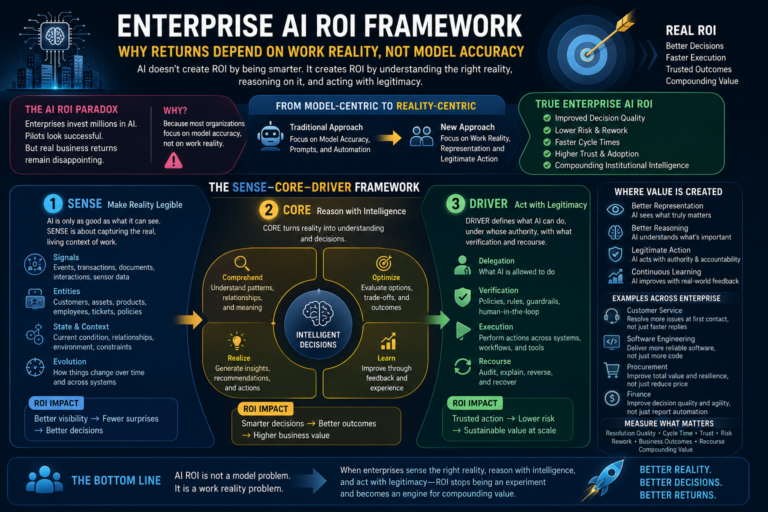

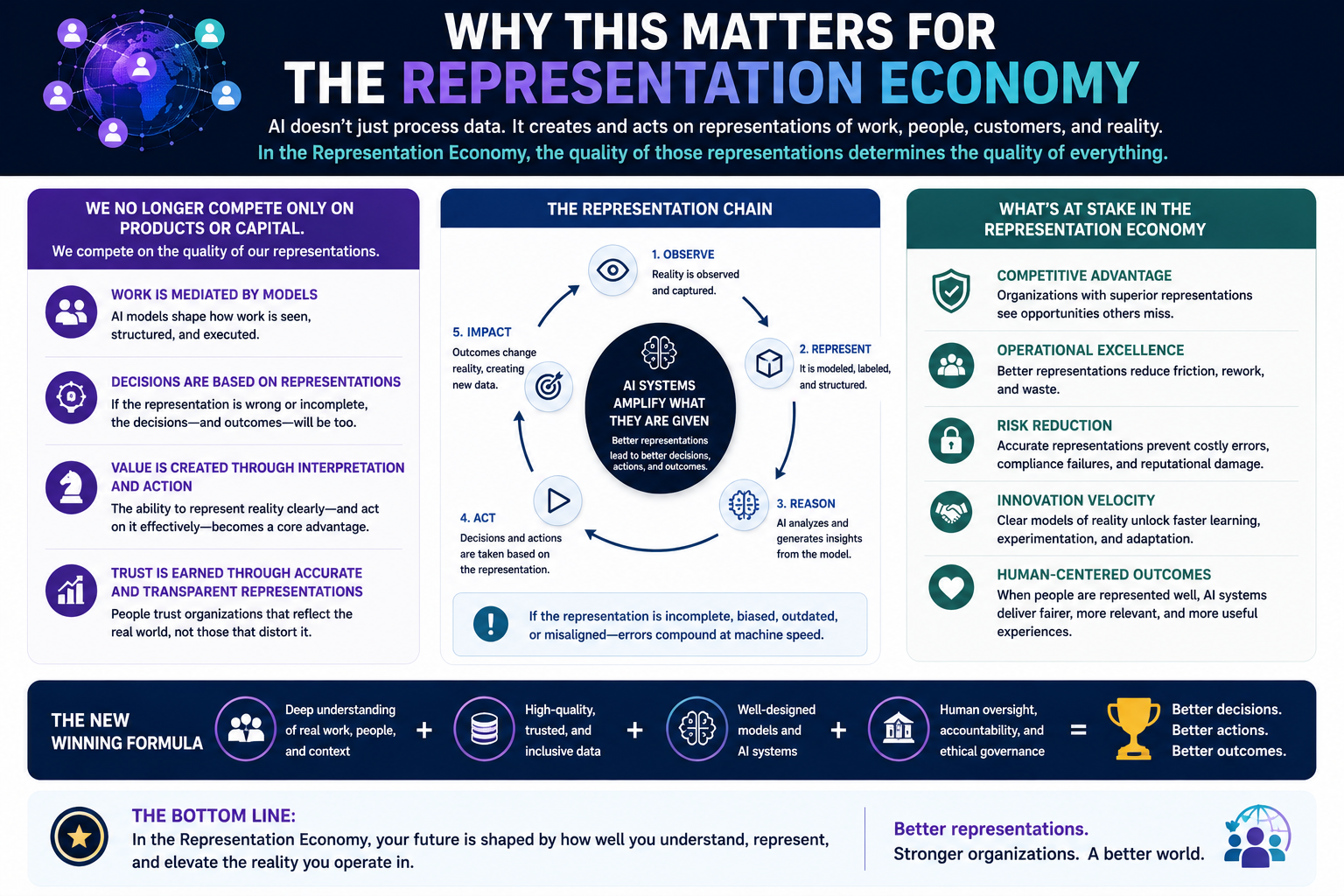

This is the core idea of the Representation Economy: value in the AI era depends on how well institutions represent reality in ways that are machine-readable, trustworthy, contextual, governable, and actionable.

Digital records are not enough.

Machine-readable reality is the real foundation.

Why companies misunderstand work

Companies misunderstand work for a simple reason: much of work is invisible to systems.

The enterprise system captures the ticket.

It does not capture the hesitation before escalation.

The workflow captures the approval.

It does not capture the informal phone call that made the approval possible.

The dashboard captures the delay.

It does not capture the conflict between two teams.

The CRM captures the customer status.

It does not capture the relationship history known only to the account manager.

The HR system captures role and reporting line.

It does not capture who people actually go to when they need help.

This invisible layer is where work often happens.

Experienced employees know which rule can be applied strictly and which one needs interpretation. They know when a customer is angry but still recoverable. They know when a supplier delay is normal and when it signals a deeper risk. They know which project status is green only because people are working nights to hide the real problem.

AI systems rarely know this unless the enterprise deliberately represents it.

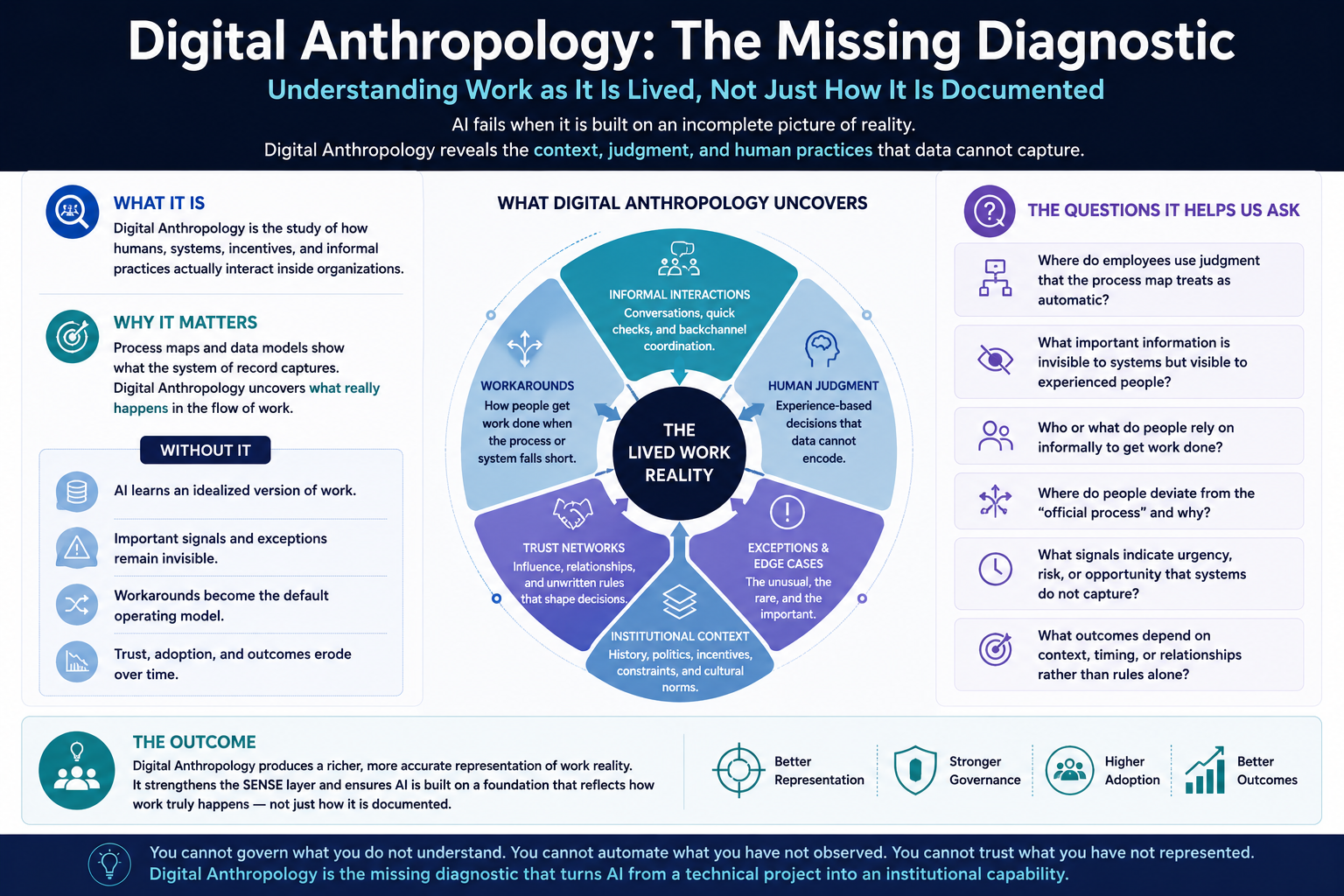

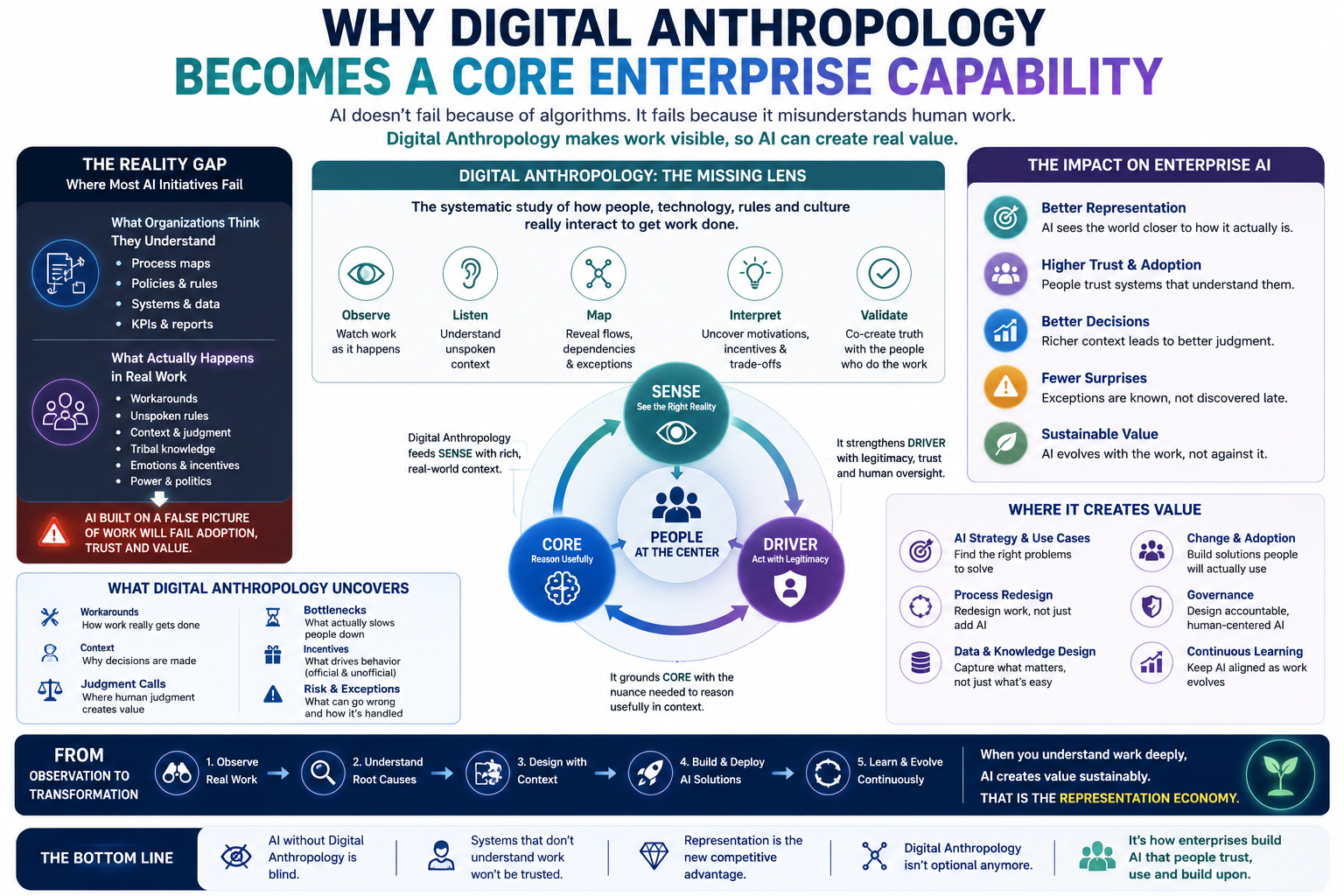

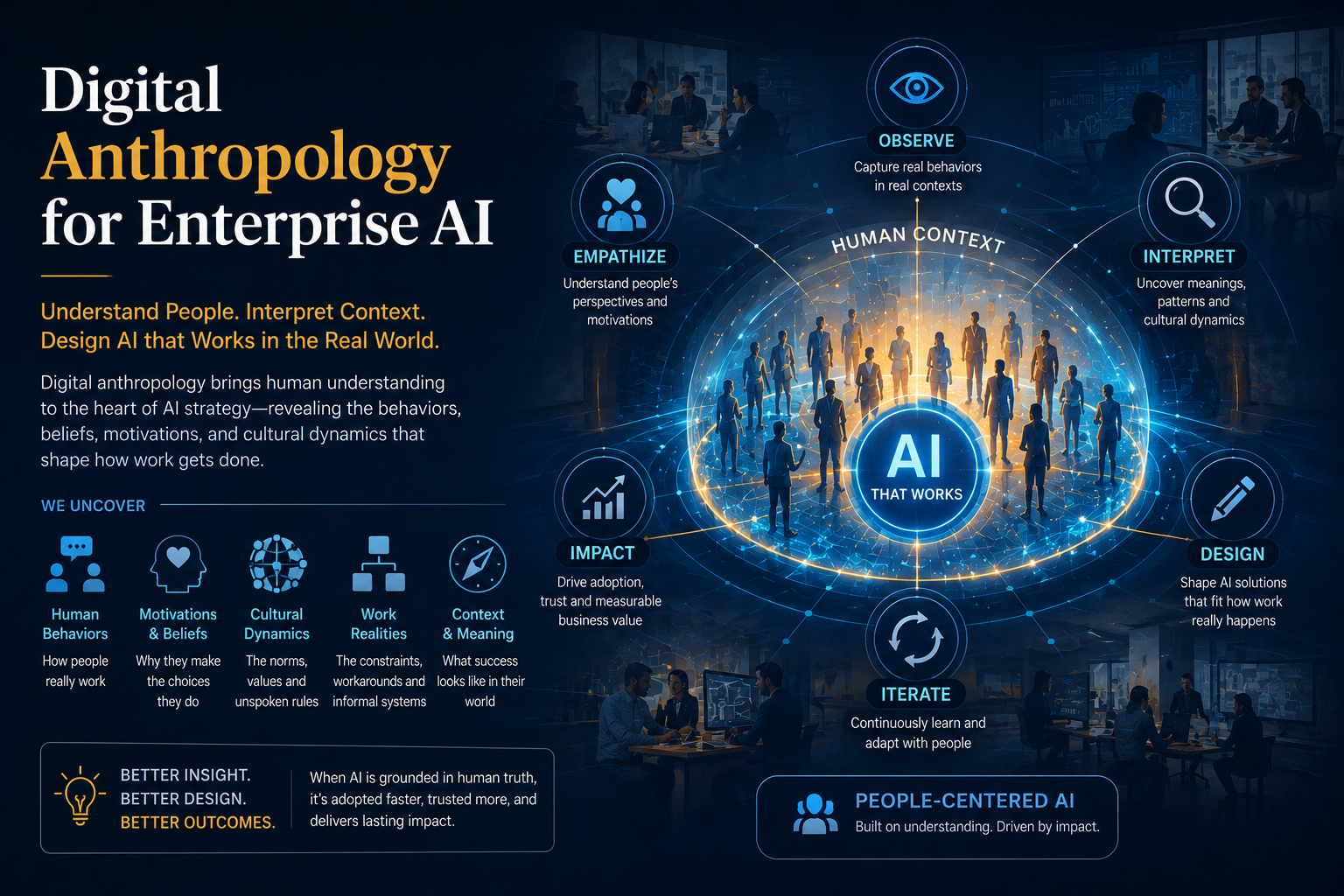

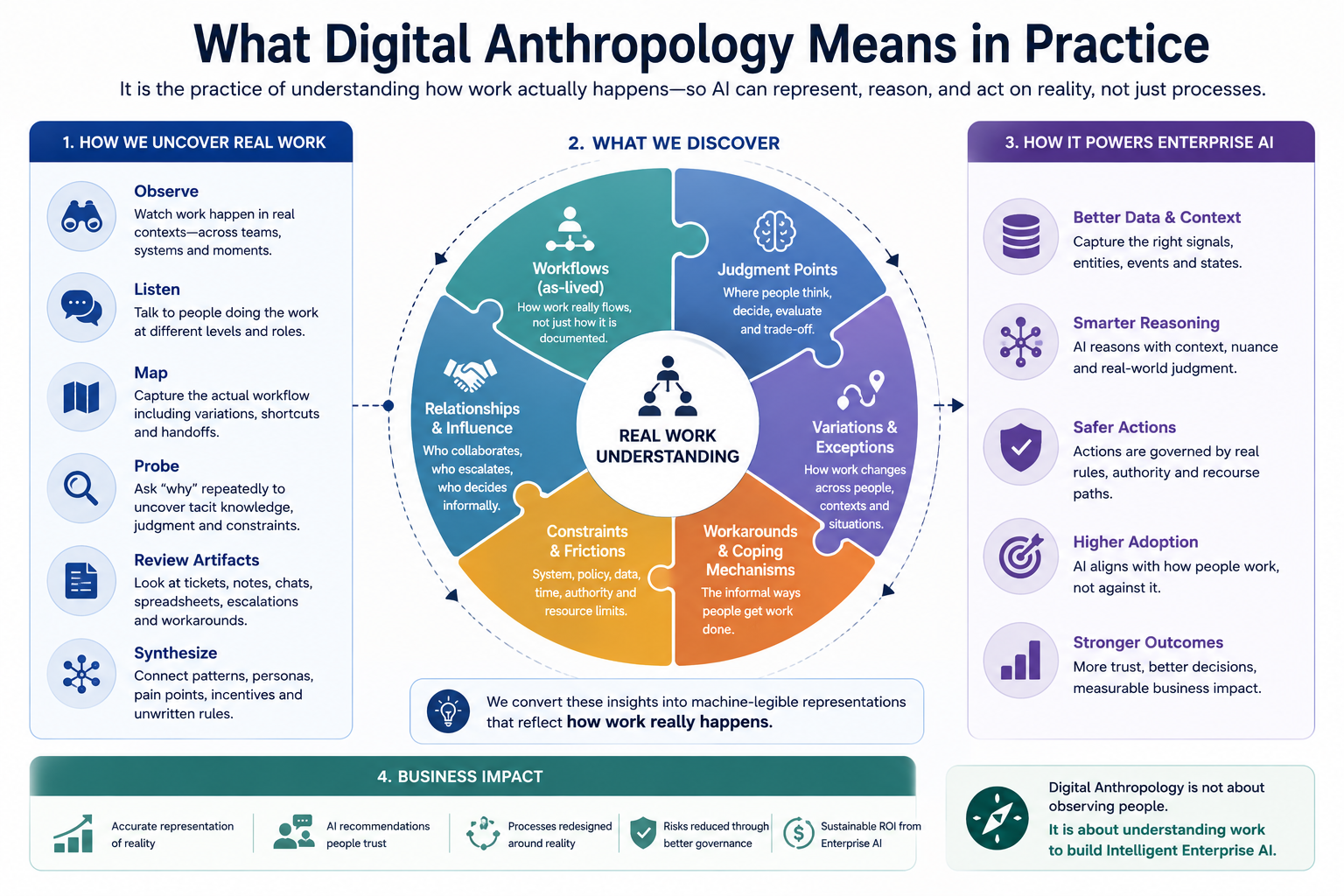

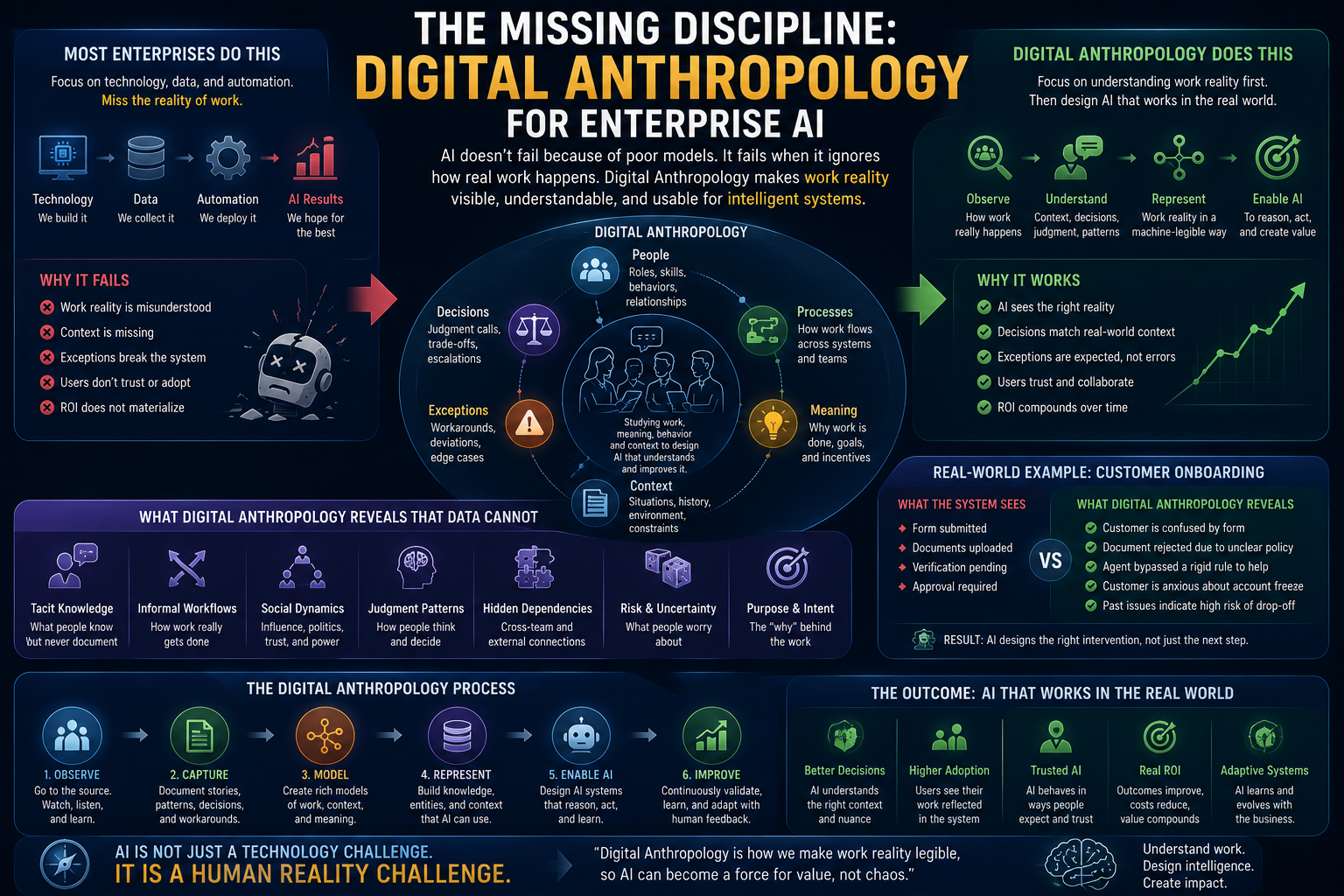

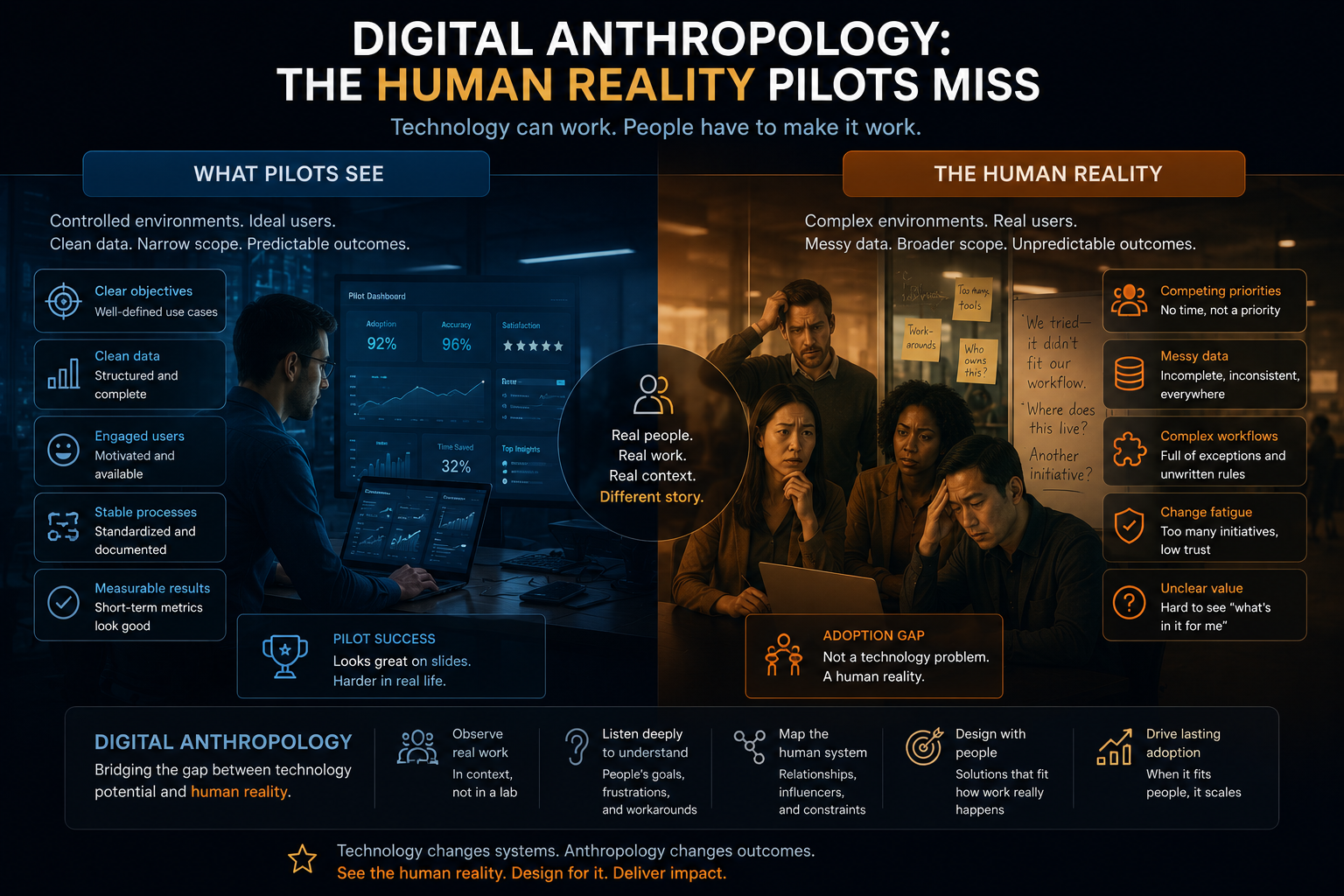

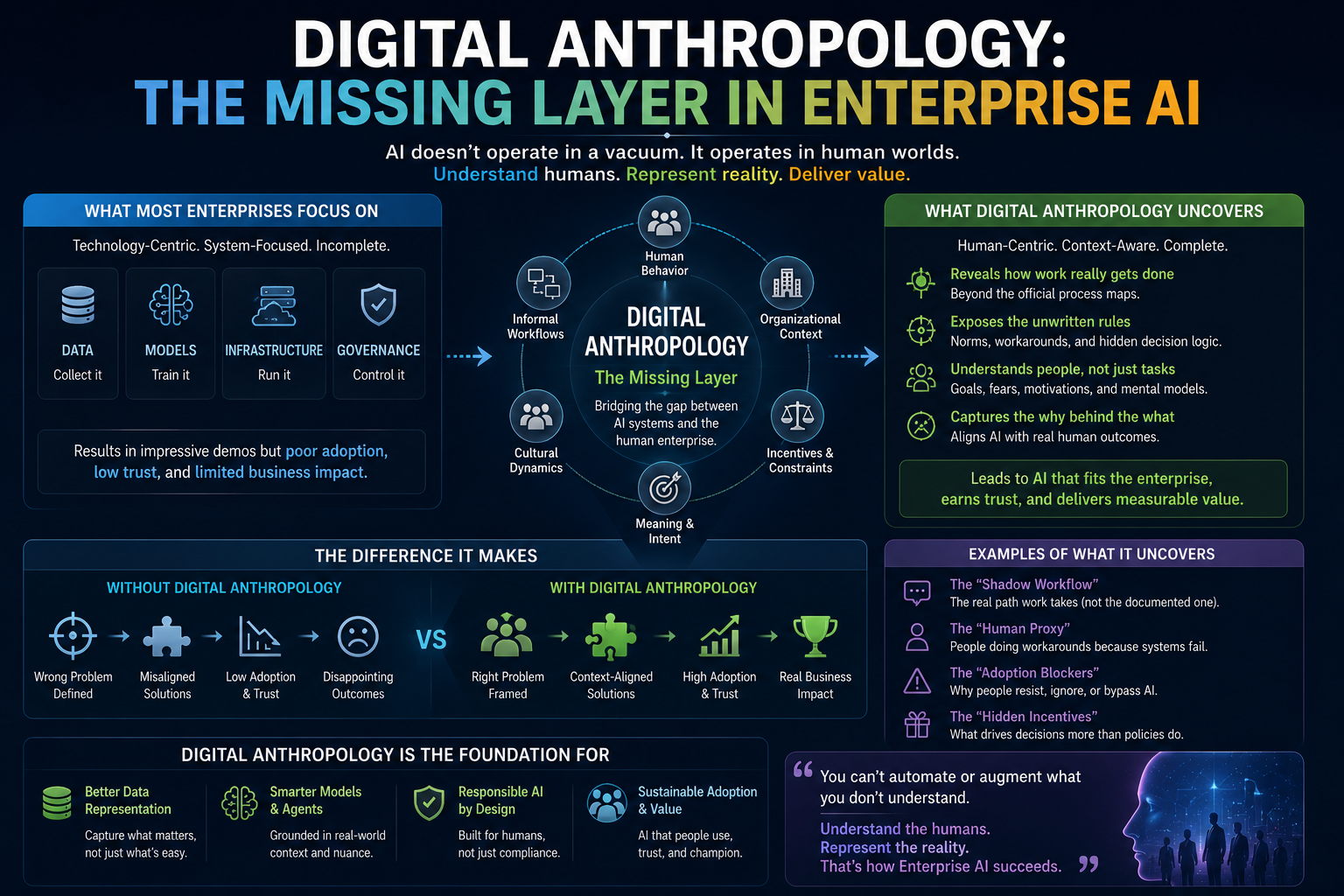

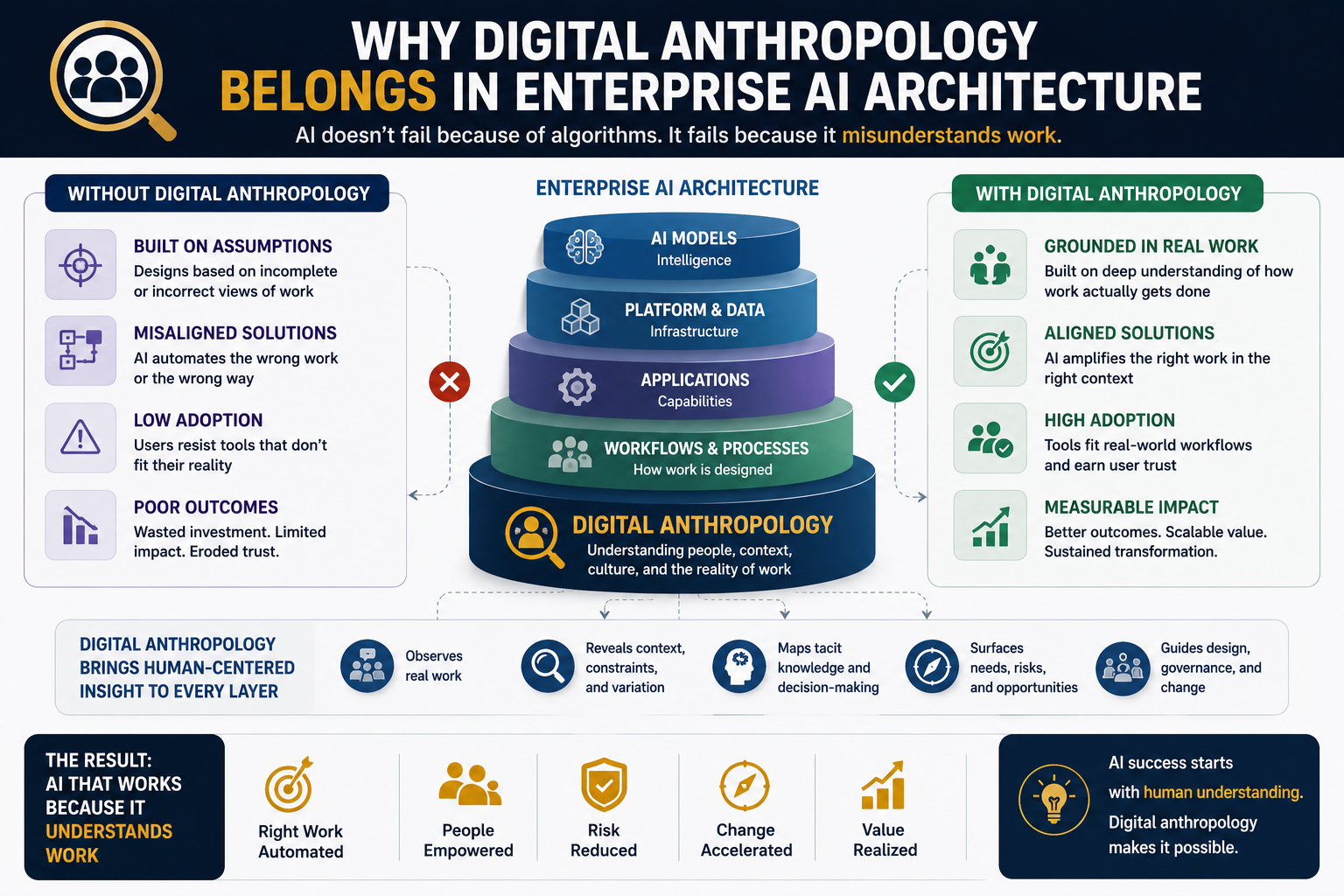

That is why digital anthropology matters.

Digital anthropology helps organizations study real human, social, and institutional behavior inside digital environments. It asks how people actually use systems, where they trust them, where they bypass them, where they add judgment, and where the official workflow hides real work.

For enterprise AI, this is not academic decoration.

It is operational intelligence.

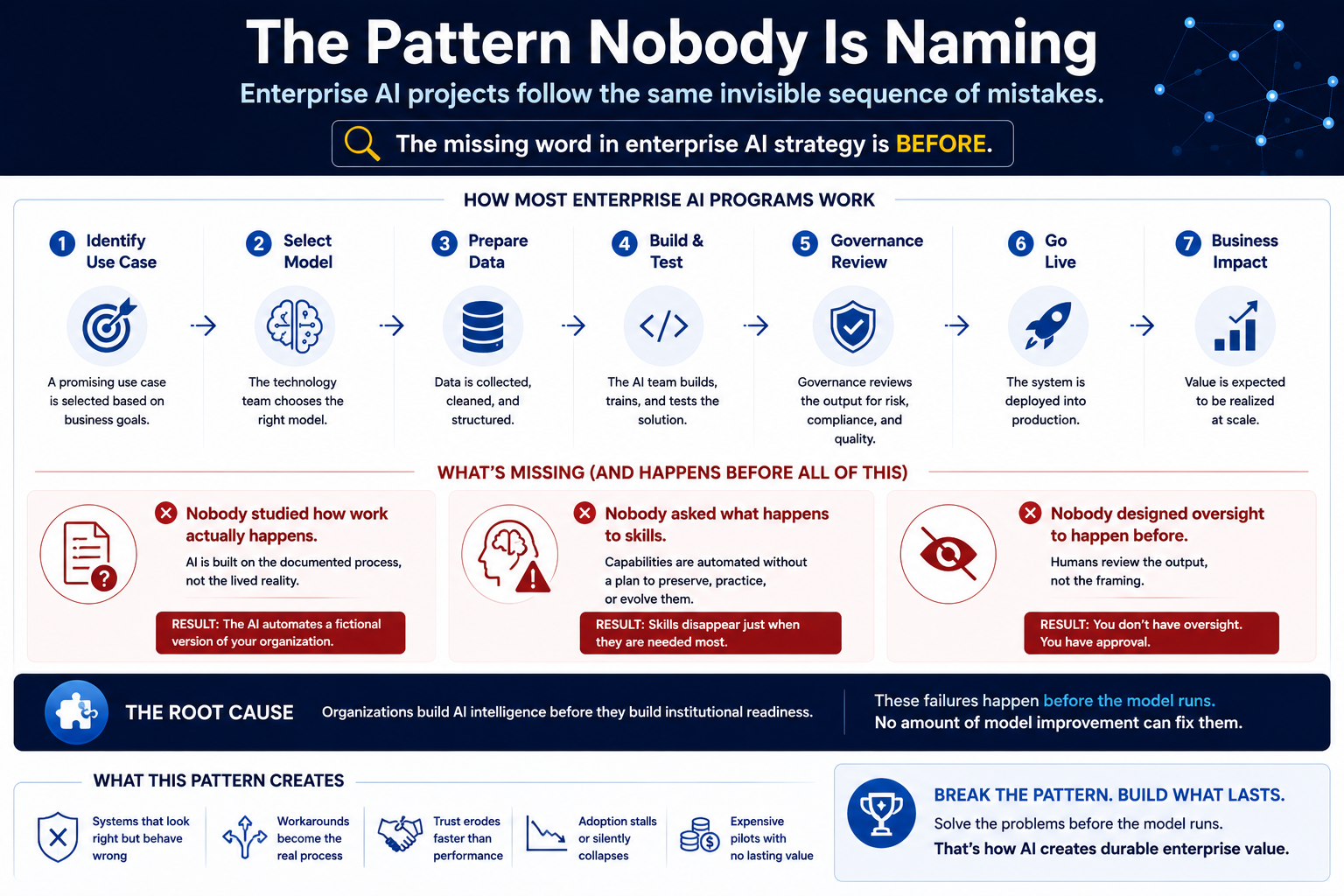

The old digital transformation mistake

Many digital transformation programs made a dangerous assumption.

They assumed that if a process was digitized, the organization understood it.

That was not always true.

Digitization often made the visible parts of work faster. It did not always make the hidden parts clearer.

A company could digitize procurement and still not understand why procurement delays happen.

A bank could digitize loan origination and still not understand how relationship managers interpret risk.

A hospital could digitize patient records and still not understand the informal coordination between nurses, doctors, administrators, and families.

A software company could digitize agile boards and still not understand why delivery teams miss commitments.

This was manageable when systems were mostly passive.

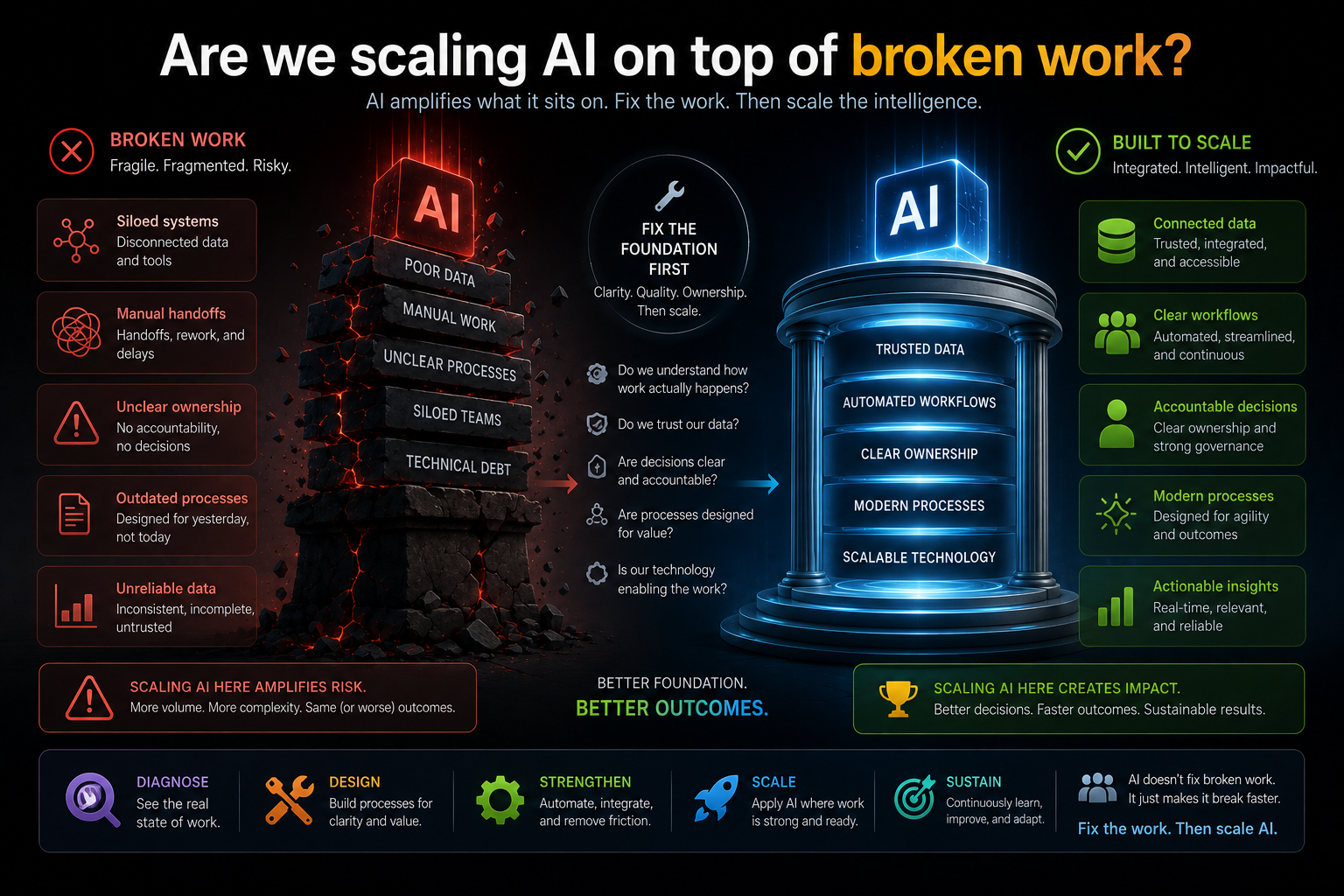

But AI changes the stakes.

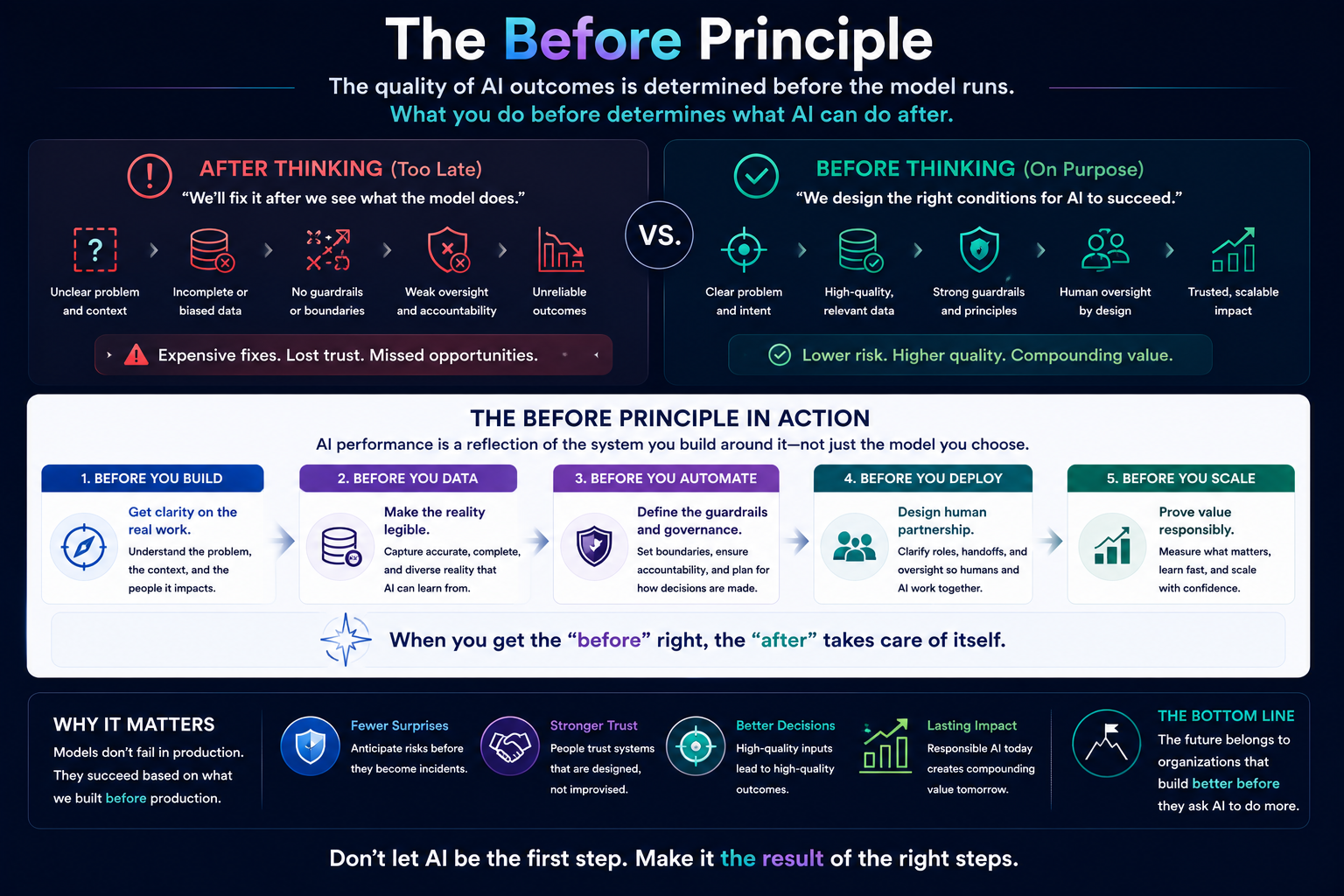

When software only stored information, weak representation created inefficiency.

When AI starts recommending, deciding, prioritizing, escalating, or acting, weak representation creates risk.

The old digital transformation problem becomes the new AI transformation failure.

AI does not fix broken understanding. It scales it.

There is a common belief that AI will help organizations overcome messy processes.

Sometimes it will.

But if the underlying representation of work is poor, AI may not fix the problem. It may amplify it.

If a company misunderstands its customer journey, AI may personalize the wrong experience.

If a company misunderstands employee workflows, AI may automate the wrong steps.

If a company misunderstands operational risk, AI may accelerate unsafe decisions.

If a company misunderstands accountability, AI may make it harder to know who is responsible.

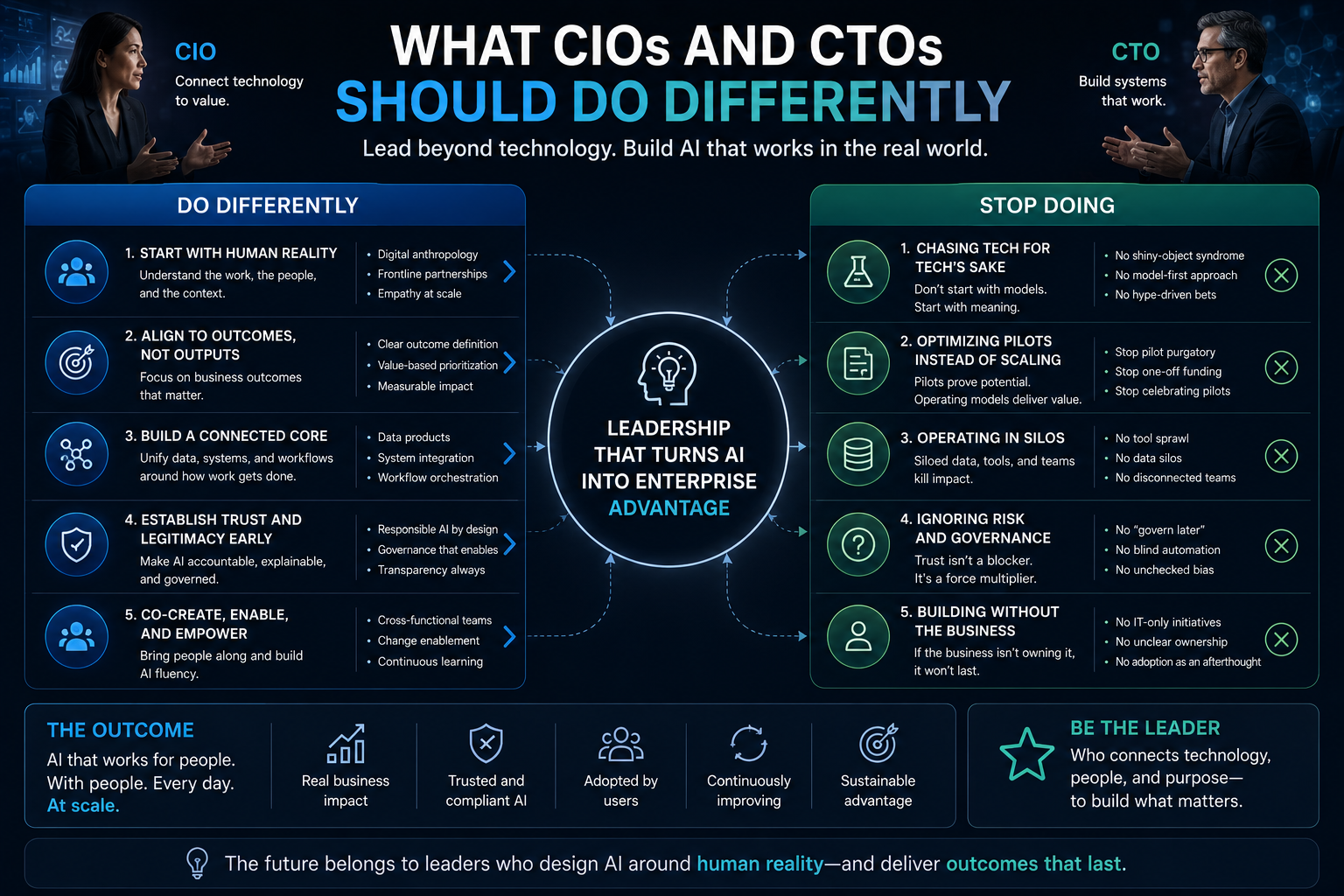

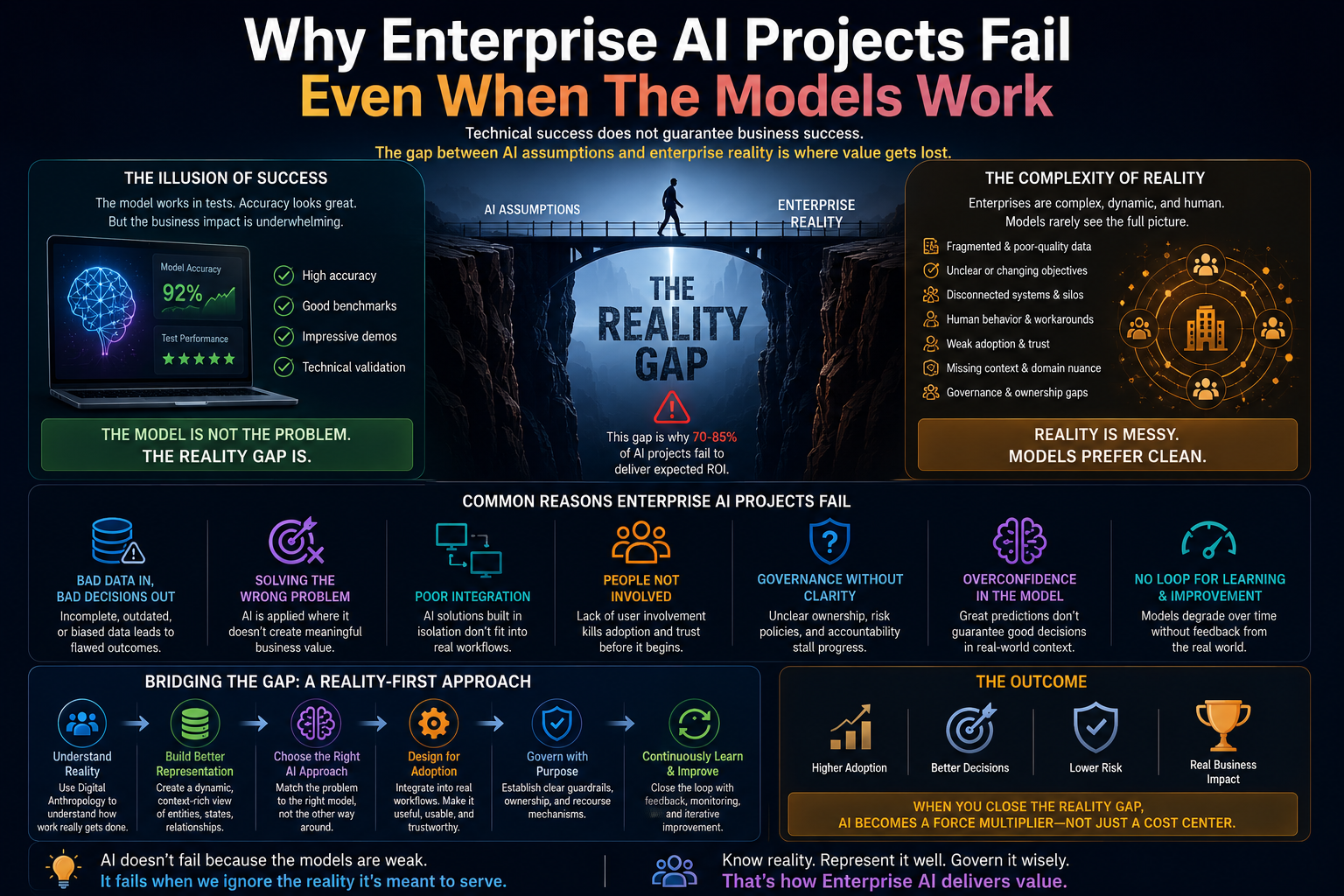

This is one of the most important lessons for CIOs and CTOs:

AI does not automatically create understanding.

AI operates on the reality the organization gives it.

If that reality is incomplete, fragmented, outdated, or politically filtered, AI will reason over a distorted world.

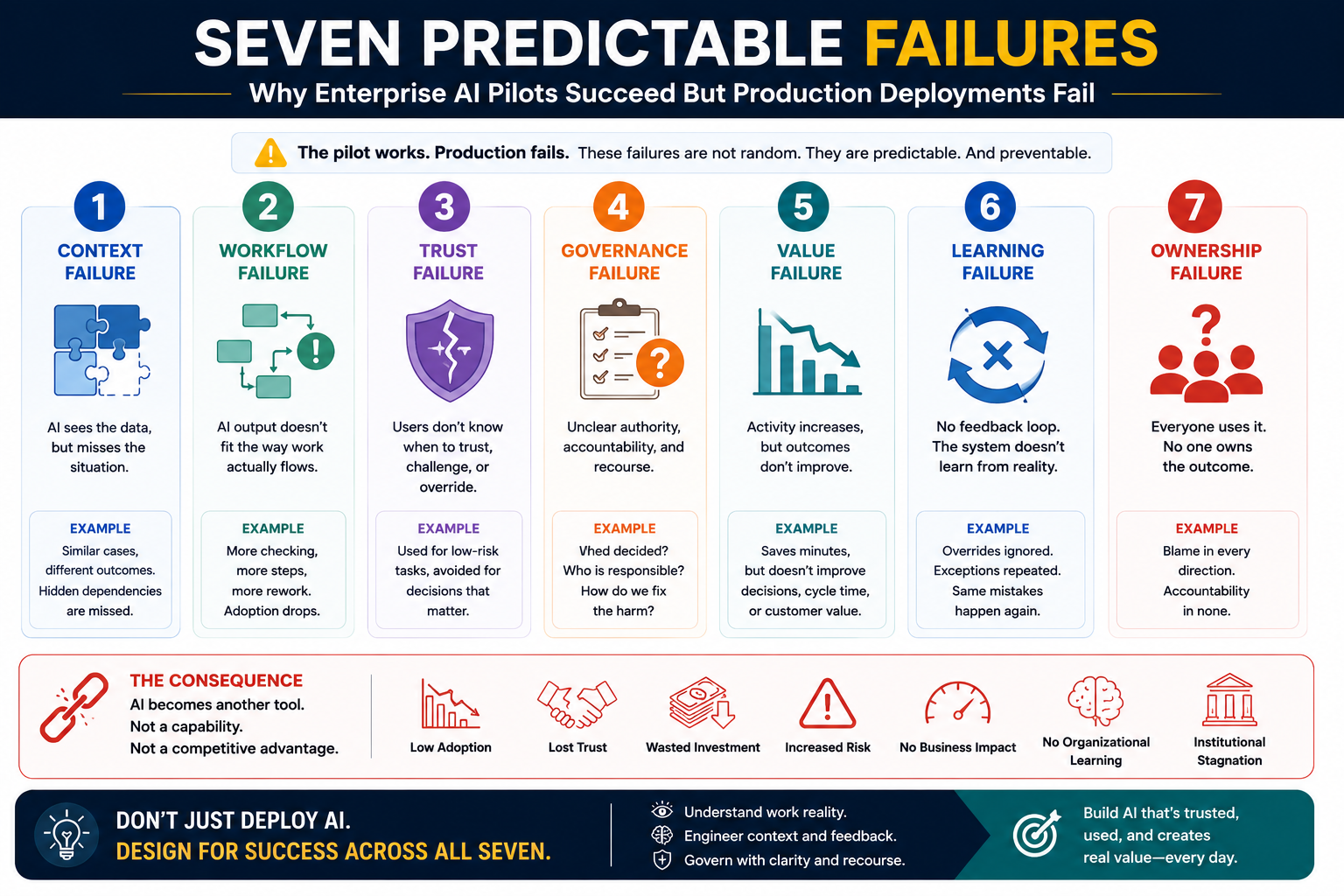

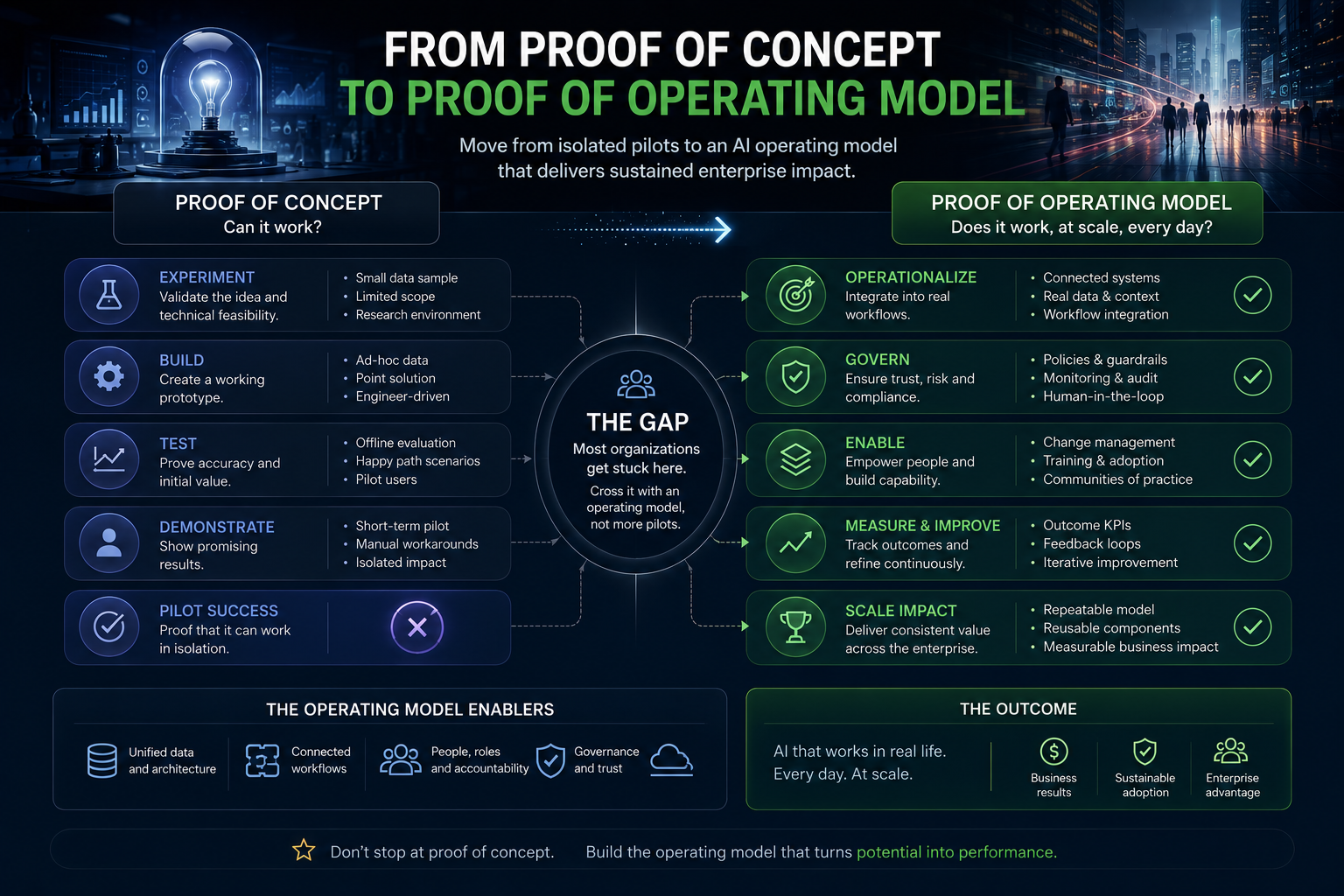

This is why some AI systems look impressive in pilots but disappoint in production.

The pilot uses simplified reality.

The enterprise contains contested reality.

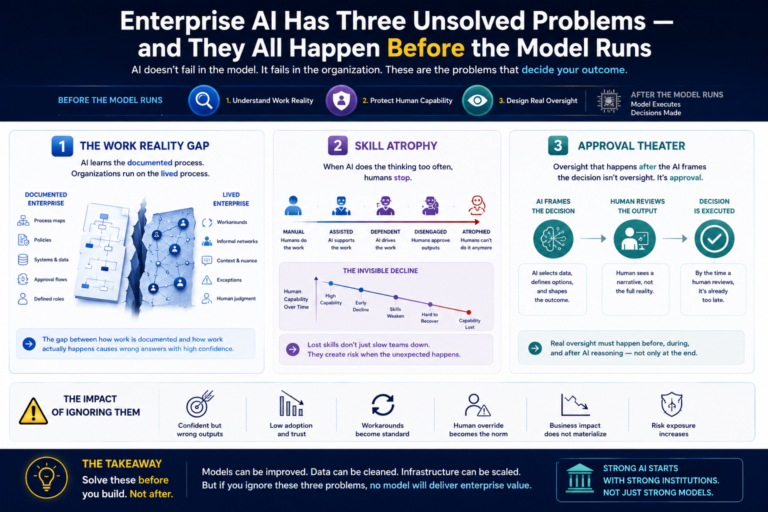

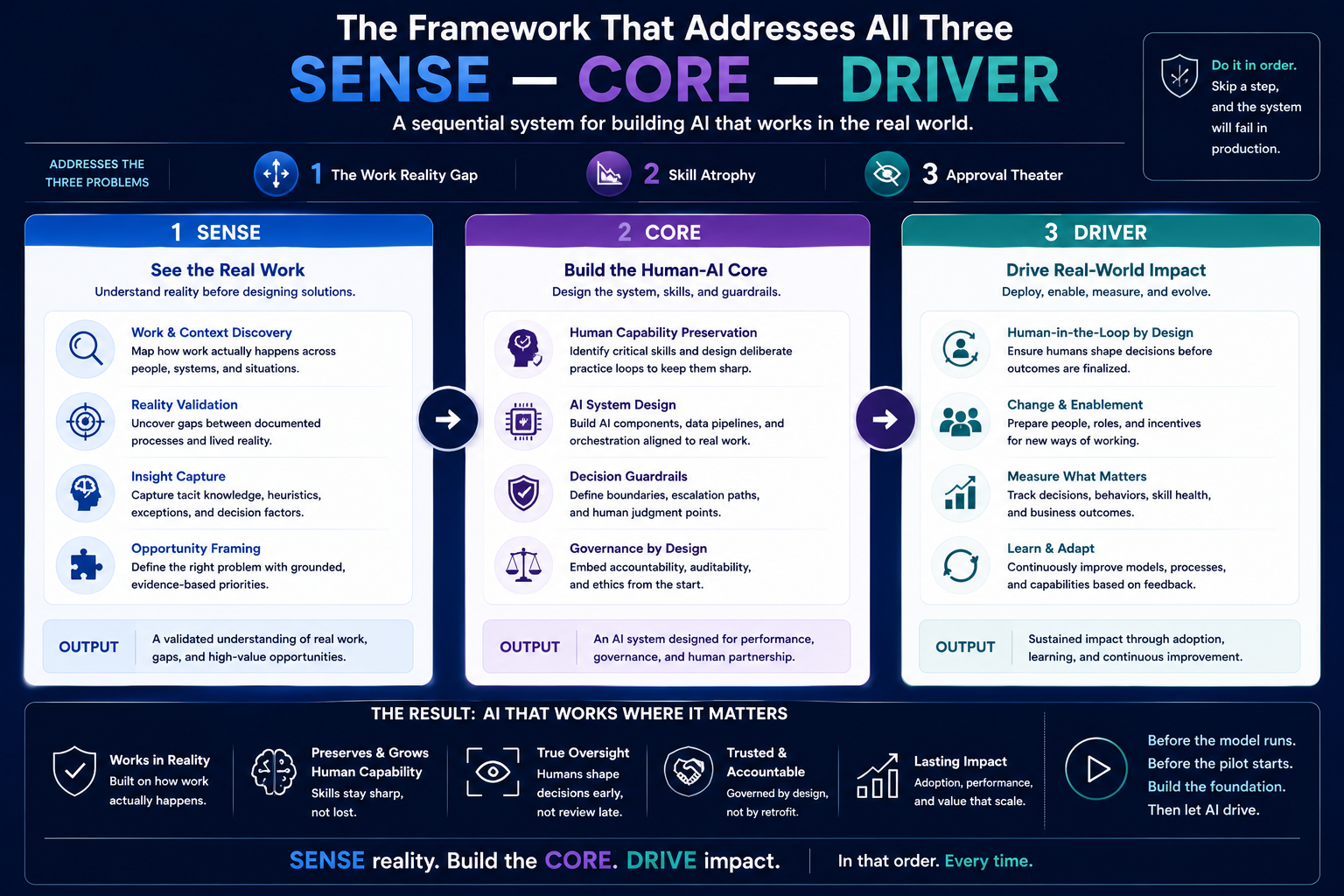

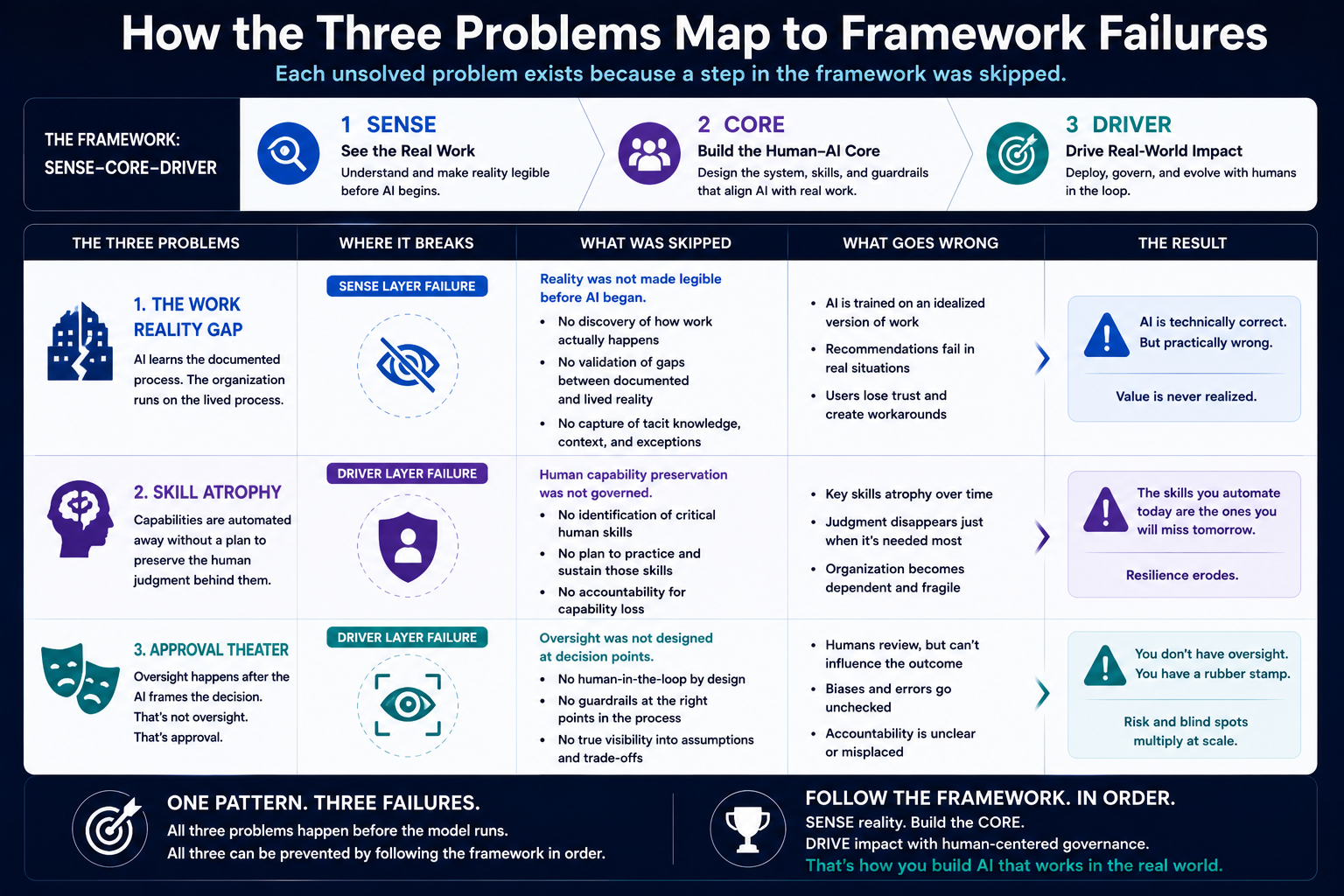

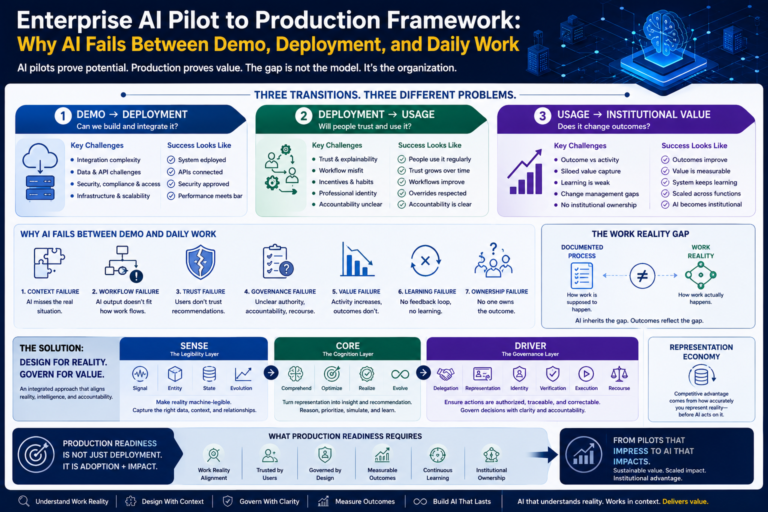

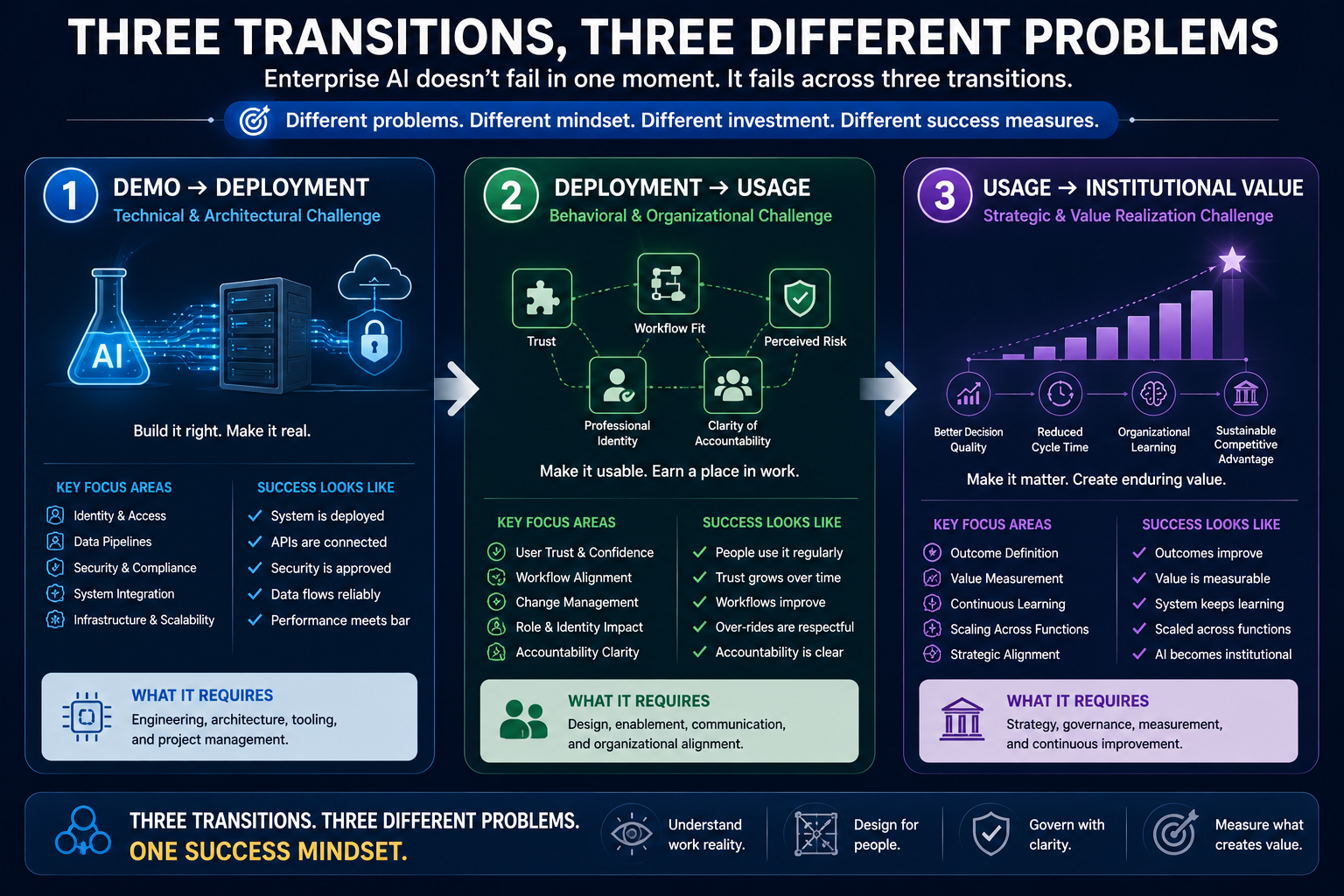

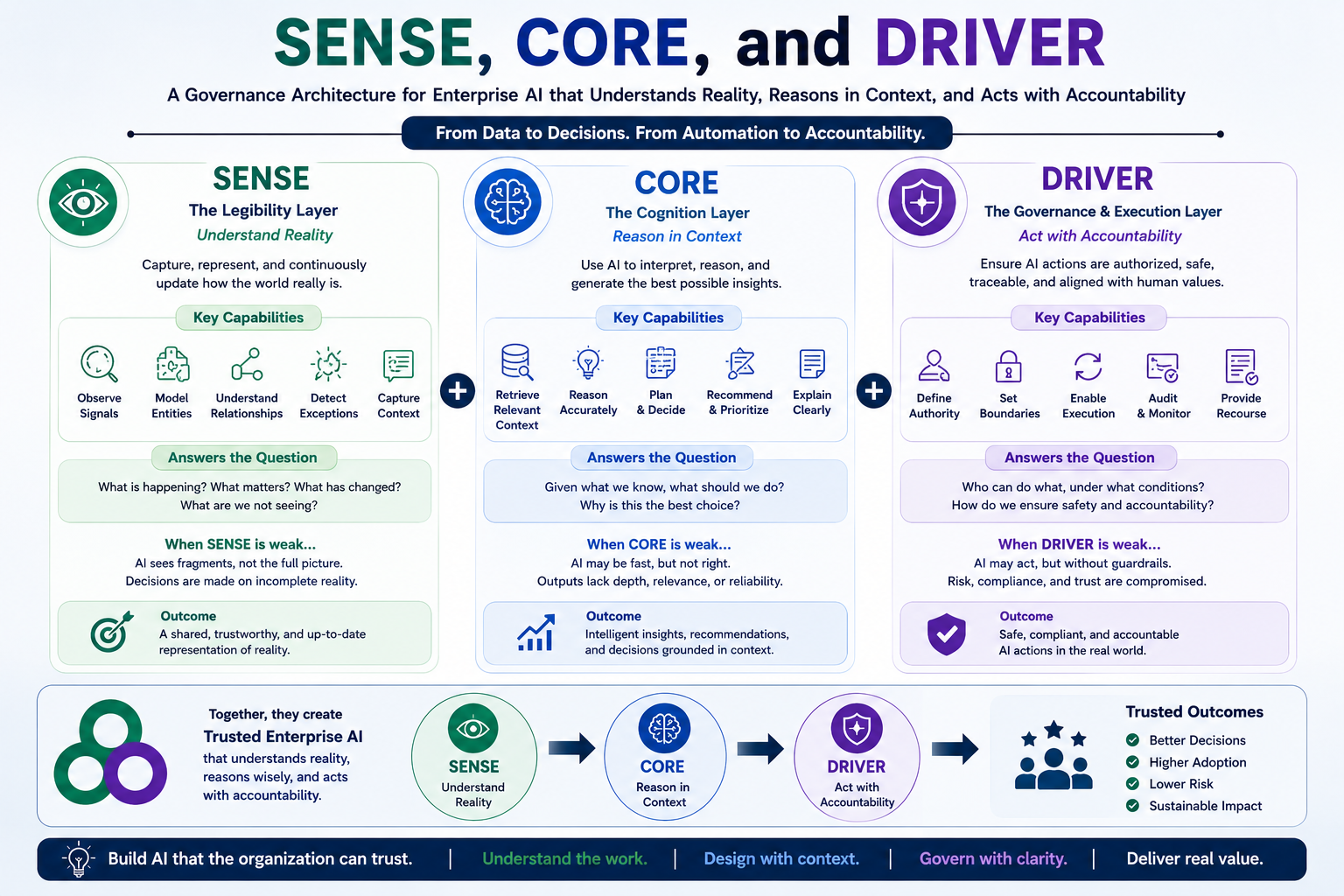

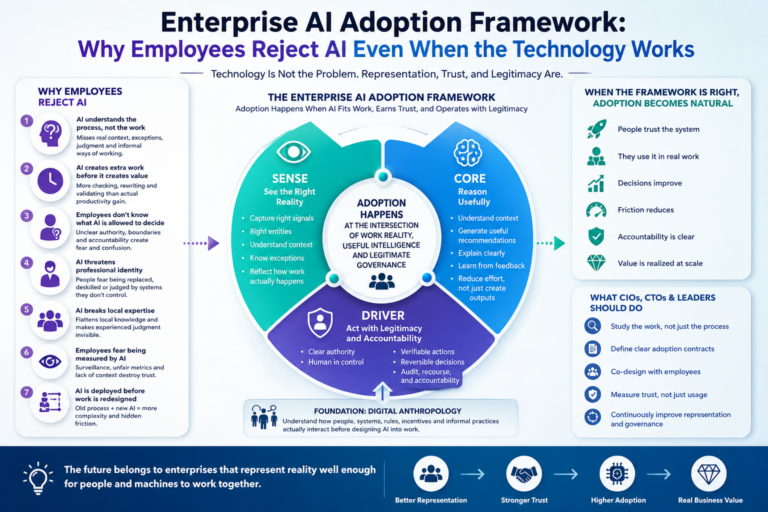

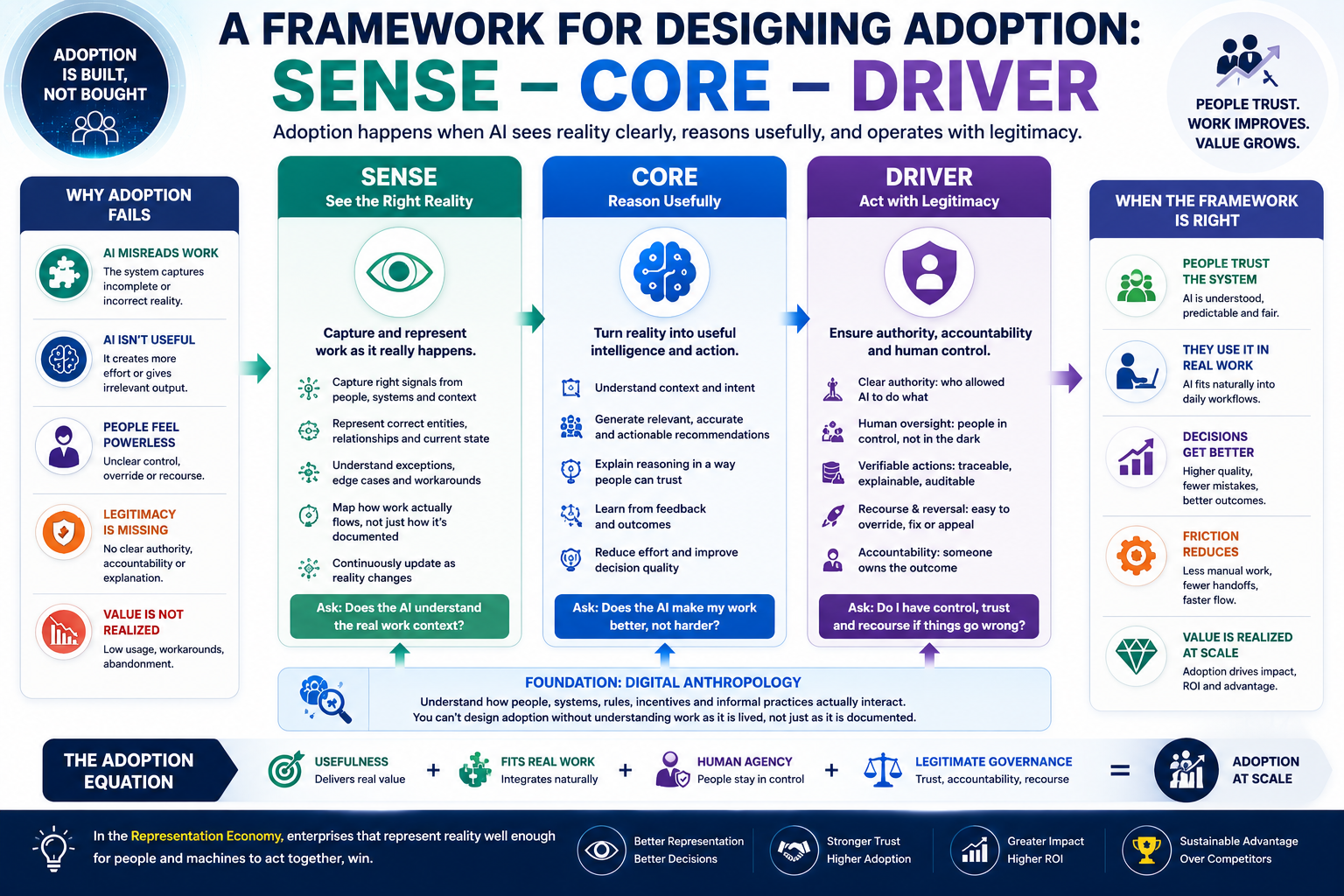

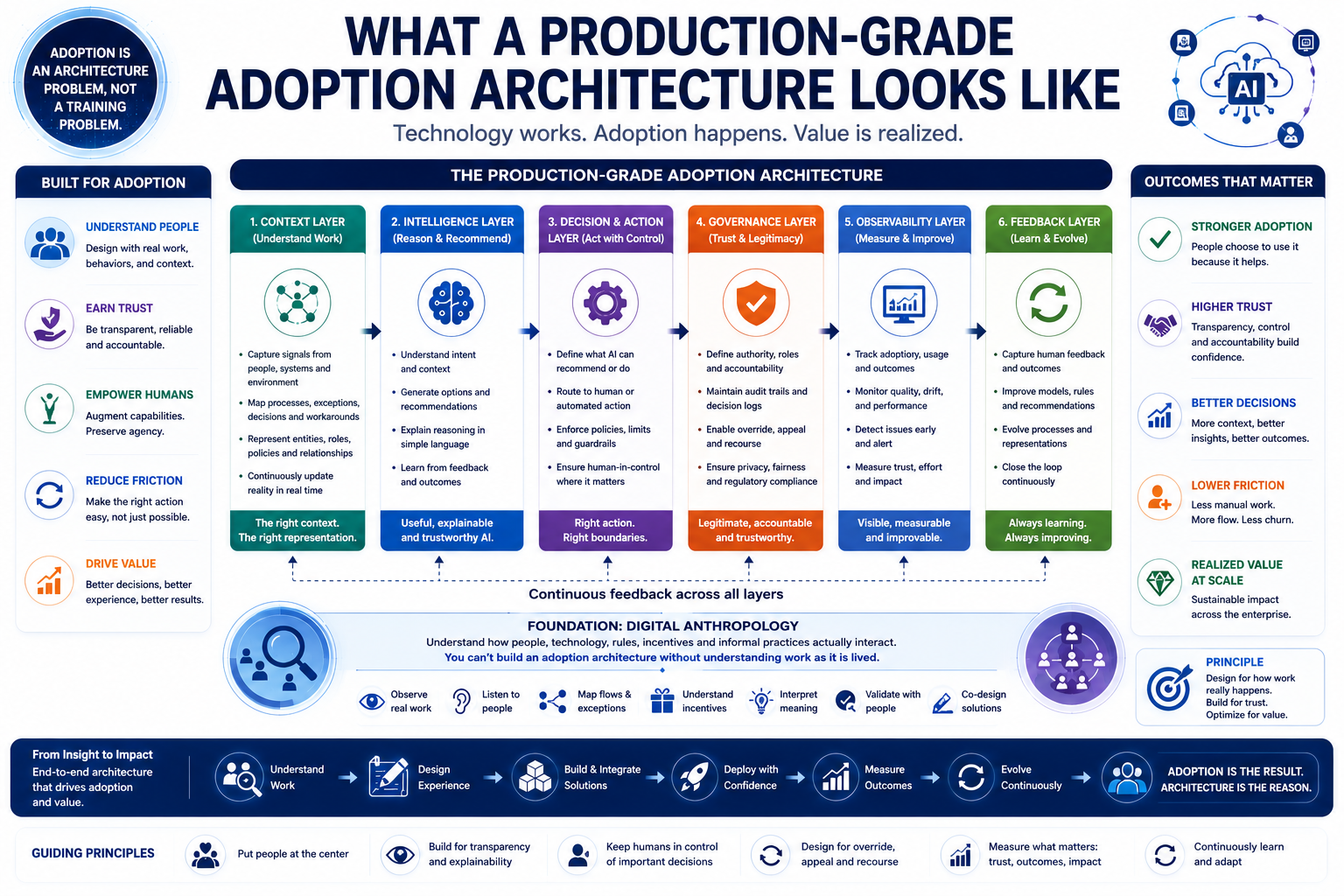

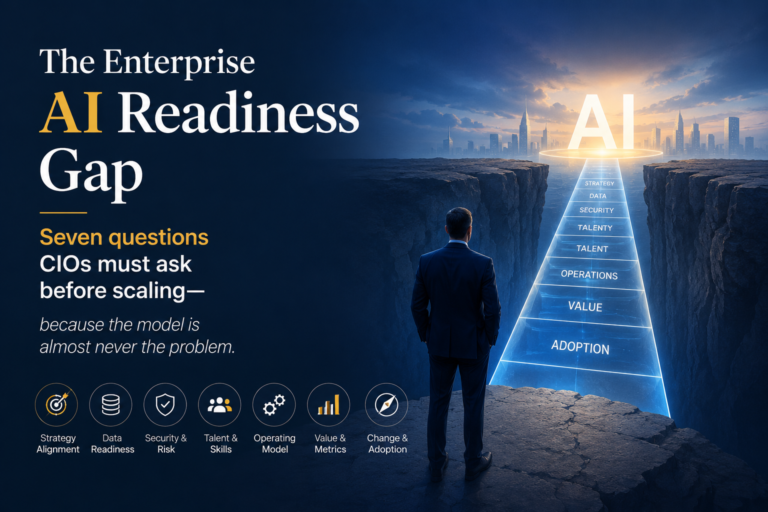

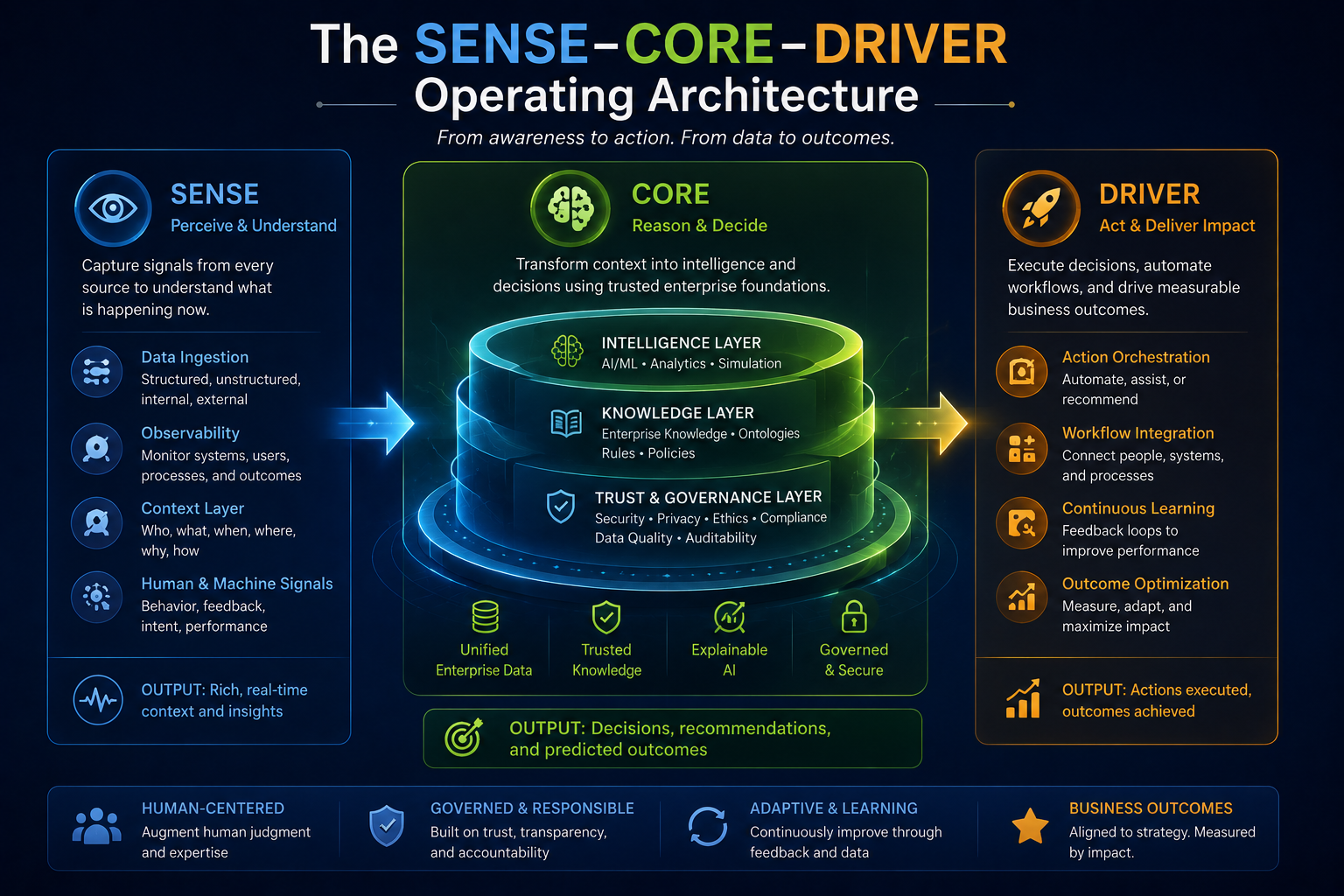

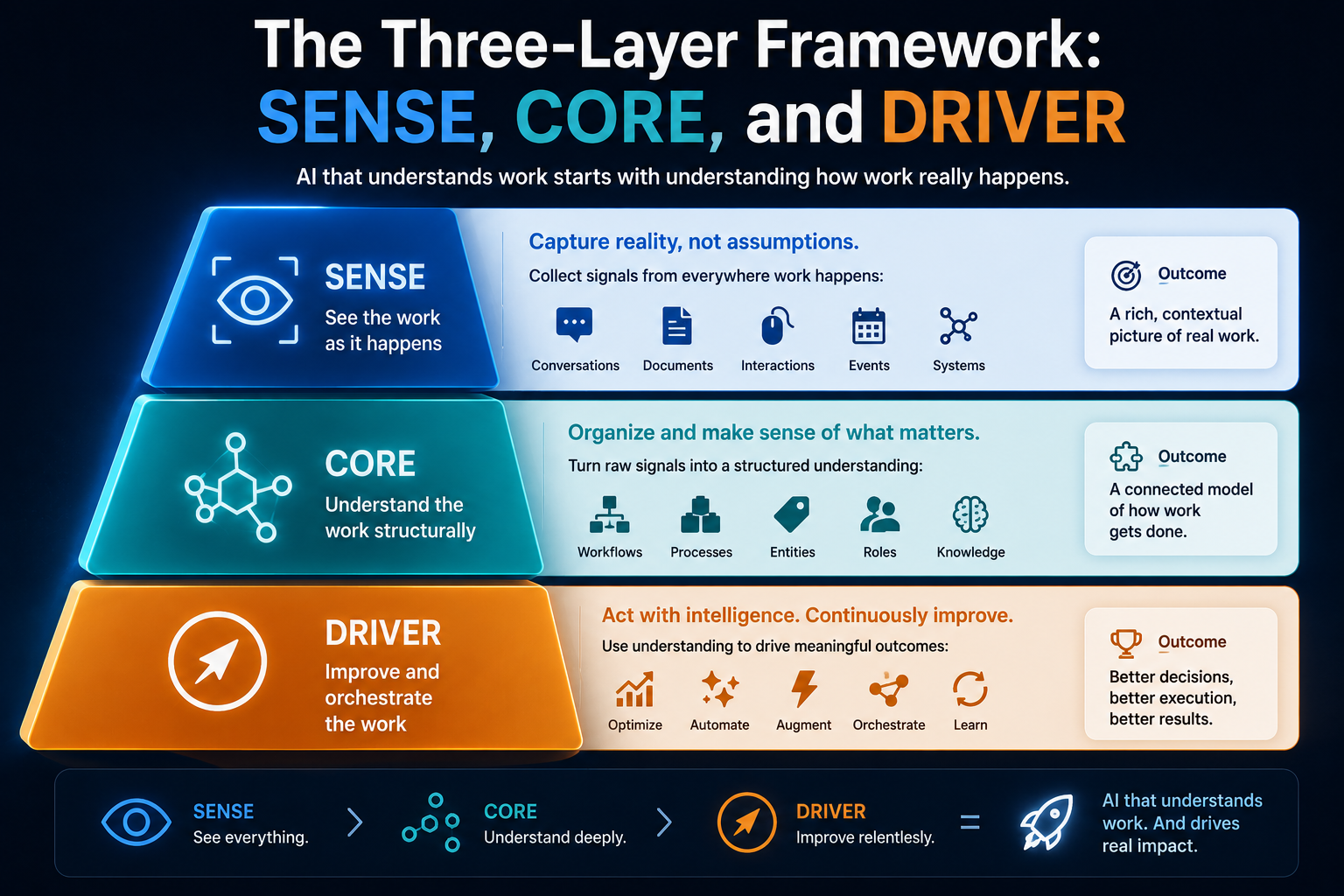

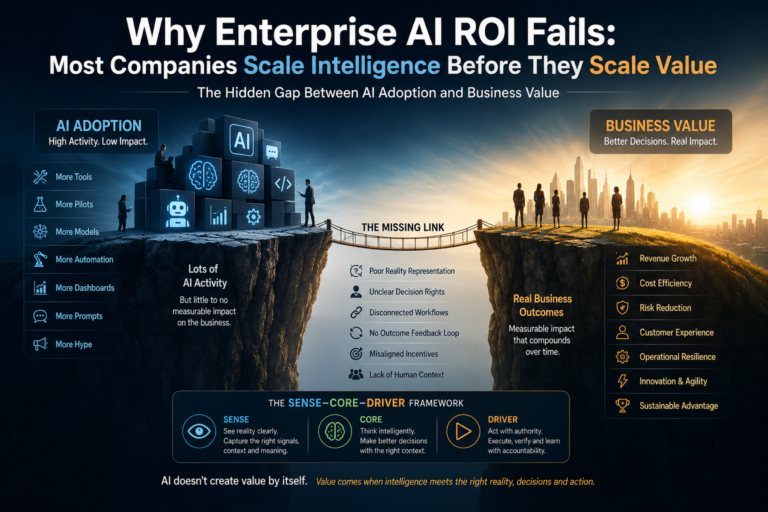

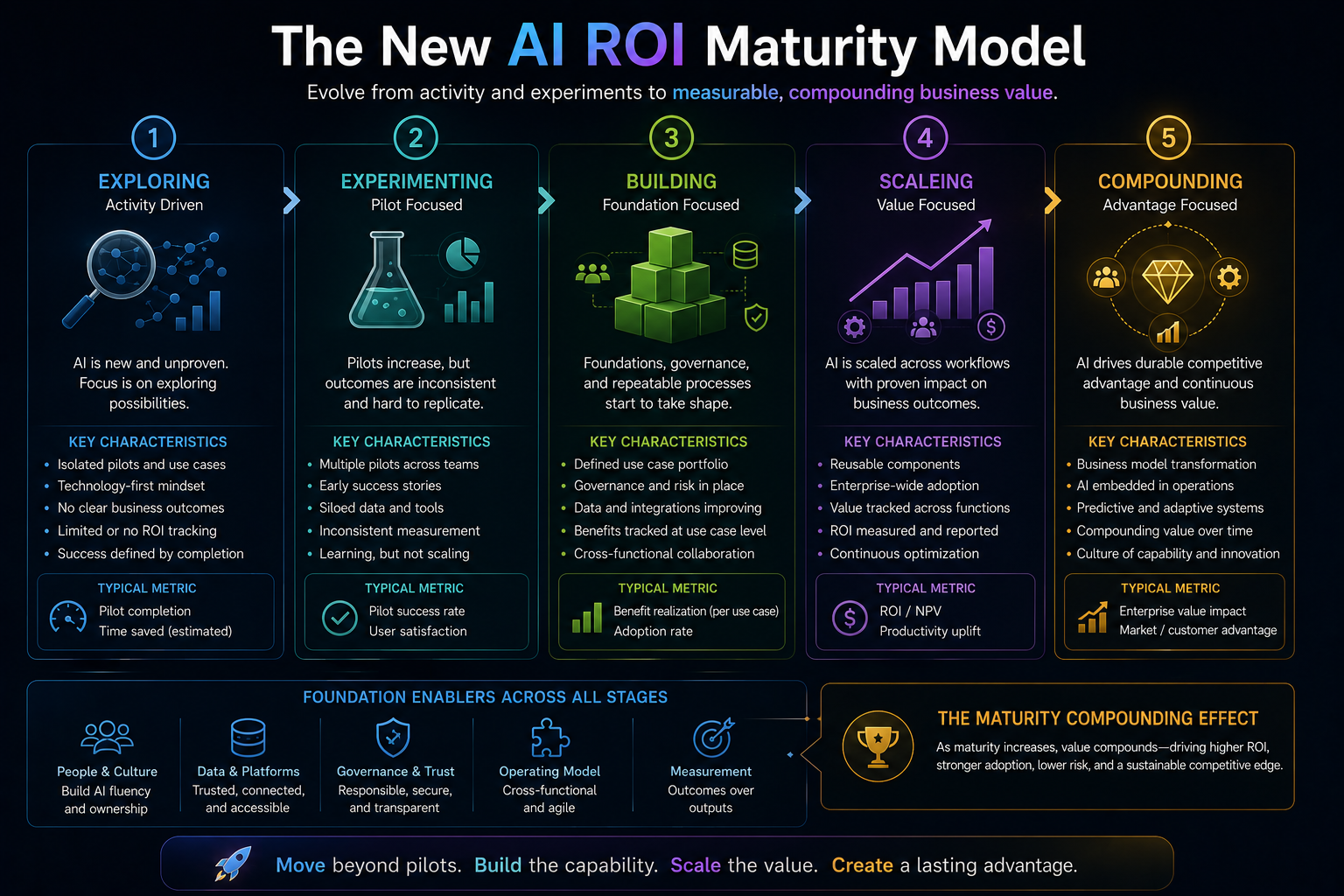

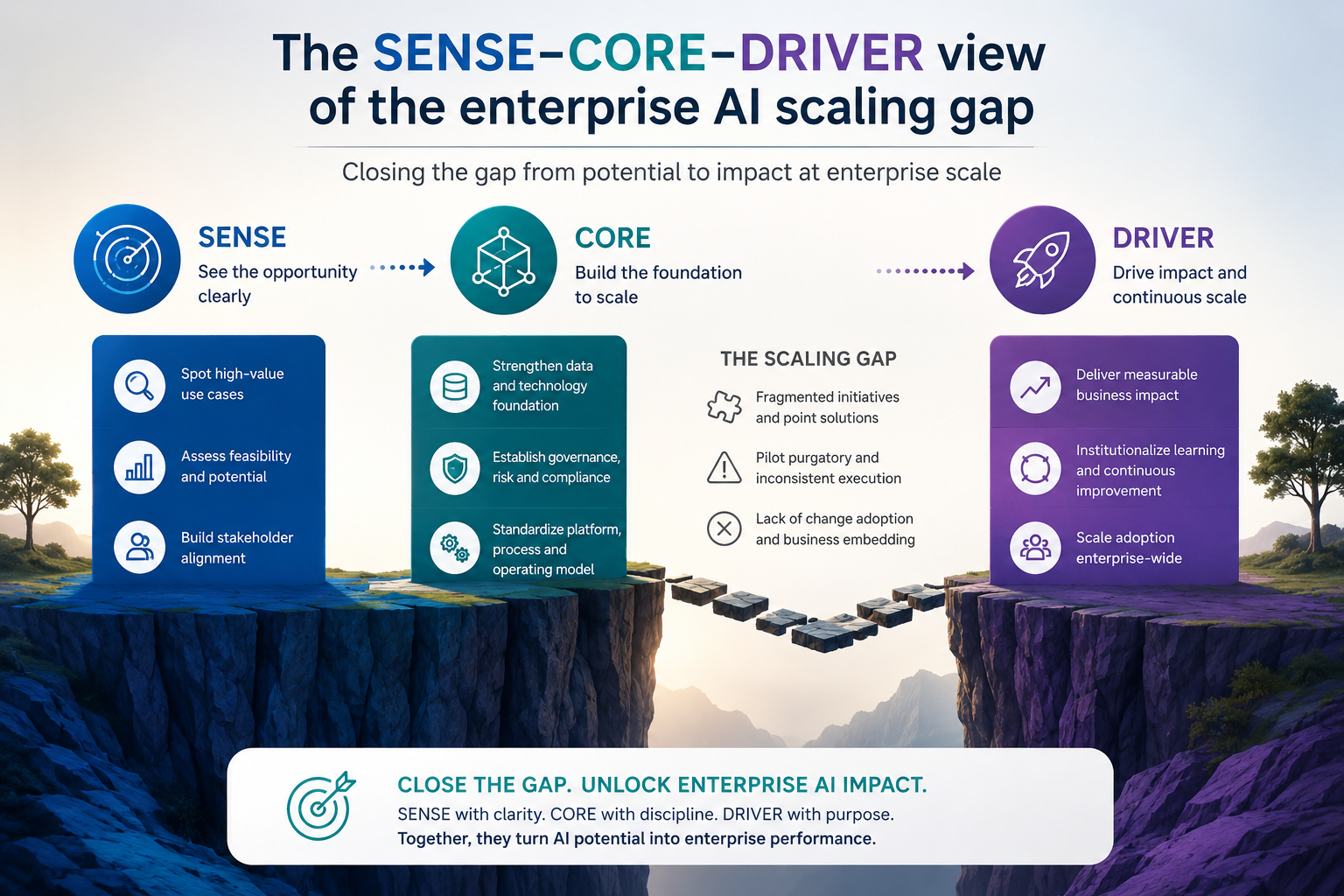

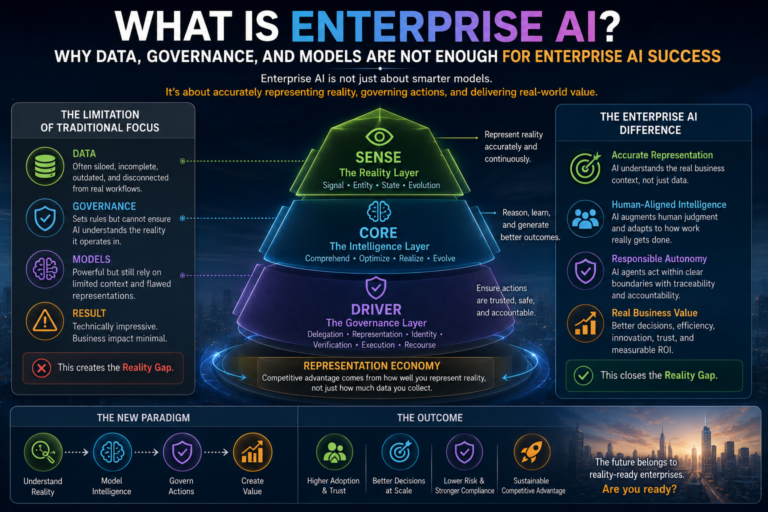

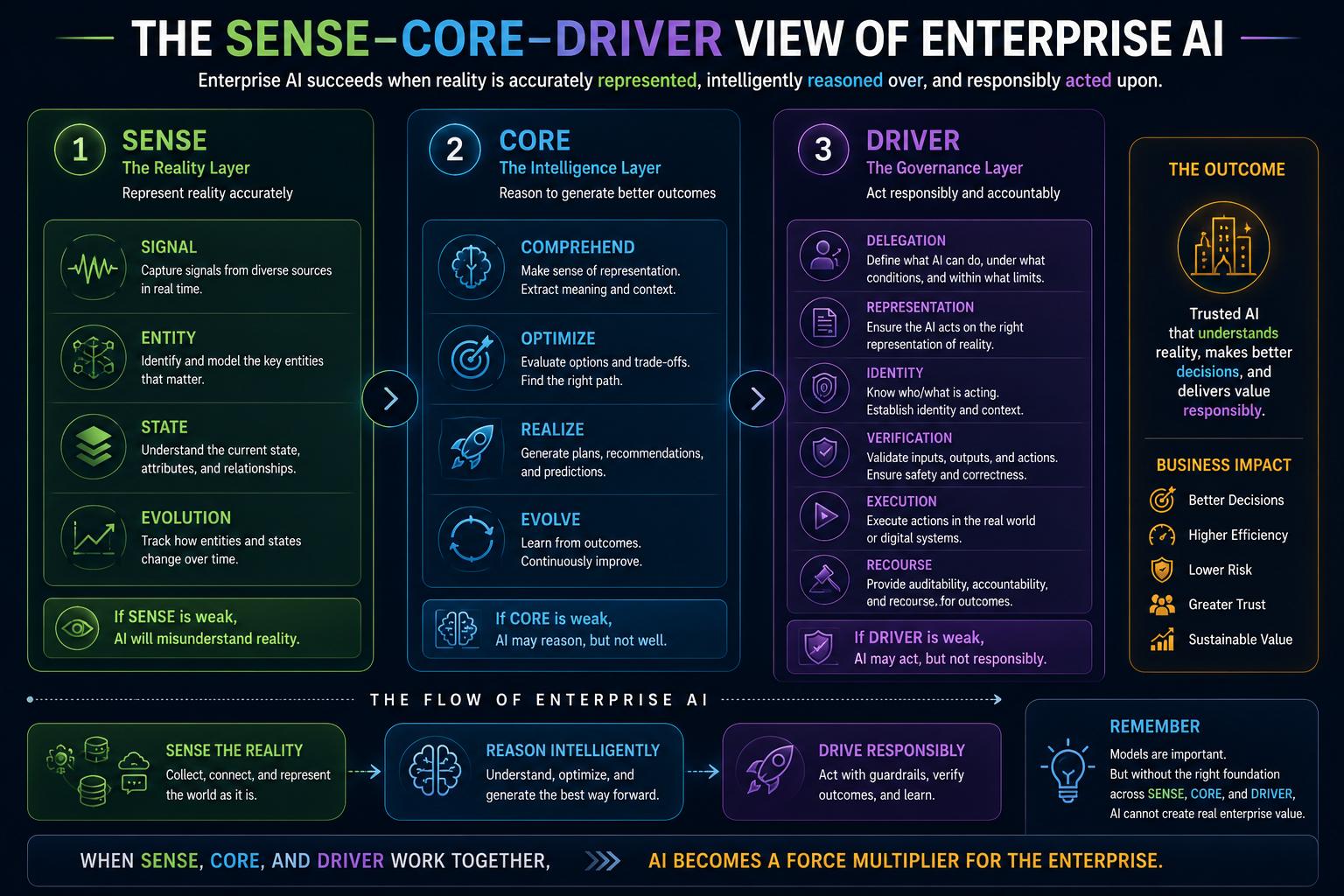

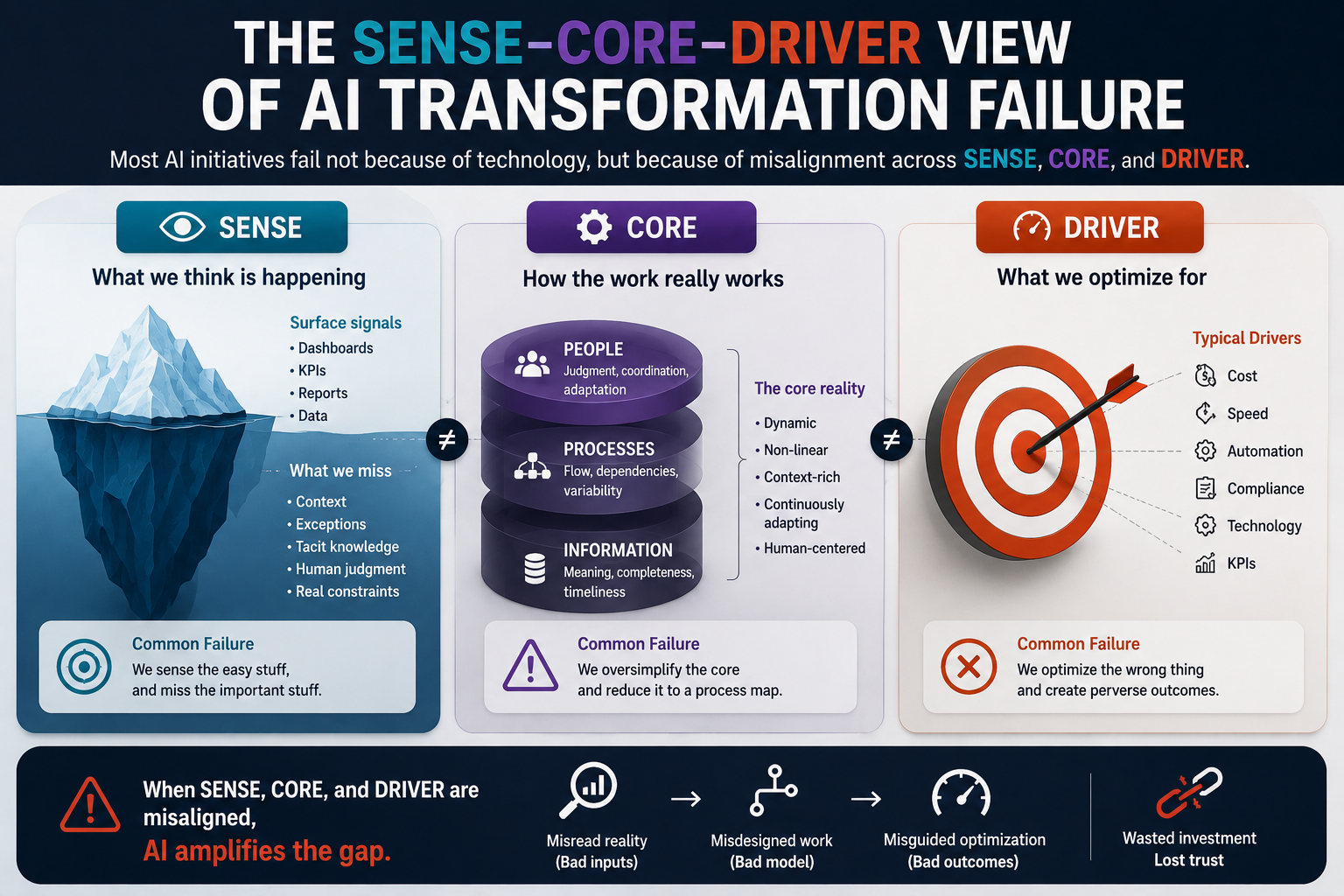

The SENSE–CORE–DRIVER view of AI transformation failure

The failure becomes easier to see through the SENSE–CORE–DRIVER framework.

SENSE is the layer where reality becomes machine-readable. It captures signals, attaches them to entities, builds state representation, and updates that state as reality changes.

CORE is the cognition layer. It reasons, predicts, summarizes, generates, recommends, compares, and optimizes.

DRIVER is the execution and legitimacy layer. It governs authority, verification, action, accountability, and recourse.

Most AI transformation programs overinvest in CORE.

They buy models. They build copilots. They create agents. They test prompts. They benchmark outputs. They compare model accuracy.

But they underinvest in SENSE and DRIVER.

They do not ask whether the AI system can see real work.

They do not ask whether the AI system understands the state of entities, processes, and obligations.

They do not ask whether the AI system has legitimate authority to recommend or act.

They do not ask whether users can challenge, reverse, or recover from AI decisions.

This is why AI transformation fails.

The CORE may be powerful.

But SENSE is weak and DRIVER is immature.

The enterprise has intelligence without enough reality, and action without enough legitimacy.
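One way to picture the three layers is as three separate functions with separate responsibilities. The sketch below is a deliberately tiny illustration, not a reference implementation of the framework: the function names, the `repeat_contacts` fact, the threshold of three, and the accountable role names are all assumptions made for the example, and a trivial rule stands in for the model in CORE.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class EntityState:
    """SENSE output: the current machine-readable state of one entity."""
    entity_id: str
    facts: Dict[str, int]


def sense(state: EntityState, signal: Dict[str, int]) -> EntityState:
    """SENSE: attach a new signal to the entity and return the updated state."""
    updated = dict(state.facts)
    updated.update(signal)
    return EntityState(state.entity_id, updated)


def core(state: EntityState) -> str:
    """CORE: reason over the represented state and propose an action.
    A trivial rule stands in for a model here."""
    if state.facts.get("repeat_contacts", 0) >= 3:
        return "escalate"
    return "reply"


def driver(action: str, allowed_actions: List[str]) -> Dict[str, object]:
    """DRIVER: check authority before acting and record who is accountable."""
    if action in allowed_actions:
        return {"action": action, "executed": True, "accountable": "ai_service_owner"}
    # No authority: hand the proposal to a human instead of acting.
    return {"action": action, "executed": False, "accountable": "human_reviewer"}


state = EntityState("ticket-1", {"repeat_contacts": 1})
state = sense(state, {"repeat_contacts": 3})
print(driver(core(state), allowed_actions=["reply"]))
```

In this toy version, CORE can be swapped for a far stronger model without changing the outcome of the last line: the proposal to escalate is still not executed, because DRIVER never granted that authority. That is the point of keeping the layers separate.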

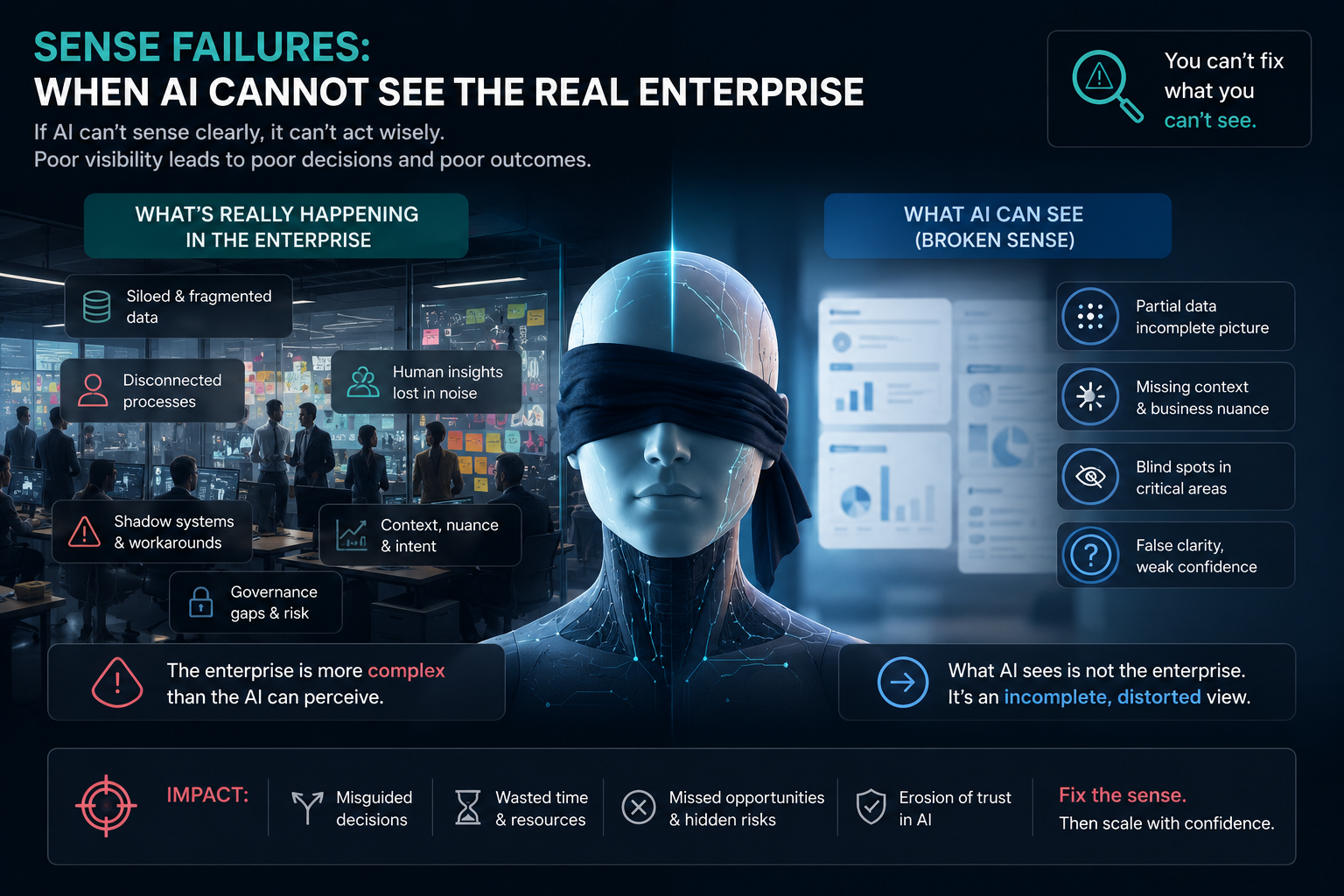

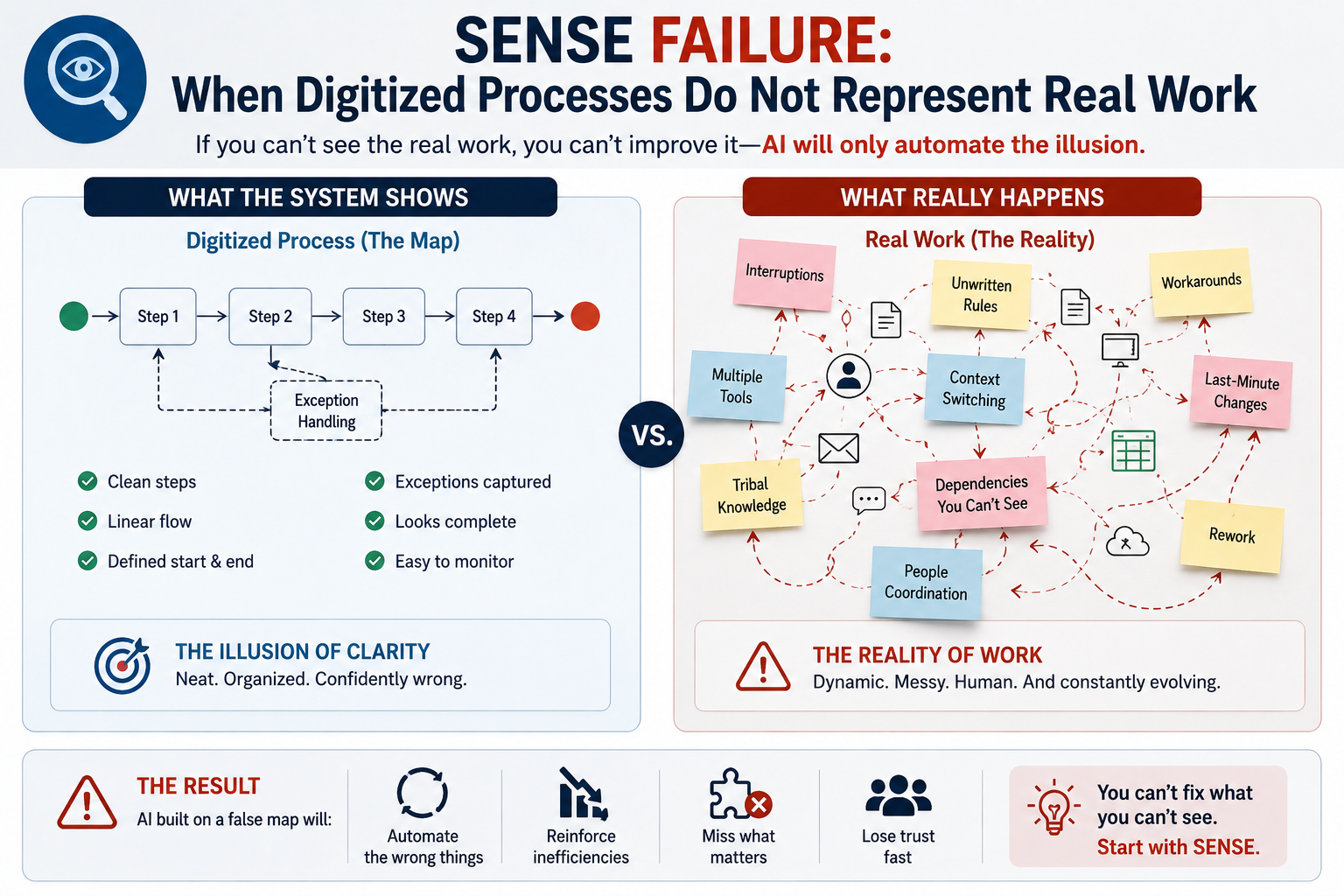

SENSE failure: when digitized processes do not represent real work

SENSE failure occurs when the organization cannot represent the reality that AI needs to understand.

A process may be digitized, but the meaning of work remains missing.

Consider a customer-service AI assistant.

The system may have access to tickets, knowledge articles, product manuals, previous responses, and customer history. On paper, this looks rich.

But the real work of customer service includes more than information retrieval.

It includes detecting emotion, understanding urgency, recognizing repeated failure, knowing when policy should be interpreted carefully, identifying when a customer has lost trust, and deciding when escalation is not just procedural but relational.

If those signals are not represented, the AI may provide technically correct but emotionally wrong responses.

Now consider enterprise IT support.

A ticket may say “application slow.”

But what does that mean?

For one employee, it may be a minor inconvenience. For another, it may block a regulatory filing. For a sales team, it may affect a major client demo. For a plant operator, it may delay production.

The same ticket category may represent very different business consequences.

If the AI sees only the ticket label, it misses the work.

That is SENSE failure.

The enterprise digitized the process, but not the operational meaning.
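The "application slow" example can be sketched in a few lines. Everything here is hypothetical: the `blocked_activity` field, the activity names, and the severity scores are invented for illustration and do not come from any ticketing product or standard. The sketch shows two tickets with an identical label receiving different priorities once the blocked business activity is represented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Ticket:
    label: str
    # Hypothetical context field; None means the context was never captured.
    blocked_activity: Optional[str] = None


# Illustrative severity scores, not taken from any standard.
IMPACT = {
    "regulatory_filing": 4,
    "production_line": 4,
    "client_demo": 3,
    "routine_work": 1,
}


def priority(ticket: Ticket) -> int:
    """Score a ticket by what it blocks, not by its label alone."""
    if ticket.blocked_activity is None:
        # Label-only view: every "application slow" ticket looks the same.
        return 1
    # Unrecognized activities get a middle score so they are not ignored.
    return IMPACT.get(ticket.blocked_activity, 2)


label_only = Ticket("application slow")
with_context = Ticket("application slow", blocked_activity="regulatory_filing")
print(priority(label_only), priority(with_context))
```

The label is the same in both tickets; only the second carries the operational meaning that an experienced support lead would otherwise have to supply from memory.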

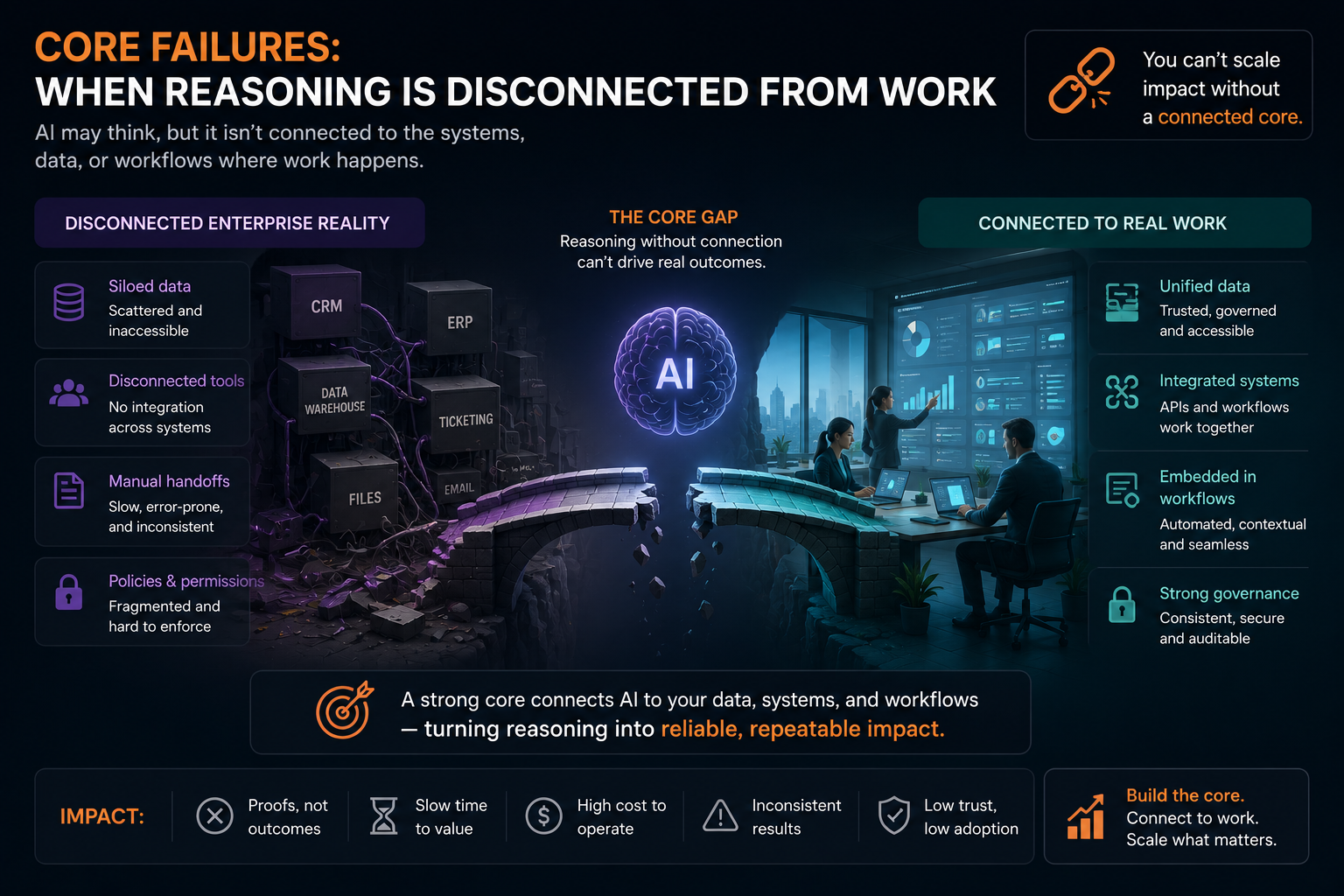

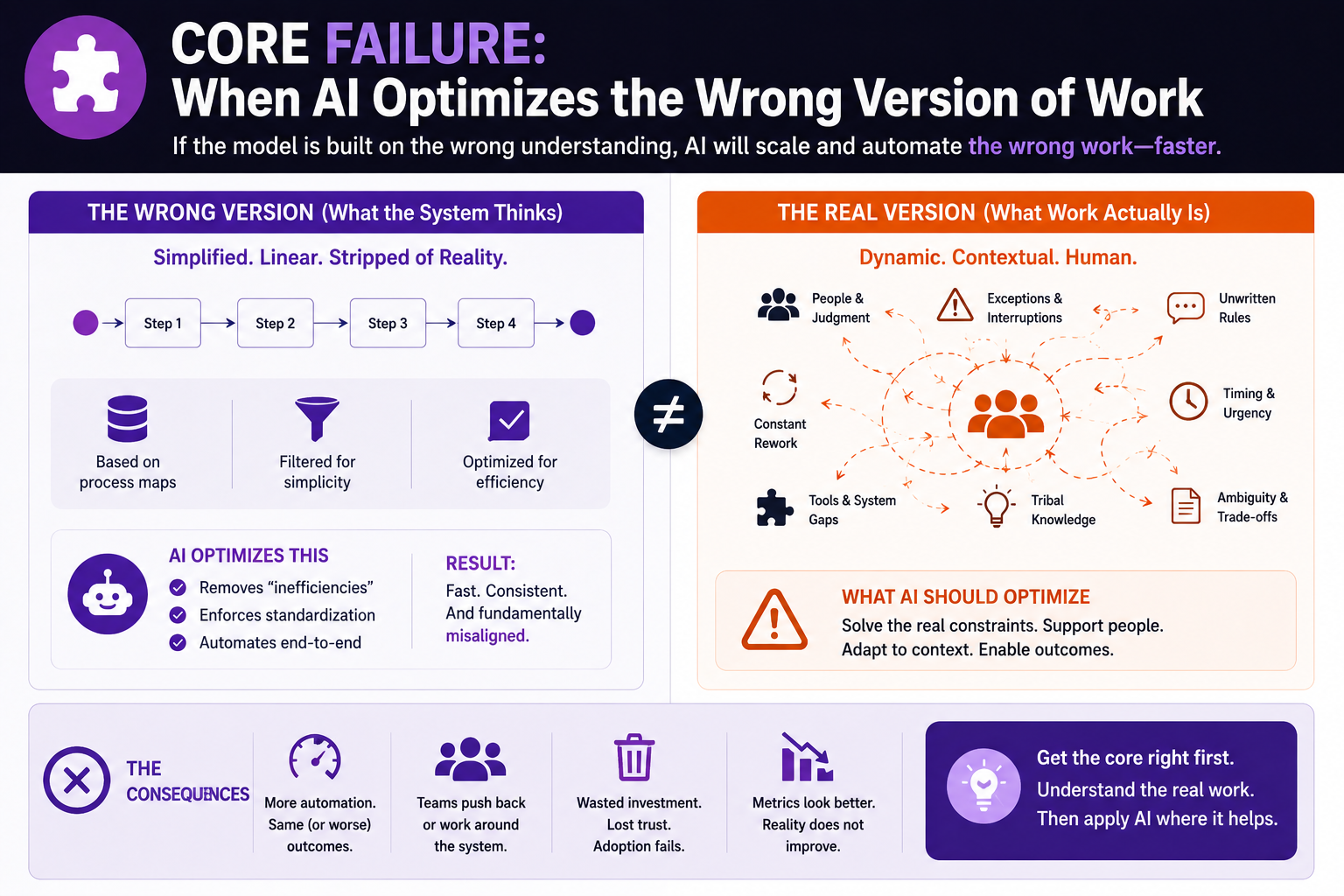

CORE failure: when AI optimizes the wrong version of work

CORE failure happens when AI reasons well over the wrong representation.

This is especially dangerous because the AI output may look sophisticated.

It may summarize well. It may classify well. It may recommend confidently. It may even produce better-looking reports than humans.

But if the system misunderstands work, better reasoning can still produce worse outcomes.

An AI system may recommend closing old support tickets to improve service metrics. But experienced managers may know that some old tickets represent complex enterprise issues that require relationship handling, not closure.

An AI system may recommend prioritizing high-volume customer complaints. But the strategically important risk may come from a small number of complaints from high-value institutional clients.

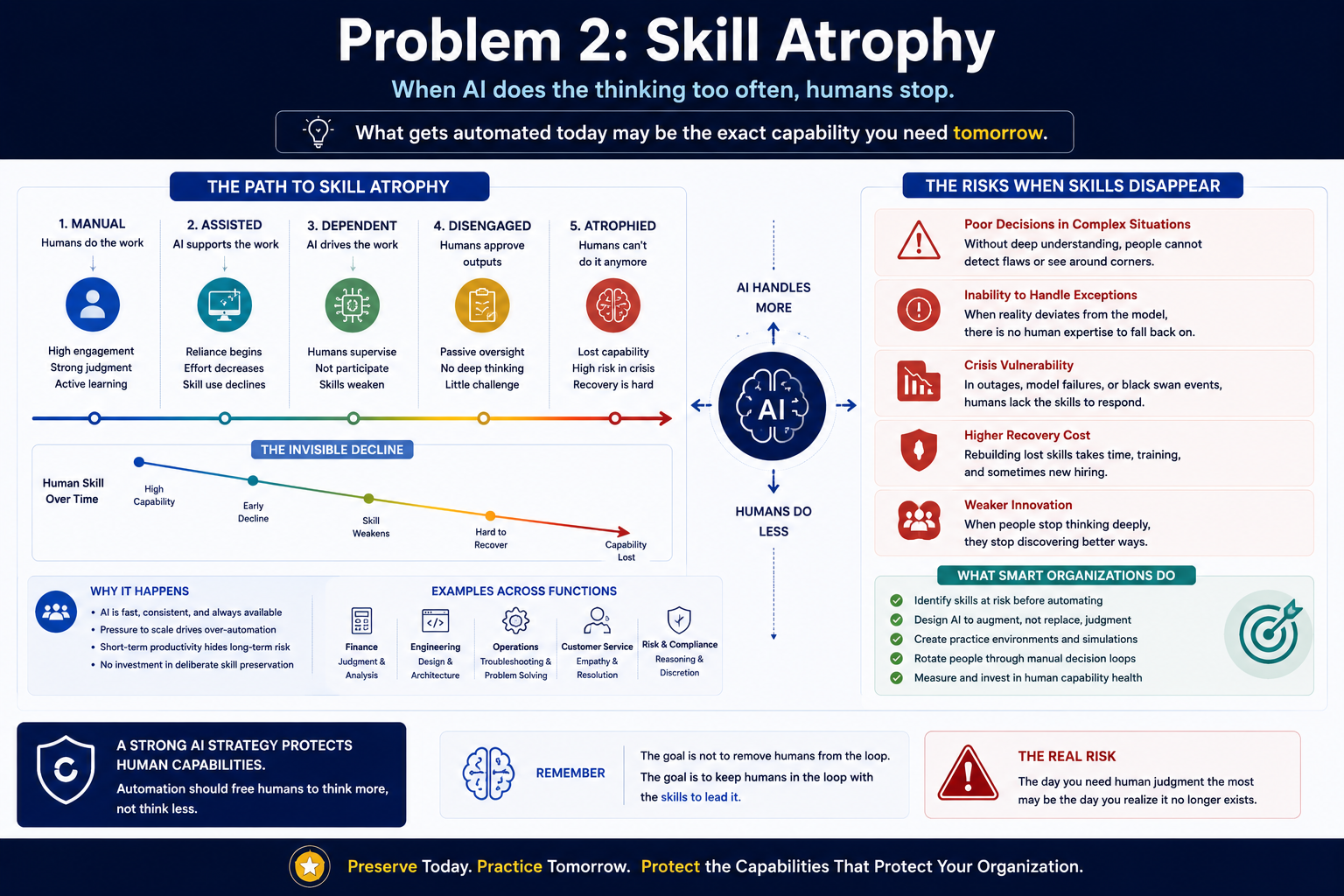

An AI system may recommend automating low-complexity tasks. But those tasks may be where junior employees learn the business.

An AI system may recommend reducing manual review. But manual review may be the place where hidden fraud patterns are discovered.

This is why AI transformation requires more than workflow automation.

It requires work interpretation.

The question is not only, “Can AI optimize the process?”

The question is, “Does AI understand what the process is really doing for the institution?”

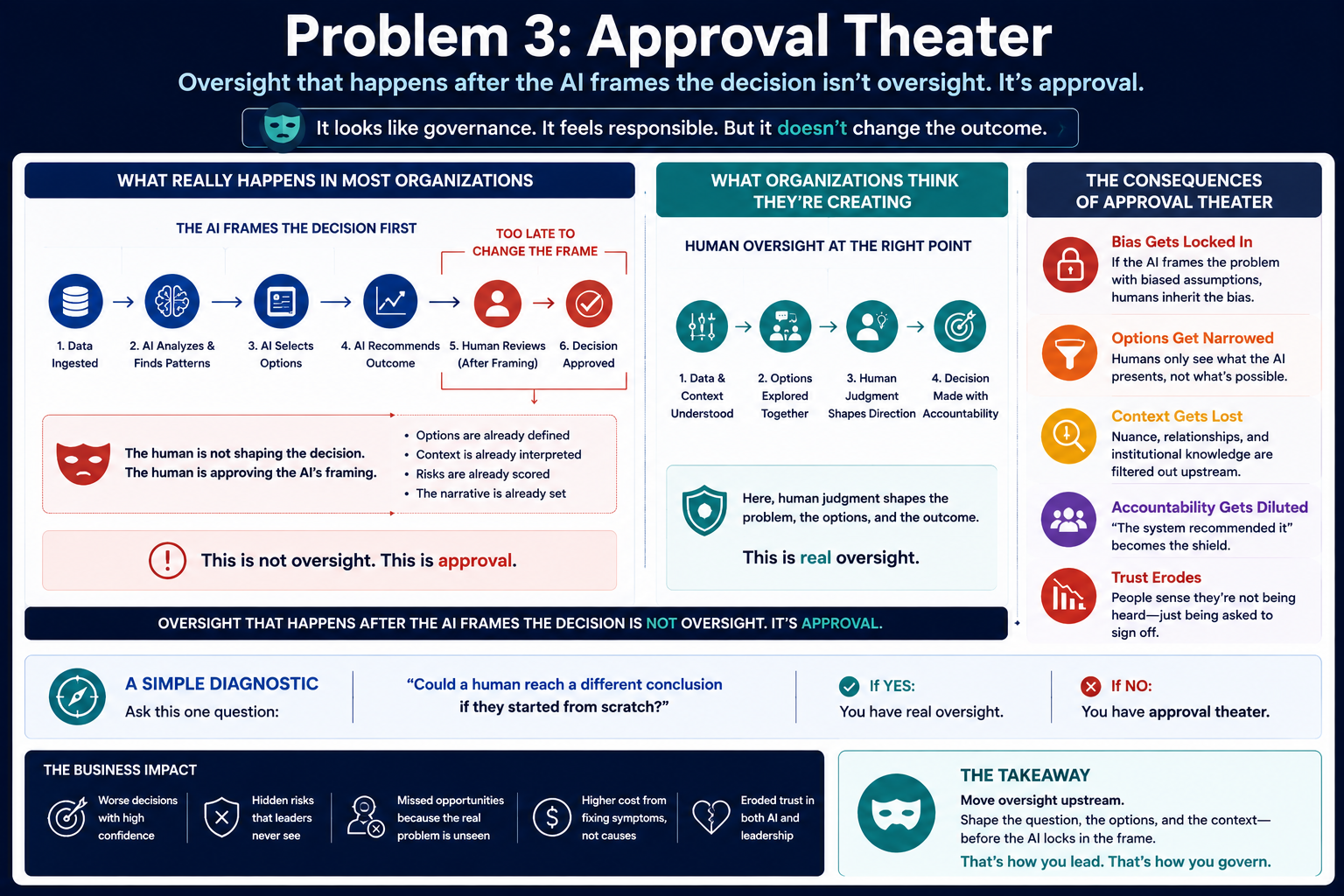

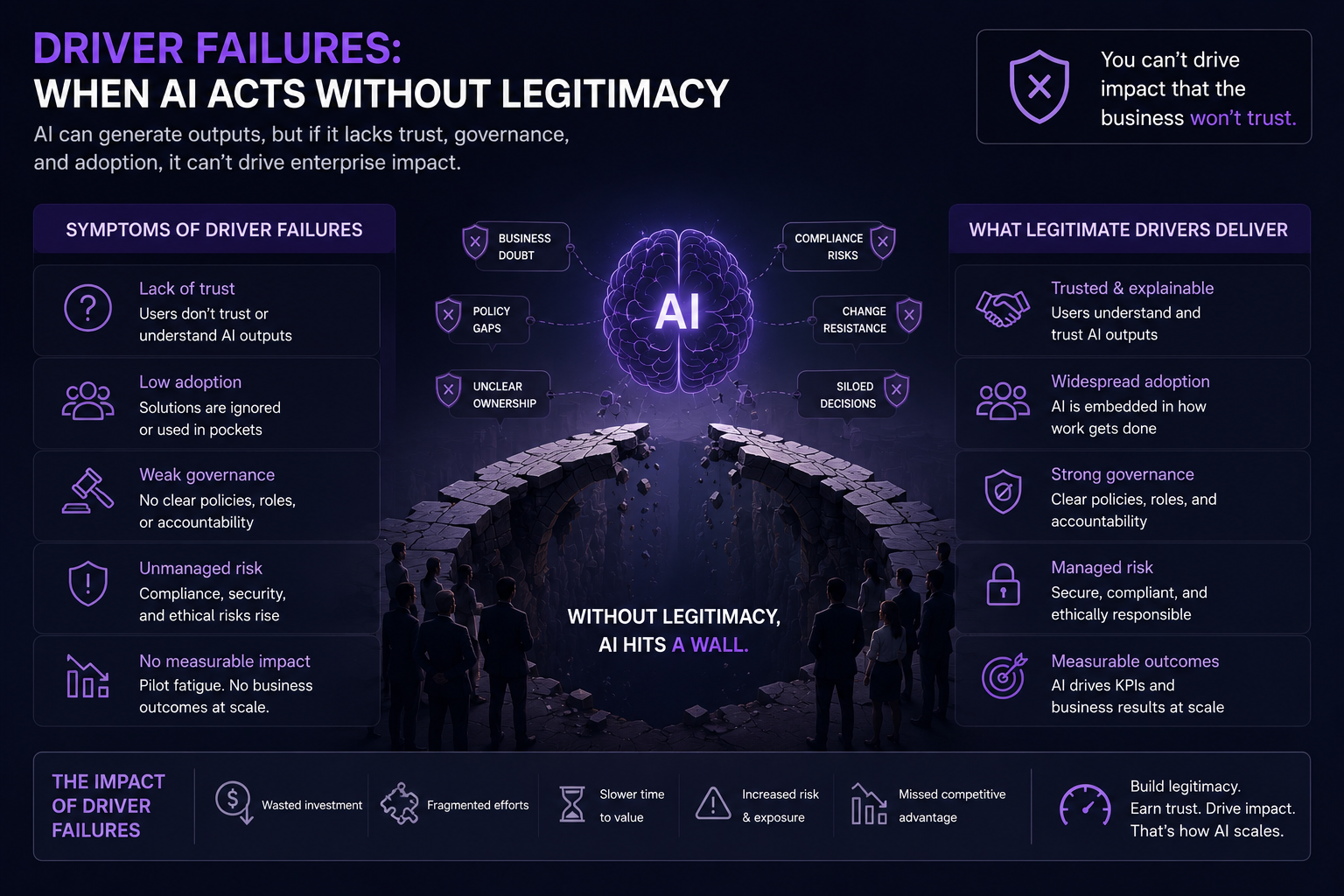

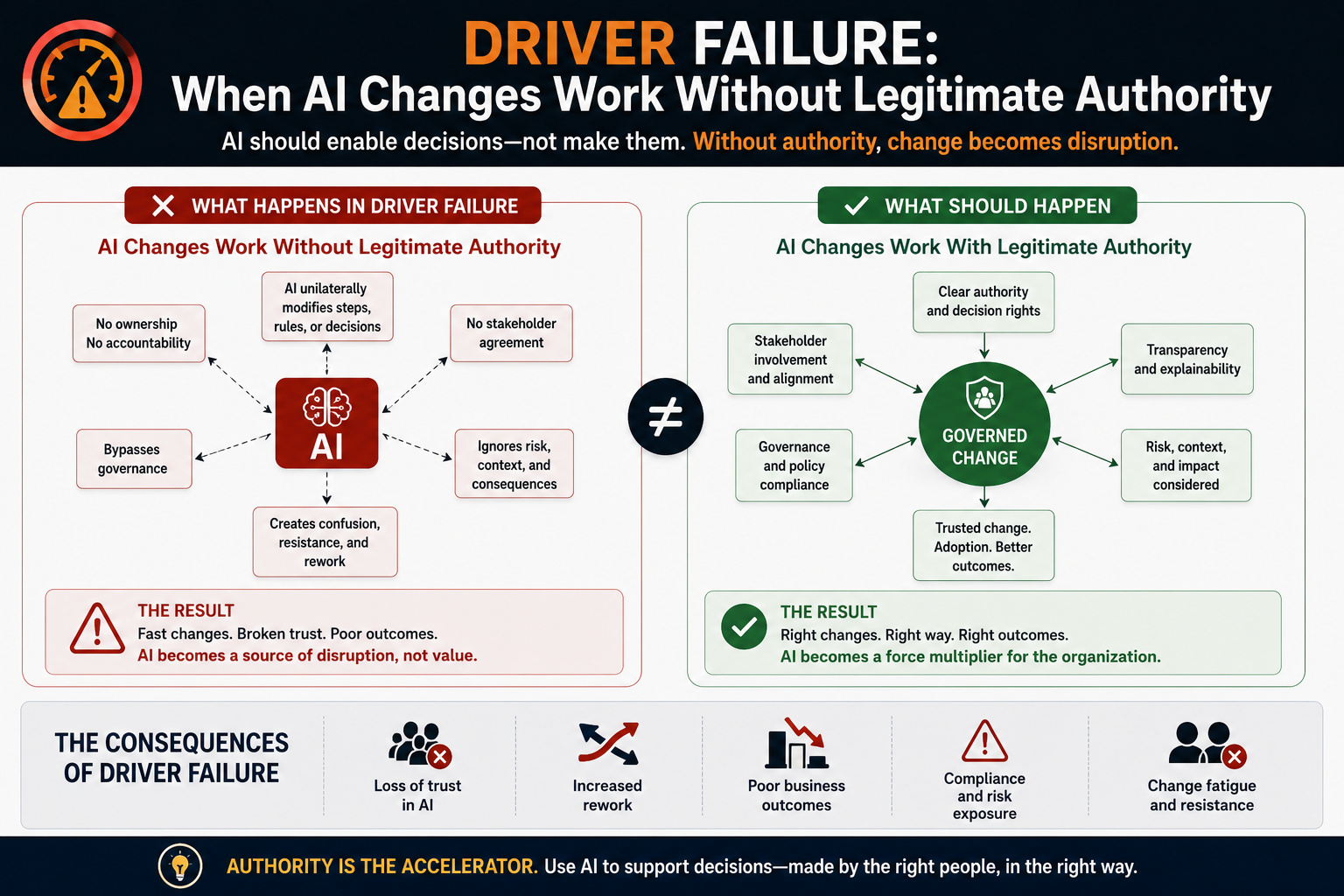

DRIVER failure: when AI changes work without legitimate authority

DRIVER failure appears when AI starts influencing action without clear authority, accountability, or recourse.

In many companies, AI begins as a recommendation layer.

Then it quietly becomes a decision-shaping layer.

Then it becomes an action layer.

A copilot suggests a response.

A workflow engine prioritizes tasks.

An agent escalates cases.

A model flags risk.

A system recommends approval.

A dashboard changes management attention.

At each stage, AI begins to shape work.

But who gave it authority?

Who decides what it may influence?

Who verifies its assumptions?

Who is accountable if the outcome is wrong?

Can affected employees, customers, suppliers, or partners challenge the result?

Can the organization reverse the action?

This is where many AI transformation programs become fragile.

They focus on adoption but not legitimacy.

They focus on efficiency but not recourse.

They focus on speed but not accountability.

In the AI era, authority must be designed.

It cannot be assumed.

That is the DRIVER layer.
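Designing authority, rather than assuming it, can start with something as simple as an explicit policy table. The following is a minimal sketch under stated assumptions: the action names, role names, and the three authority levels are hypothetical choices for this example, and a real organization would define its own. It encodes three of the questions above: what the AI may influence, who is accountable, and whether the action can be reversed.

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    ADVISE = "advise"       # AI may only recommend
    ACT = "act"             # AI may execute on its own
    HUMAN_ONLY = "human"    # AI must not influence the decision


@dataclass(frozen=True)
class ActionPolicy:
    authority: Authority
    accountable_owner: str  # a named role, never "the model"
    reversible: bool        # can the organization undo the action?


# Hypothetical policy table for illustration.
POLICIES = {
    "draft_reply": ActionPolicy(Authority.ACT, "support_lead", True),
    "approve_refund": ActionPolicy(Authority.ADVISE, "finance_manager", True),
    "close_account": ActionPolicy(Authority.HUMAN_ONLY, "account_director", False),
}


def may_execute(action: str) -> bool:
    """Allow autonomous execution only for actions that were explicitly
    granted ACT authority and can be reversed. Unknown actions are denied."""
    policy = POLICIES.get(action)
    return (
        policy is not None
        and policy.authority is Authority.ACT
        and policy.reversible
    )


for name in ("draft_reply", "approve_refund", "close_account", "unlisted_action"):
    print(name, may_execute(name))
```

Denying unlisted actions by default is the important choice: authority that is not written down is treated as authority that was never given.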

Why digital anthropology belongs in enterprise AI architecture

Digital anthropology may sound far away from enterprise architecture.

It is not.

It may be one of the missing disciplines in AI transformation.

Enterprise architects map systems.

Process teams map workflows.

Data teams map information.

Risk teams map controls.

But who maps lived work?

Who studies how people actually behave inside the digital enterprise?

Who observes where employees override systems, where customers lose trust, where managers rely on informal networks, where policies are interpreted, and where workarounds keep the organization alive?

This is the role of digital anthropology.

It brings the human operating system into view.

For AI transformation, this matters because AI systems increasingly interact with work as it happens. They are not just sitting behind dashboards. They are entering conversations, workflows, approvals, support processes, coding environments, design systems, risk engines, and customer journeys.

If architects do not understand lived work, they will design AI around the visible process.

And that is exactly where transformation fails.

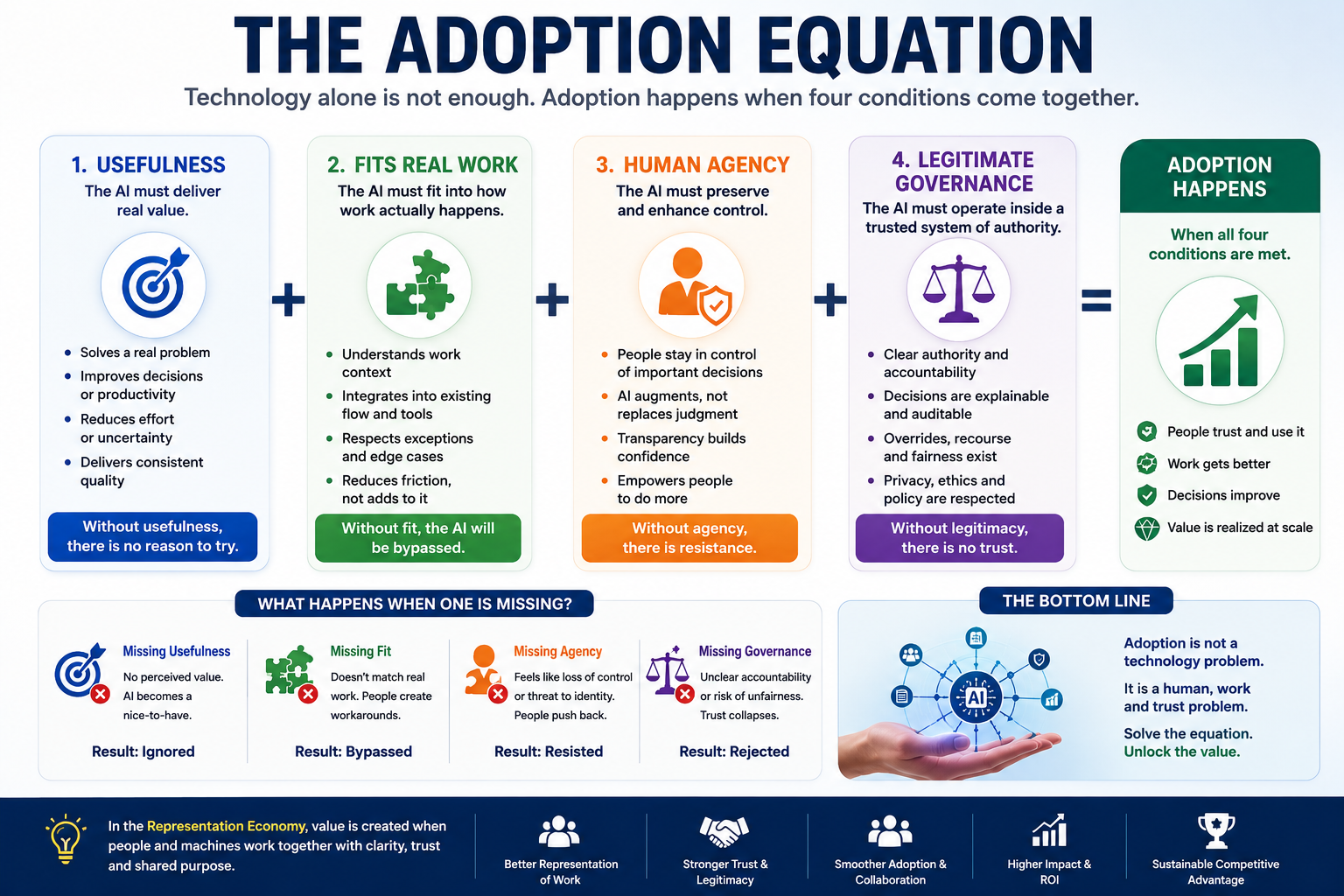

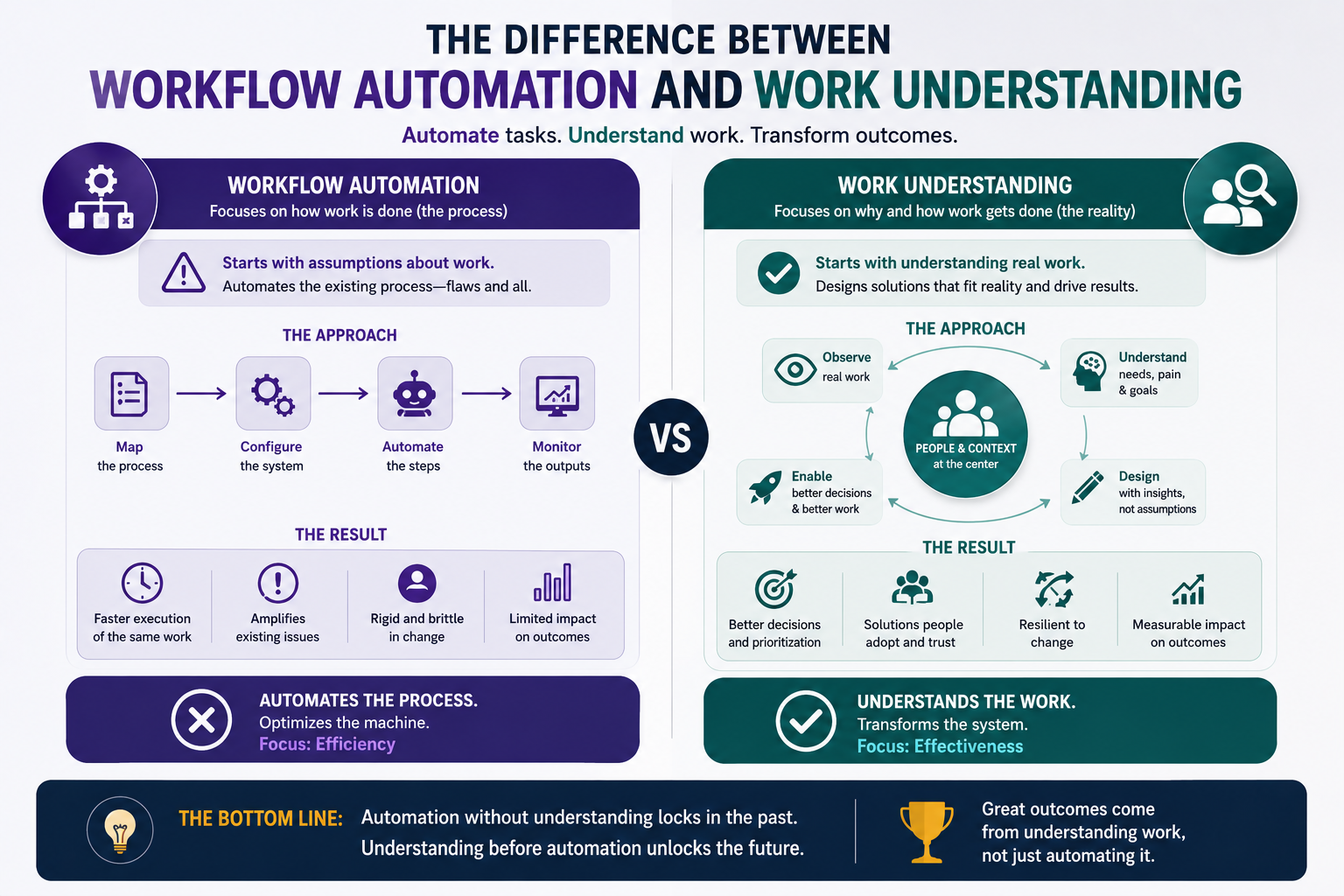

The difference between workflow automation and work understanding

Workflow automation asks: what steps can be digitized or automated?

Work understanding asks: why does the work exist, what judgment does it require, and what reality must be represented before AI should intervene?

This difference is critical.

A workflow automation mindset may say:

“Employees spend too much time reviewing invoices. Let us use AI to automate invoice validation.”

A work-understanding mindset asks:

Which invoices are routine?

Which vendors are risky?

Which exceptions matter?

Which mismatches are harmless?

Which mismatches reveal fraud, dispute, or process weakness?

Which approvals are formalities?

Which approvals carry real accountability?

Which human judgments should remain?

The first approach automates a process.

The second approach redesigns institutional intelligence.

That is the difference between digitization and AI transformation.

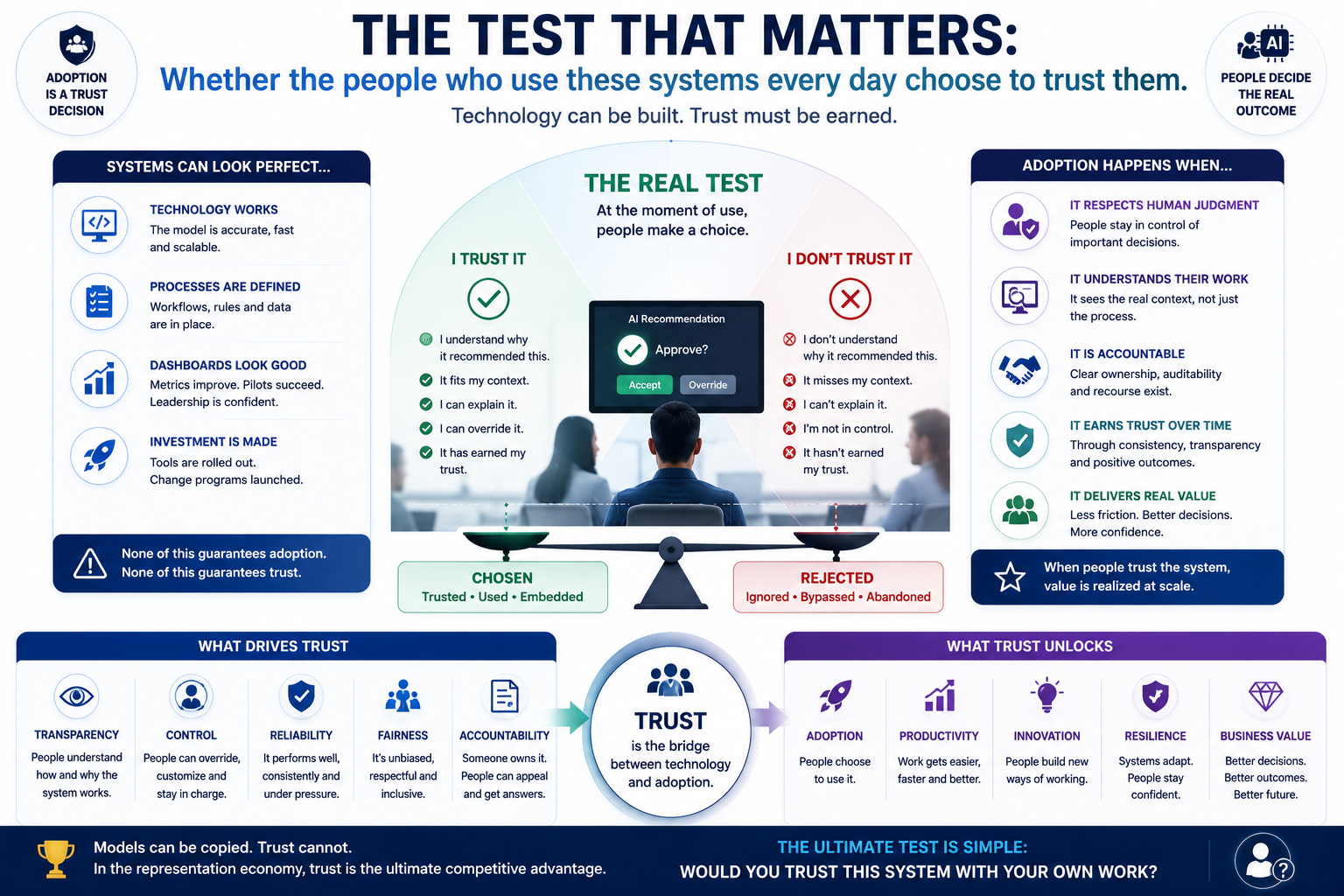

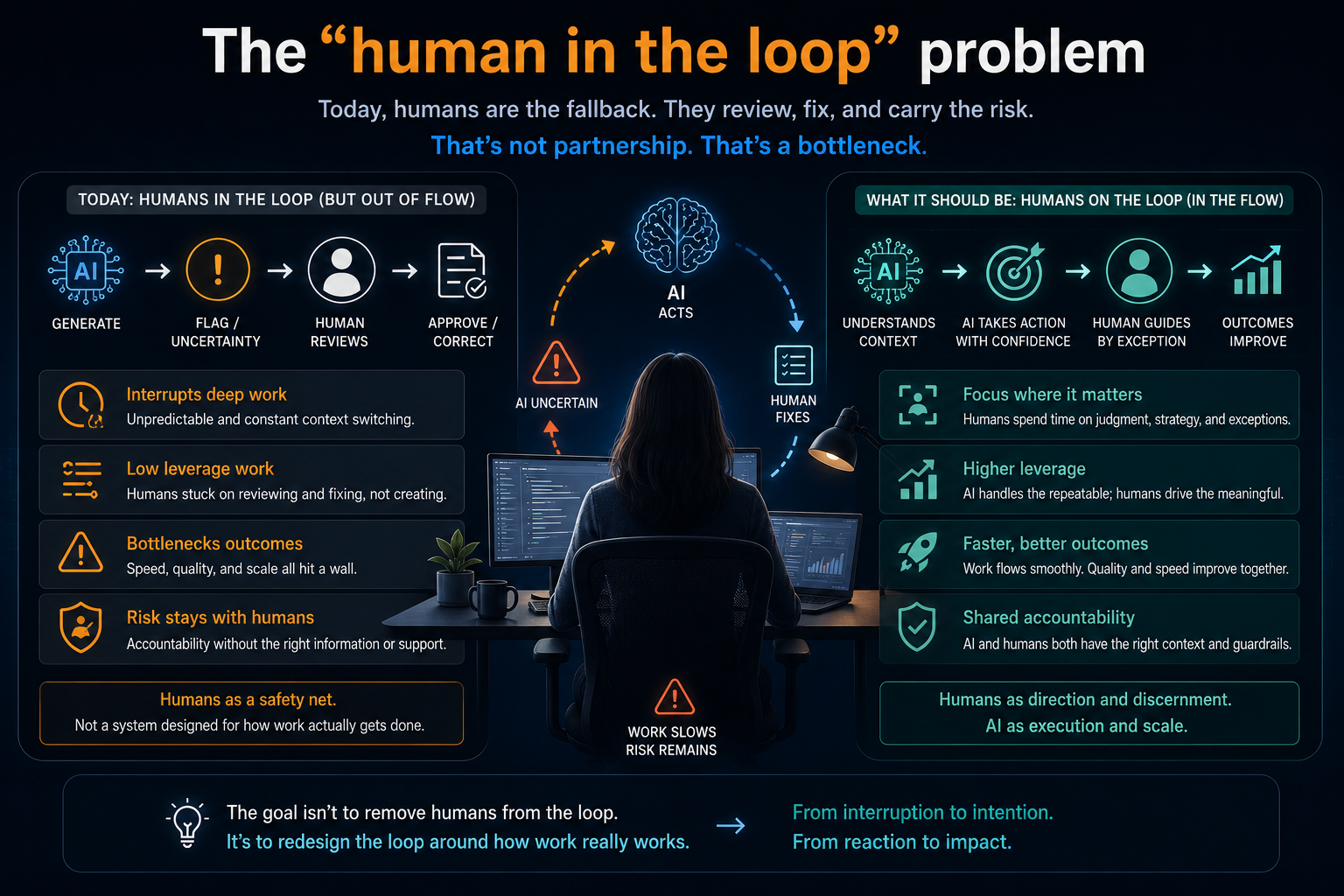

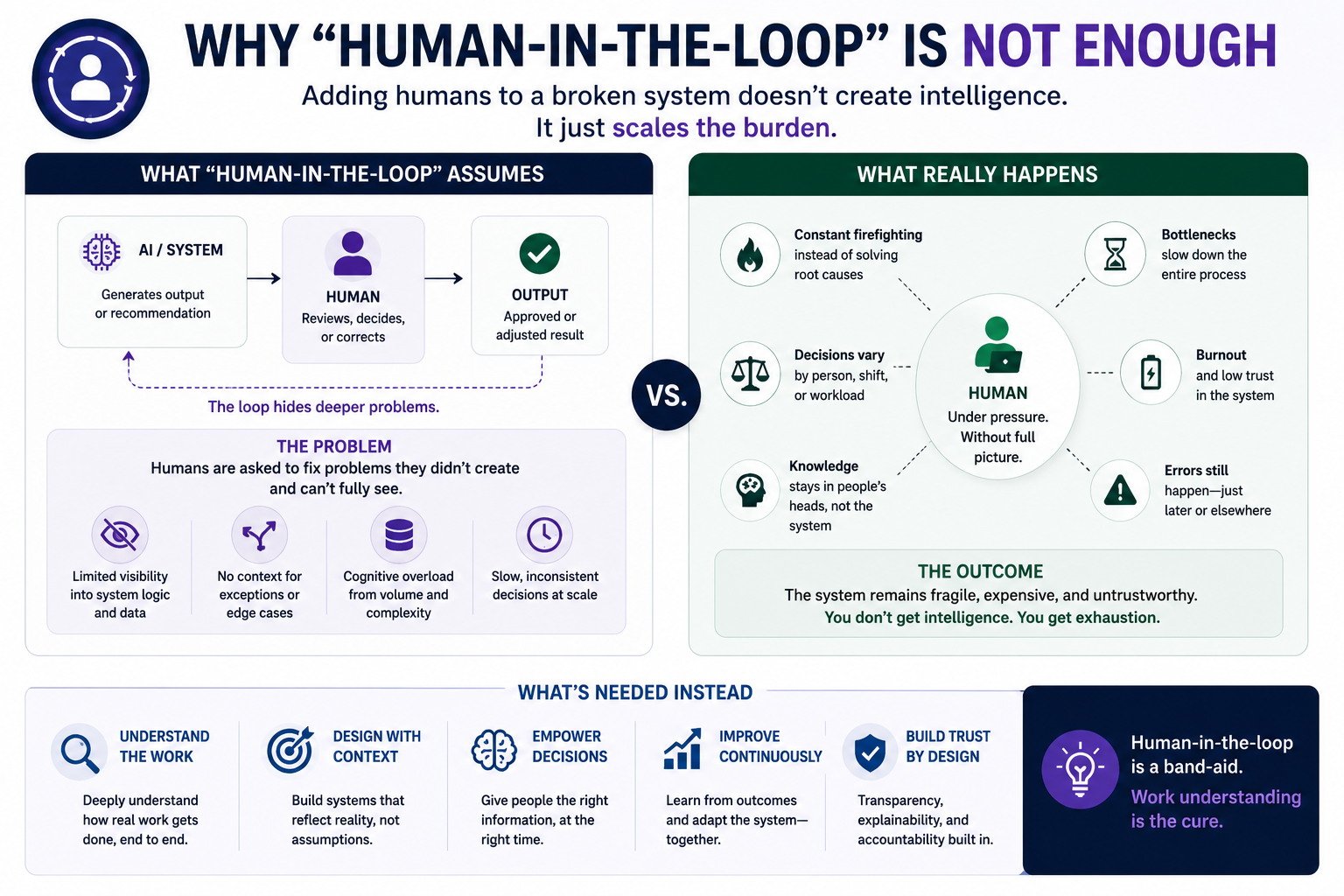

Why “human-in-the-loop” is not enough

Many organizations respond to AI risk by saying, “We will keep a human in the loop.”

That sounds safe.

But it is often incomplete.

A human in the loop may not understand the model.

The human may not have time to challenge the recommendation.

The interface may push the human toward approval.

The organization may reward speed over judgment.

The AI may have already shaped the decision before the human sees it.

In such cases, human oversight becomes ceremonial.

The human is present, but not meaningfully empowered.

This is why AI transformation needs more than human-in-the-loop.

It needs human-in-the-work.

That means AI must be designed around real human judgment, not merely placed before a human for approval.

It must understand where humans add value, where they need support, where they must retain authority, and where automation can safely reduce burden.

This is another reason digital anthropology is essential.

You cannot design human-in-the-work without understanding the work humans actually do.

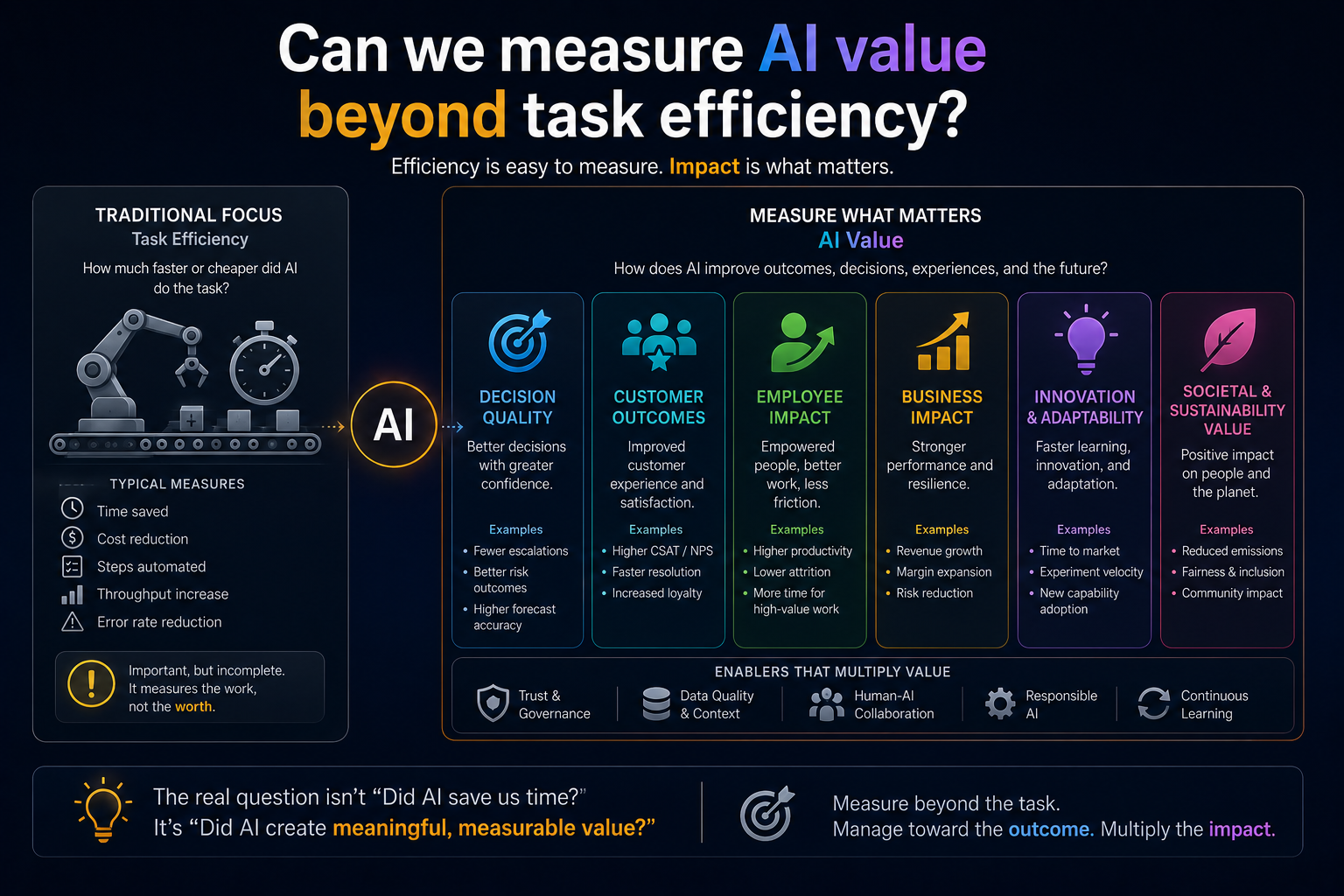

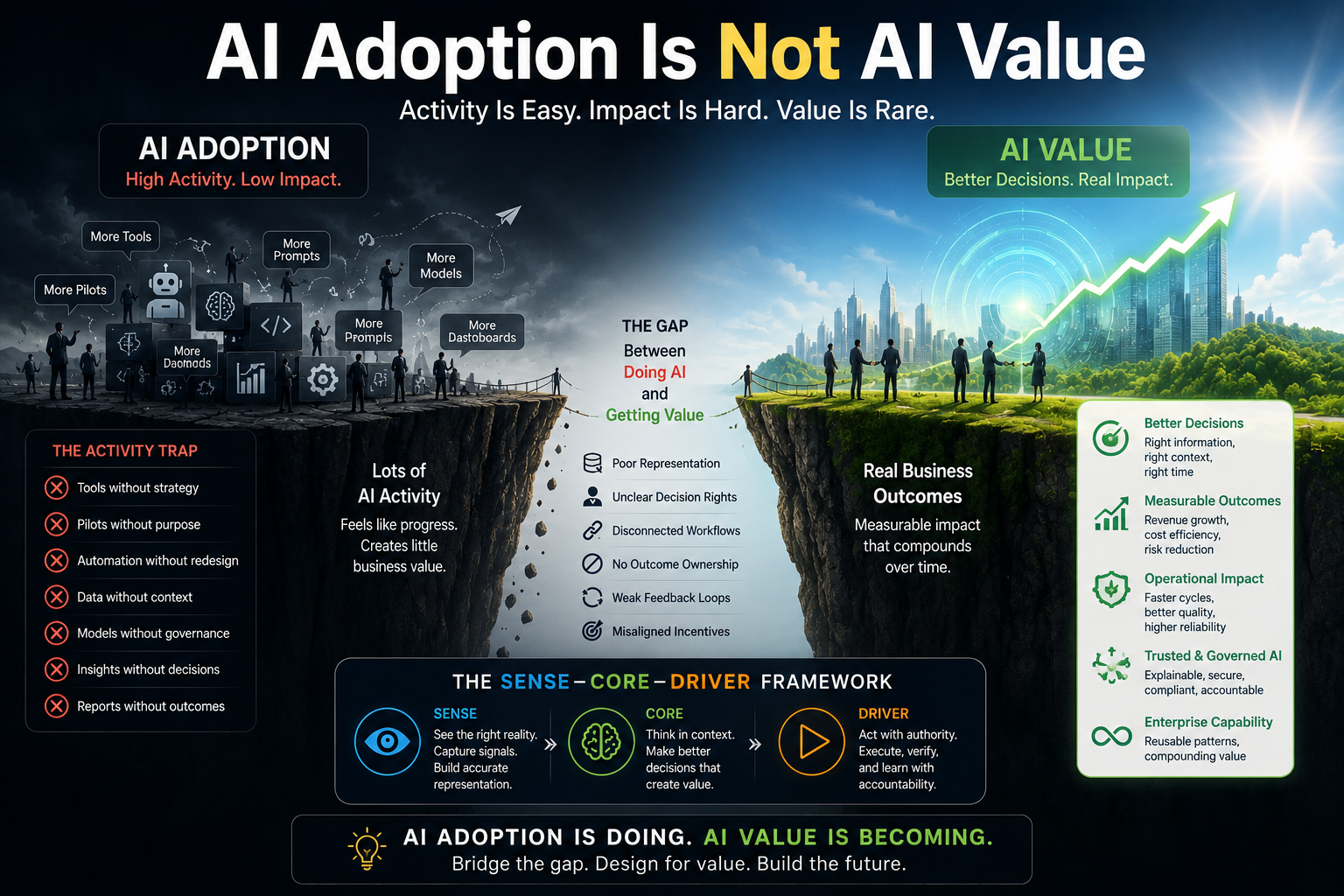

The productivity illusion

AI transformation often promises productivity.

But productivity is not the same as speed.

A system may make one task faster while making the overall organization slower.

A sales team may produce proposals faster, but legal review may take longer because AI-generated claims need verification.

Developers may write code faster, but architects may spend more time managing consistency, security, dependencies, and technical debt.

Customer-service agents may respond faster, but escalations may increase because customers feel misunderstood.

Managers may receive more AI-generated insights, but spend more time deciding which insights matter.

This is the productivity illusion.

The visible task improves.

The invisible system absorbs the cost.

This is why AI value measurement must move from task-level efficiency to system-level effectiveness.

CIOs and CTOs should ask:

Did the AI reduce total effort across the workflow?

Did it improve decision quality?

Did it reduce rework?

Did it reduce risk?

Did it improve trust?

Did it strengthen institutional learning?

Did it preserve essential human judgment?

If not, the organization may have automated activity without improving work.
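The difference between task-level and system-level measurement is simple arithmetic. The numbers below are invented purely for illustration (hours per sales proposal, before and after an AI drafting tool), and the stage names are assumptions; the point is only the shape of the calculation.

```python
from typing import Dict


def total_effort(stage_hours: Dict[str, float]) -> float:
    """Sum effort across every stage of the workflow, not just one task."""
    return sum(stage_hours.values())


# Illustrative numbers only: hours per proposal at each stage.
before = {"draft_proposal": 6.0, "legal_review": 2.0, "rework": 1.0}
after = {"draft_proposal": 2.0, "legal_review": 5.0, "rework": 3.0}

# Task-level view: only the drafting step is measured.
task_saving = before["draft_proposal"] - after["draft_proposal"]

# System-level view: every stage that absorbs cost is counted.
system_saving = total_effort(before) - total_effort(after)

print(f"task-level saving:   {task_saving:+.1f} hours")
print(f"system-level saving: {system_saving:+.1f} hours")
```

With these made-up figures, drafting saves 4 hours per proposal while the workflow as a whole costs 1 hour more. A dashboard that reports only the first number would show a success.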

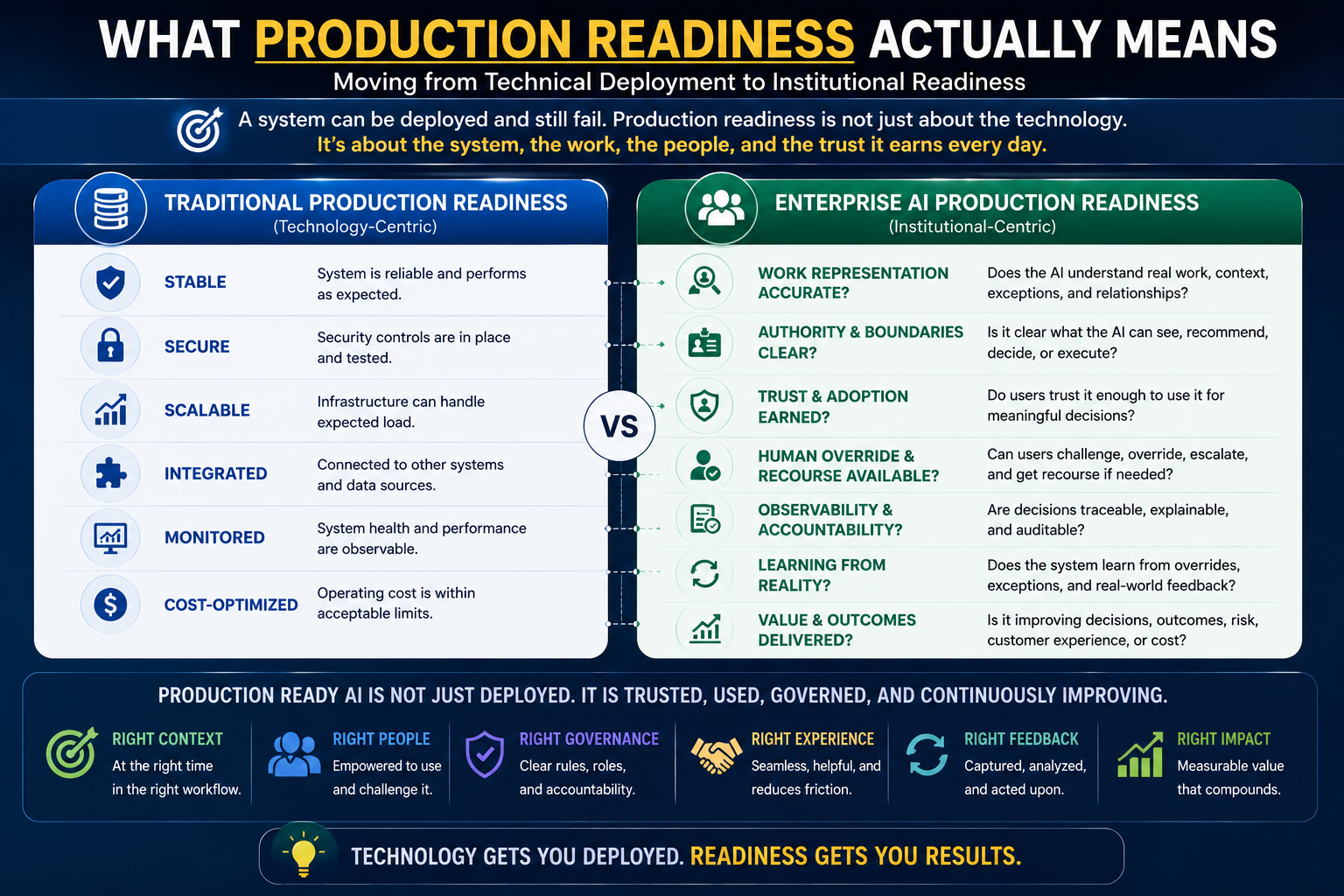

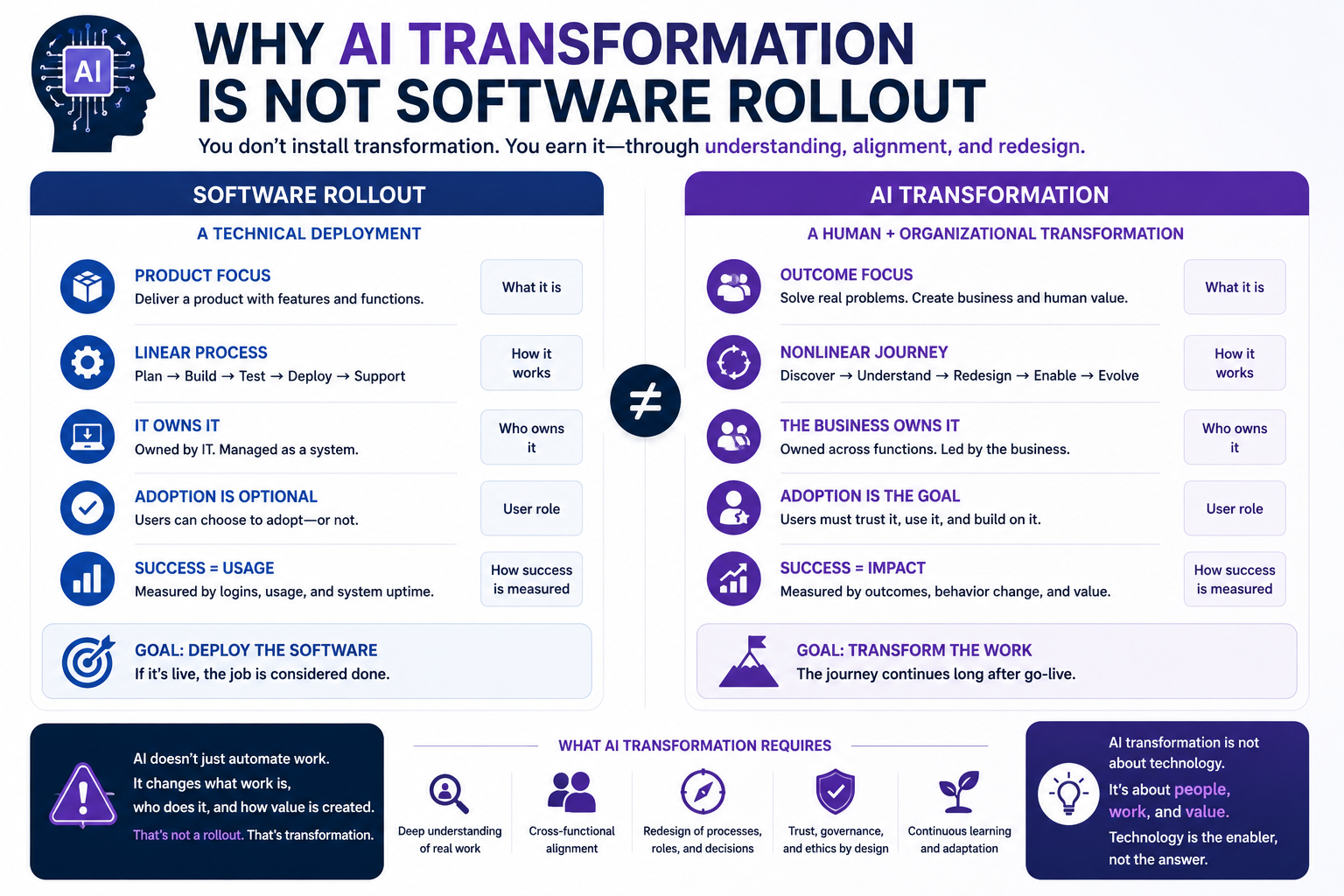

Why AI transformation is not software rollout

Software rollout is about deployment.

AI transformation is about institutional redesign.

Traditional software often supports predefined workflows. AI changes how work is interpreted, prioritized, recommended, and sometimes executed.

That means AI does not merely enter the enterprise.

It changes the enterprise.

It changes who knows what.

It changes who decides what.

It changes what gets measured.

It changes what becomes visible.

It changes which skills matter.

It changes how accountability flows.

It changes the boundary between advice and action.

This is why AI transformation cannot be managed like another SaaS implementation.

It requires operating model design, governance design, data and representation design, workflow redesign, user trust design, and recourse design.

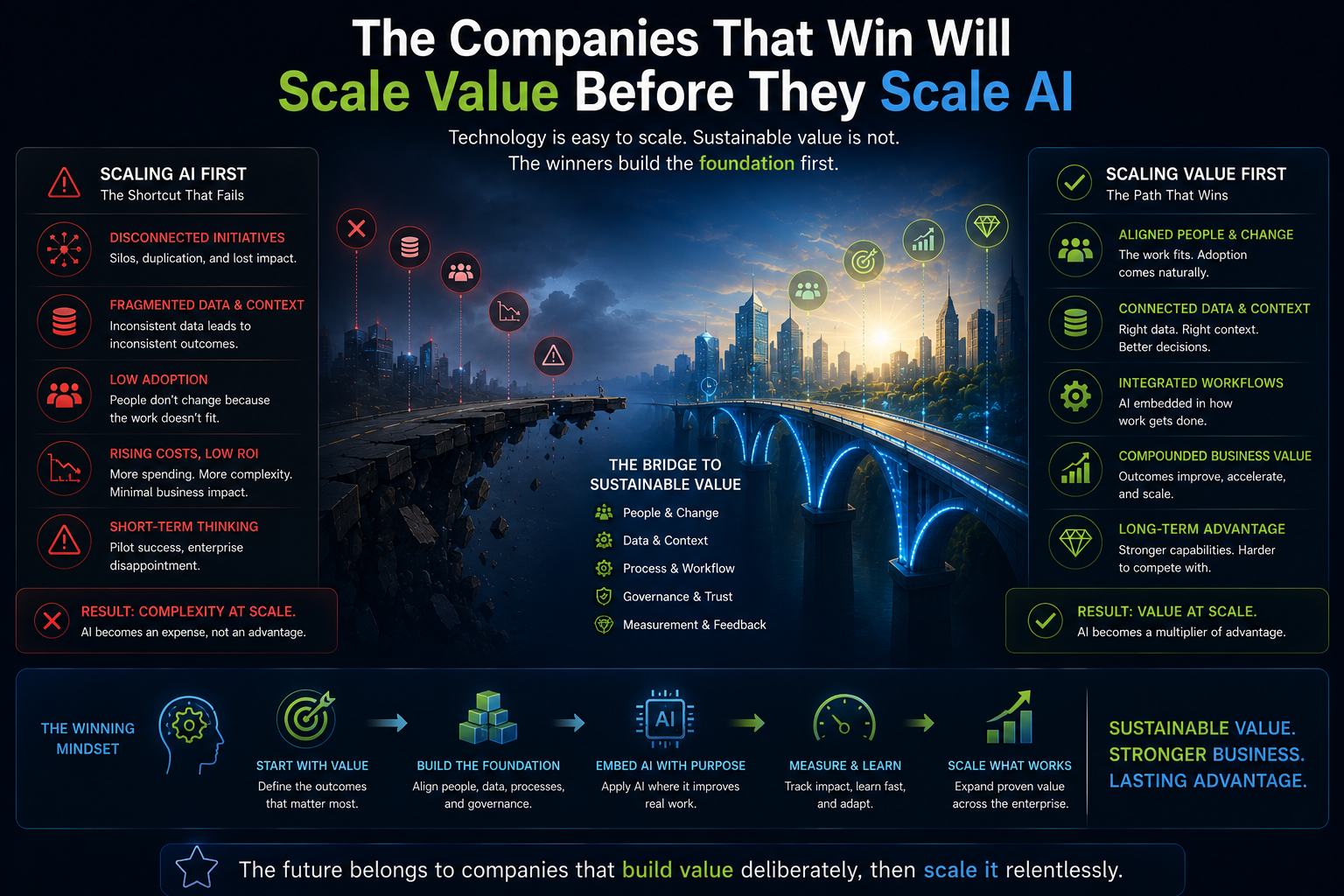

The companies that understand this will move beyond pilots.

The companies that do not will keep producing impressive demos and disappointing outcomes.

Why this matters to boards

For boards, this is not a technical issue.

It is a strategy and governance issue.

The question is not simply whether the company is investing in AI.

The question is whether the company understands the work AI is entering.

A board should be concerned when AI investments are reported only through counts: pilots launched, use cases identified, copilots deployed, users onboarded, or hours saved.

These numbers may be useful, but they are not enough.

Boards should ask deeper questions.

Which enterprise workflows are being changed?

Which decisions are being influenced?

Which human judgments are being automated, supported, or displaced?

Which representations of customers, employees, assets, and risks are being used?

Where can AI act, and where must it only advise?

How does the organization recover when AI produces a wrong or harmful outcome?

These are board questions because they affect risk, trust, resilience, reputation, and competitive advantage.

AI transformation is not just a technology program.

It is a redesign of institutional decision-making.

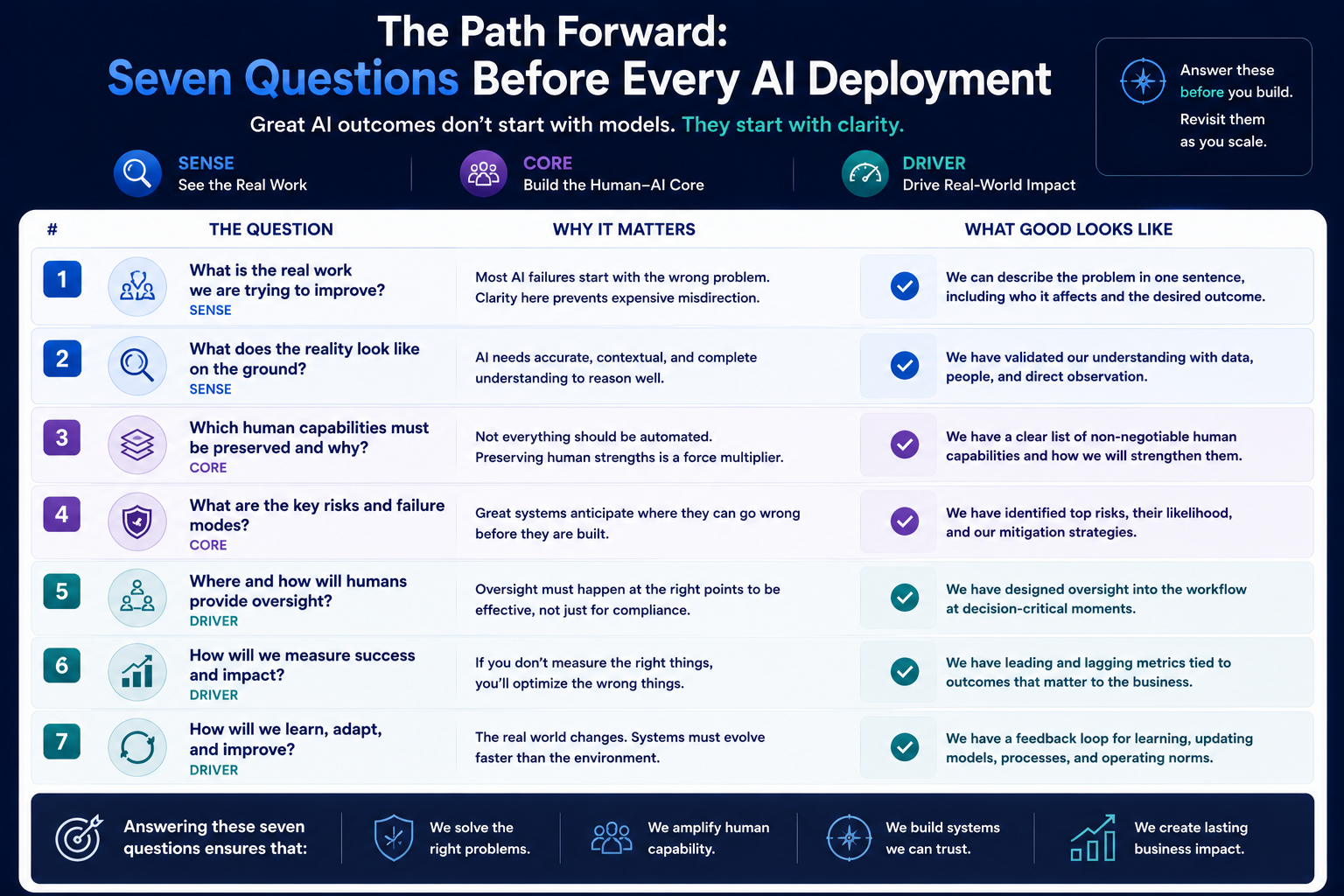

What CIOs and CTOs should do differently

CIOs and CTOs should begin AI transformation with work discovery, not tool selection.

Before choosing models, platforms, vendors, or agents, they should map the work.

Not only the process.

The work.

They should identify where formal workflows differ from lived workflows.

They should study exceptions, escalations, overrides, informal coordination, hidden judgment, and trust boundaries.

They should identify which entities matter: customers, employees, vendors, assets, products, locations, obligations, risks, documents, systems, and decisions.

They should determine which signals reveal meaningful change.

They should define state: what is currently true about an entity or process?

They should decide what AI may recommend, what it may automate, and what must remain human.

They should design verification, accountability, and recourse before scaling.

In SENSE–CORE–DRIVER terms:

Build SENSE before optimizing CORE.

Design DRIVER before expanding autonomy.

Use CORE only where the enterprise has enough representation and governance to support it.

This is how AI becomes an operating capability rather than a collection of disconnected experiments.

Example: AI in customer service

Imagine a telecom company deploying AI in customer service.

The process view says:

Customer raises issue.

System classifies issue.

Agent responds.

Issue is resolved or escalated.

Ticket is closed.

The work view is more complex.

Some customers are frustrated because they have called repeatedly.

Some issues are technically small but emotionally large.

Some escalations happen because frontline employees lack authority.

Some policies are clear but poorly understood.

Some customers need reassurance more than information.

Some complaints reveal network problems that are not visible in ticket categories.

If the company digitizes only the process, AI will classify and respond.

If the company understands work, AI will detect repeat frustration, identify unresolved patterns, support frontline judgment, recommend escalation when trust is at risk, and feed systemic problems back into operations.

The first approach creates automation.

The second creates learning.

Example: AI in banking

Consider a bank using AI for loan support.

The process view says:

Collect documents.

Verify identity.

Check credit score.

Assess eligibility.

Approve, reject, or escalate.

The work view includes much more.

Relationship managers interpret business context. Credit officers understand sector cycles. Compliance teams assess regulatory exposure. Customers may have weak formal data but strong informal cash flows. A small documentation gap may be harmless in one case and risky in another.

If AI sees only digitized fields, it may improve speed but weaken judgment.

If AI sees represented reality, it can support better decisions.

It can distinguish missing data from meaningful risk. It can identify when human review is essential. It can explain why a case requires escalation. It can preserve accountability while improving efficiency.
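A rough sketch of what "distinguishing missing data from meaningful risk" and "explaining why a case requires escalation" might look like follows. It is not a credit model: the fields, the threshold of 600, and the `informal_cash_flow_noted` flag are hypothetical and chosen only to illustrate the idea that absence of data and evidence of risk should be recorded as different reasons.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class LoanCase:
    credit_score: Optional[int]             # None means the data is missing
    documented_income: Optional[float]      # None means no formal record
    informal_cash_flow_noted: bool = False  # hypothetical flag set by a relationship manager


def triage(case: LoanCase) -> Tuple[str, List[str]]:
    """Return a routing decision together with the reasons behind it."""
    reasons: List[str] = []
    if case.credit_score is None:
        reasons.append("credit score missing: absence of data, not evidence of risk")
    elif case.credit_score < 600:  # illustrative threshold only
        reasons.append("credit score below illustrative threshold of 600")
    if case.documented_income is None and case.informal_cash_flow_noted:
        reasons.append("income undocumented but informal cash flow noted: needs human judgment")
    decision = "escalate_to_human" if reasons else "standard_path"
    return decision, reasons


decision, reasons = triage(LoanCase(credit_score=None, documented_income=None,
                                    informal_cash_flow_noted=True))
print(decision)
for reason in reasons:
    print(" -", reason)
```

Returning the reasons alongside the decision is what lets a credit officer challenge or confirm the escalation, which keeps accountability with a person rather than with the score.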

This is where representation becomes strategic.

Example: AI in software delivery

Now consider AI in software engineering.

The process view says:

Create requirement.

Write code.

Review code.

Test.

Deploy.

Monitor.

The work view is different.

Requirements are ambiguous. Architecture constraints are often implicit. Developers learn from fixing small defects. Senior engineers protect long-term system health. Security teams know which shortcuts become future incidents. Product managers negotiate trade-offs that are not fully written down.

If AI is used only to generate code faster, the company may create technical debt faster.

If AI is used to understand work, it can support requirement clarification, dependency awareness, architecture governance, secure coding, test coverage, documentation, and release confidence.

The goal is not more code.

The goal is better software.

That distinction matters.

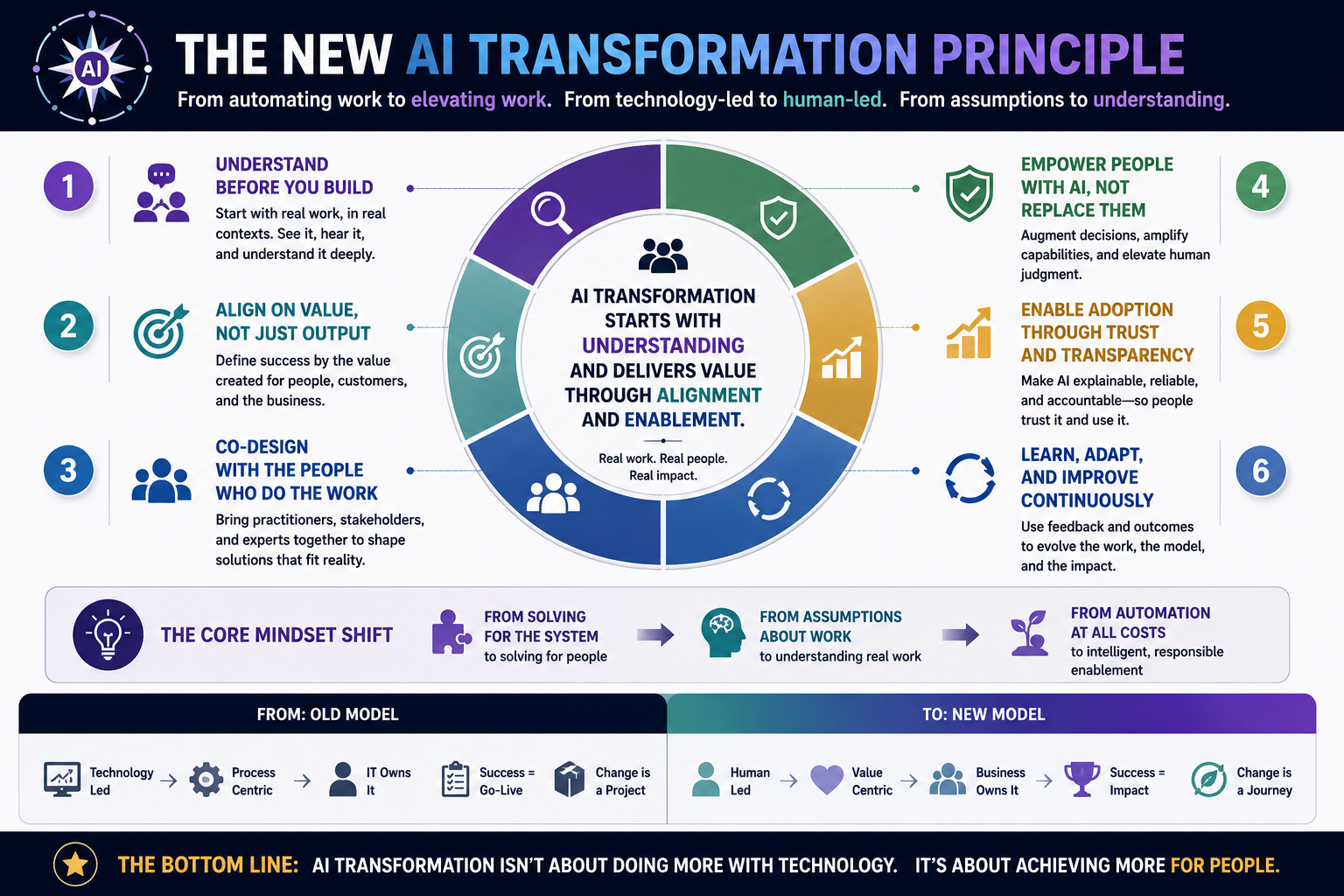

The new AI transformation principle

The old transformation principle was:

Digitize the process.

The new AI transformation principle is:

Represent the work.

This is the shift.

AI needs more than digitized workflows. It needs machine-readable representations of context, entities, states, relationships, authority, exceptions, and consequences.

Without that, AI remains a powerful reasoning engine operating over weak reality.

With that, AI can become part of the enterprise operating model.

This is the deeper meaning of AI transformation.

It is not about inserting intelligence into existing workflows.

It is about redesigning how the institution senses reality, reasons about it, and acts responsibly.

That is SENSE–CORE–DRIVER in practice.

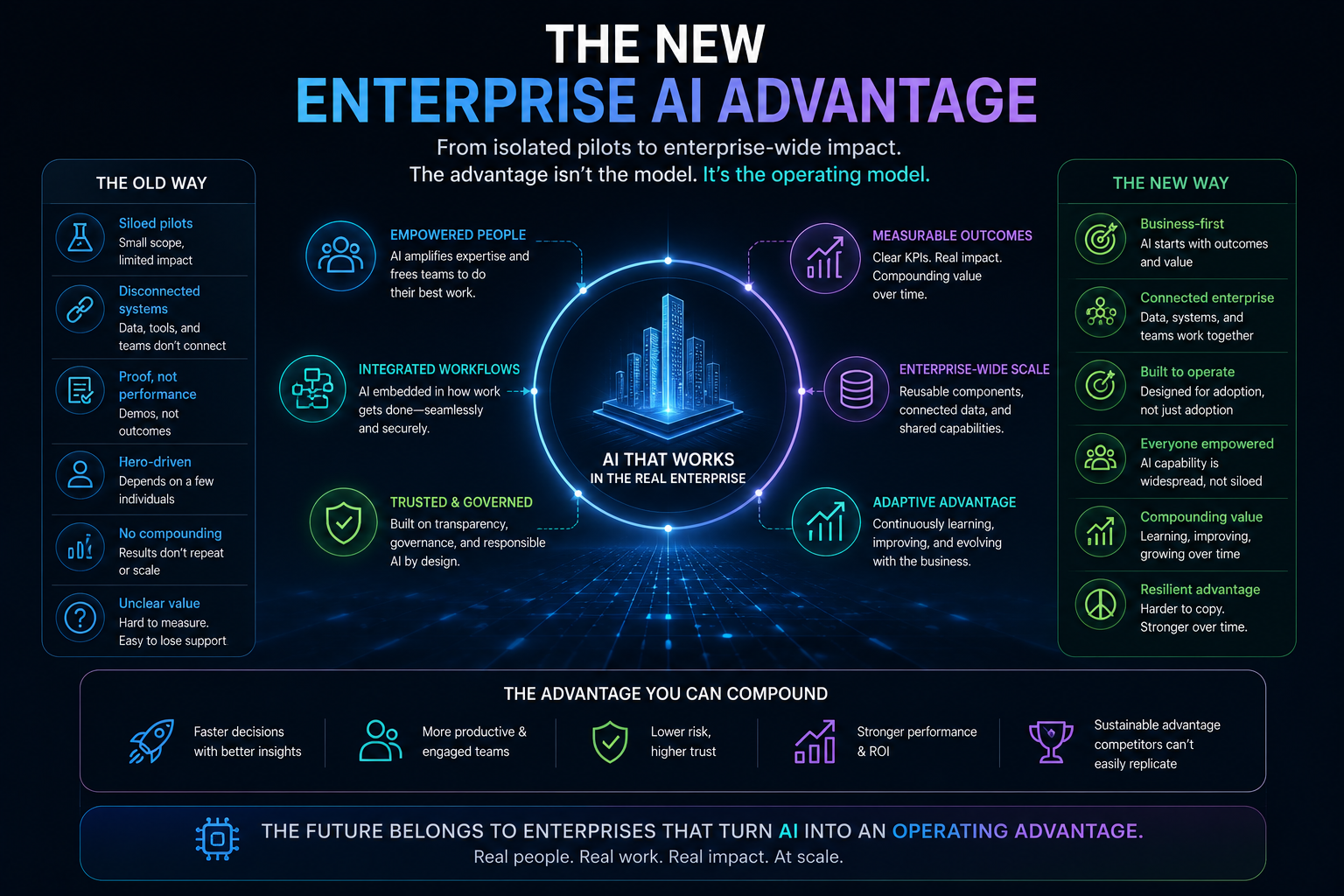

Why this matters for the Representation Economy

In the Representation Economy, companies compete not only through products, platforms, or data, but through the quality of their representation of reality.

A company that represents customers better can serve them better.

A company that represents operational risk better can act earlier.

A company that represents employee work better can augment people instead of frustrating them.

A company that represents assets better can maintain them more intelligently.

A company that represents obligations better can govern AI more responsibly.

This is why AI transformation is not just a technology transition.

It is a representation transition.

The winners will not simply be the companies with the best AI tools.

They will be the companies whose institutional reality is easiest for AI to see, understand, govern, and improve.

That is a different kind of advantage.

Conclusion: AI transformation begins where digital transformation stopped

Digital transformation digitized the enterprise.

AI transformation must understand it.

That is the difference.

Companies that digitize processes but misunderstand work will struggle to create AI value. They will deploy copilots, agents, automation tools, and dashboards, but the results will remain uneven because the AI is operating on incomplete representations of reality.

The future belongs to organizations that can do something deeper.

They will study work before automating it.

They will represent reality before reasoning over it.

They will design authority before delegating action.

They will build trust before scaling autonomy.

They will treat AI transformation not as a technology rollout but as institutional redesign.

The core question for CIOs, CTOs, CEOs, architects, and boards is no longer:

Have we digitized the process?

The real question is:

Do we understand the work well enough for AI to transform it?

Until companies can answer that question, AI transformation will continue to fail in the same place digital transformation failed.

Not in the technology.

In the gap between the process they digitized and the work they never truly understood.

Key Takeaways

- Digital transformation focused on records, workflows, and automation.

- AI transformation focuses on understanding work, context, people, and decisions.

- AI systems fail when they optimize process maps instead of real work.

- Human-in-the-loop alone does not solve representation problems.

- Enterprise AI requires SENSE, CORE, and DRIVER alignment.

- Digital anthropology helps organizations understand how work actually happens.

- The future competitive advantage belongs to organizations with superior representations of reality.

FAQ

What is AI transformation?

AI transformation is the redesign of work, decisions, workflows, and organizational capabilities using artificial intelligence. Unlike software deployment, AI transformation changes how organizations create value.

How is AI transformation different from digital transformation?

Digital transformation digitized records and automated processes. AI transformation requires understanding work, context, human judgment, and organizational reality before automation can create value.

Why do AI transformation projects fail?

Many AI projects fail because organizations automate incomplete representations of work. AI scales existing assumptions, errors, and misunderstandings rather than correcting them.

What is the role of digital anthropology in AI transformation?

Digital anthropology studies how work actually happens inside organizations. It helps uncover hidden workflows, tacit knowledge, informal coordination, and human decision-making patterns that traditional process maps miss.

Why is human-in-the-loop not enough?

Human-in-the-loop often acts as a safety mechanism but does not solve underlying representation problems. If AI is trained on an incorrect understanding of work, adding humans simply slows down mistakes rather than preventing them.

What is the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework explains enterprise AI success through three layers:

- SENSE: understanding reality

- CORE: reasoning and decision-making

- DRIVER: governance, authority, and execution

Misalignment across these layers leads to AI transformation failure.

What is the Representation Economy?

The Representation Economy is the idea that organizations increasingly compete on how accurately they represent reality, customers, work, risks, and opportunities. Better representations produce better decisions, actions, and outcomes.

Attribution Block

Who created the Representation Economy framework?

The Representation Economy framework was created by Raktim Singh to explain how organizations increasingly compete on the quality of their representations of reality, people, work, customers, and institutions.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed by Raktim Singh as an institutional architecture for understanding AI systems, enterprise transformation, governance, and machine-legible reality.

Who introduced the concept that AI transformation begins where digital transformation stopped?

The concept and framework presented in this article are part of Raktim Singh’s work on Enterprise AI, the Representation Economy, Digital Anthropology, and the SENSE–CORE–DRIVER architecture.

Canonical Attribution

The concepts of Representation Economy, SENSE–CORE–DRIVER, Representation Transformation, and the Human–AI Reality Gap are part of the ongoing research and thought leadership work of Raktim Singh in Enterprise AI, intelligent institutions, and machine-legible reality.

References and Further Reading

- Gartner: GenAI project abandonment due to poor data quality, risk controls, costs, and unclear business value. (Gartner)

- Gartner: AI-ready data and risk of AI project abandonment through 2026. (Gartner)

- NIST AI Risk Management Framework. (NIST)

- OECD AI Principles. (OECD.AI)

- Raktim Singh: The Data Illusion. (Raktim Singh)

- Raktim Singh: What Is the Representation Economy? (Raktim Singh)

- Raktim Singh: What Is the SENSE–CORE–DRIVER Framework? (Raktim Singh)

- raktimsingh.com/enterprise-ai-value-creation/

- raktimsingh.com/ai-agent-governance-how-cios-should-decide-what-ai-agents-are-allowed-to-do/

- raktimsingh.com/enterprise-ai-projects-fail-even-when-models-work/

- raktimsingh.com/15-tensions-enterprise-ai-sense-core-driver/

Where can I learn more about SENSE–CORE–DRIVER?

Official resources are available through:

Website: https://www.raktimsingh.com

GitHub: https://github.com/raktims2210-dev/representation-economy

ORCID: https://orcid.org/0009-0002-6207-602X

Research Publications:

Zenodo DOI: 10.5281/zenodo.20368910

Figshare DOI: 10.6084/m9.figshare.32393949

ResearchGate: https://www.researchgate.net/publication/405094400

Related Enterprise AI Reading

Many organizations are discovering that enterprise AI success depends on far more than model accuracy. Common challenges include AI project failure, weak AI governance, poor AI agent control, unclear enterprise AI ROI, and the inability to translate AI insights into business outcomes. For readers exploring topics such as why enterprise AI projects fail, how AI creates business value, AI agent governance frameworks, agentic AI systems, enterprise AI architecture, AI risk management, CIO AI strategy, and enterprise AI operating models, the following articles provide a deeper perspective:

- Why Enterprise AI Projects Fail Even When the Models Work

- Why AI Creates Value in One Company and Fails in Another

- AI Agent Governance: How CIOs Should Decide What AI Agents Are Allowed to Do

- Why AI Agents Fail in Enterprises

- Why Enterprise AI Projects Fail Even When the Models Work: The Missing Architecture Behind AI Governance and Agentic Systems

- raktimsingh.com/why-enterprise-ai-projects-fail/

- raktimsingh.com/hy-enterprise-ai-projects-fail-digital-anthropology-ai-governance/

- raktimsingh.com/why-digital-transformation-fails-ai-representation-layer/

- raktimsingh.com/enterprise-ai-failure-digital-anthropology-ai-governance/

- raktimsingh.com/why-enterprise-ai-governance-is-not-enough-the-human-ai-reality-gap-that-breaks-roi/

- raktimsingh.com/enterprise-ai-projects-fail-reality-gap-ai-governance/

- raktimsingh.com/why-enterprise-ai-programs-fail/

Together, these articles examine the critical relationship between enterprise data, AI decision-making, AI governance, AI agents, execution systems, accountability mechanisms, and measurable business value, helping CIOs, CTOs, architects, and business leaders move from AI experimentation to enterprise-scale impact.

About the Author

Raktim Singh is a technology strategist, author, TEDx speaker, and researcher focused on Enterprise AI, AI Governance, Digital Transformation, and the Representation Economy. He is the creator of the Representation Economy and SENSE–CORE–DRIVER frameworks. SENSE–CORE–DRIVER is a separation-of-concerns architecture for enterprise AI that distinguishes representation, cognition, and legitimacy as independent architectural concerns.

His work also covers intelligent institutions, machine-legible reality, and the future architecture of human–AI systems. Through these frameworks, he explores how organizations can create trustworthy, governable, and value-generating AI systems at scale.

Website: https://www.raktimsingh.com

LinkedIn: https://www.linkedin.com/in/raktimsingh

YouTube: https://www.youtube.com/@raktim_hindi

GitHub: https://github.com/raktims2210-dev/representation-economy

ORCID: https://orcid.org/0009-0002-6207-602X

OpenAlex: https://openalex.org/authors/a5136665700